Your Phone Is Quietly Selling Your Every Step. It’s Worse Than You Think.

I bought my own identity for $38.75. Not my Social Security number or my credit card; those are cheap and replaceable.

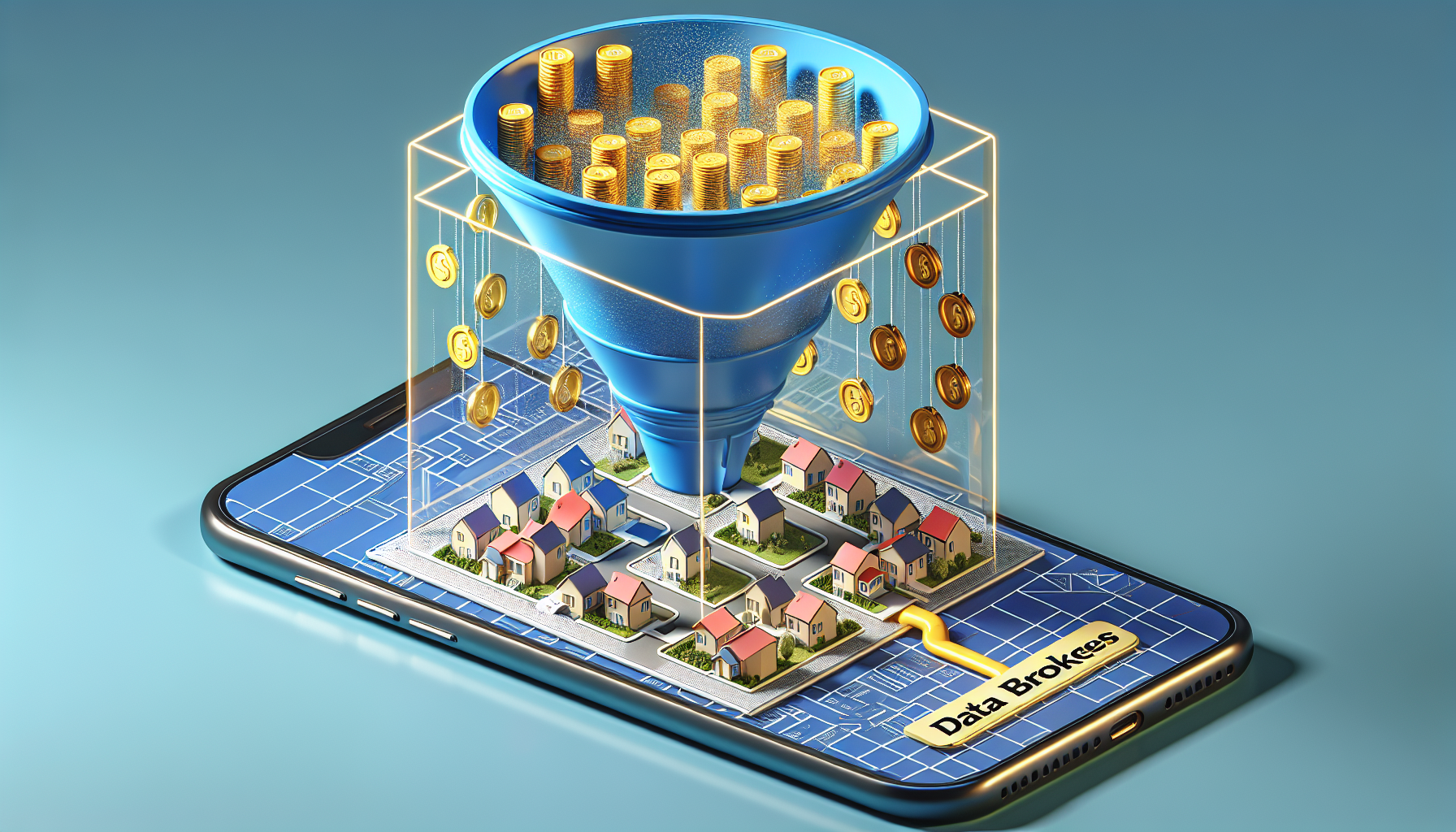

I bought something much more intimate: **my every footstep for the last three years**, neatly packaged in a CSV file from a data broker I’d never heard of.

What I saw in those 42,000 rows of data didn’t just make me uncomfortable; it made me realize that the "Privacy" settings on our iPhones and Androids are effectively a placebo.

While we’ve been arguing about AI chatbots and the latest social media filters, a multibillion-dollar industry has been **quietly liquidating the geometry of our lives** to the highest bidder.

This isn’t just about targeted ads for hiking boots because you visited a trailhead.

This is about the fundamental erosion of the "right to be nowhere," and it’s time we admit that **regulating consent is a failed experiment.** We don’t need better toggles; we need an absolute, scorched-earth ban on the sale of precise geolocation data.

The Myth of the "Anonymized" Data Point

The industry’s favorite defense is a linguistic trick.

They tell you the data is "anonymized" and "aggregated." They claim that "Device ID 8832-AF" isn't Riley Park; it’s just a sequence of coordinates.

But here’s the reality: **Your location is your fingerprint.** If a trail of pings rests at my house every night at 11 PM and turns up at my office every morning at 9 AM, it doesn't take ChatGPT 5 to figure out who I am.

It takes a middle-schooler with access to a residential directory.
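
To show how little effort that takes, here is a minimal Python sketch. It assumes each ping is a `(timestamp, latitude, longitude)` tuple, and the function name, the night-time window, and the sample coordinates are all made up for illustration. It is not any broker's actual method, just the obvious first guess: find the grid cell where a device spends its nights.

```python
from collections import Counter
from datetime import datetime

def infer_home_cell(pings, night_start=22, night_end=6, precision=3):
    """Return the grid cell a device occupies most often at night.

    pings: iterable of (iso_timestamp, lat, lon) tuples (assumed format).
    precision: decimal places kept when snapping coordinates to a grid.
    """
    cells = Counter()
    for timestamp, lat, lon in pings:
        hour = datetime.fromisoformat(timestamp).hour
        # Keep only pings recorded between night_start and night_end.
        if hour >= night_start or hour < night_end:
            cells[(round(lat, precision), round(lon, precision))] += 1
    if not cells:
        return None
    return cells.most_common(1)[0][0]

# Hypothetical pings for one "anonymous" device ID.
pings = [
    ("2024-03-01T23:05:00", 41.87811, -87.62980),
    ("2024-03-02T02:40:00", 41.87814, -87.62977),
    ("2024-03-02T09:10:00", 41.88425, -87.63245),  # daytime, ignored
    ("2024-03-02T23:30:00", 41.87809, -87.62983),
]

print(infer_home_cell(pings))  # (41.878, -87.63)
```

Look that one cell up in a residential directory and the "anonymous" device has a name.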

When I looked at my own data, I could see the exact moment I realized I was lost in a suburb of Chicago in 2024.

I could see the three separate visits to a specialist clinic that I hadn't even told my parents about.

**There is no such thing as anonymous movement** in a world where we all return to a specific 10x10 square of "home" every night.

The "Privacy Toggle" Is a Psychological Placebo

In the spring of 2026, we like to think we’ve won the privacy war. Apple’s App Tracking Transparency (ATT) was supposed to be the "kill switch" for this industry.

We click "Ask App Not to Track," and we feel a little burst of digital dopamine, thinking we’ve pulled the curtains shut.

We haven't. We’ve just forced the brokers to get more creative. Many apps now use **"Server-to-Server" (S2S) tracking** that bypasses the device’s internal privacy gates entirely.

If you give a weather app permission to see your location "to provide local forecasts," that data is often whisked away to a third-party server where the OS can no longer see or stop what happens to it.

The industry has moved from the front door to the basement.

They aren't just tracking you through your IDFA (Identifier for Advertisers) anymore; they’re using **probabilistic fingerprinting**—combining your battery level, your screen brightness, and your IP address to recreate your identity even after you’ve told them to stop.
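
A toy version of that idea fits in a few lines of Python. This is only a sketch under loud assumptions: the signal names, the thresholds, and the weights are invented for illustration, and real fingerprinting systems reportedly combine far more signals with statistical models. The point is just that no advertising ID is needed to guess that two snapshots came from the same phone.

```python
def match_score(a, b):
    """Crude similarity between two signal snapshots, from 0.0 to 1.0.

    Each snapshot is a dict with hypothetical keys:
    "ip" (IPv4 string), "battery" (percent), "brightness" (0.0 to 1.0).
    The weights below are arbitrary, chosen only for illustration.
    """
    score = 0.0
    # Same first three octets suggests the same network.
    if a["ip"].rsplit(".", 1)[0] == b["ip"].rsplit(".", 1)[0]:
        score += 0.5
    # Battery level drifts slowly over a few minutes.
    if abs(a["battery"] - b["battery"]) <= 5:
        score += 0.25
    # Screen brightness tends to stay where the user left it.
    if abs(a["brightness"] - b["brightness"]) <= 0.1:
        score += 0.25
    return score

# Snapshot taken before the user tapped "Ask App Not to Track" ...
before = {"ip": "203.0.113.42", "battery": 67, "brightness": 0.55}
# ... and one taken a few minutes later with no advertising ID attached.
after = {"ip": "203.0.113.42", "battery": 66, "brightness": 0.55}
# An unrelated device on another network.
stranger = {"ip": "198.51.100.7", "battery": 23, "brightness": 0.90}

print(match_score(before, after))     # 1.0
print(match_score(before, stranger))  # 0.0
```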

**The "Ask Not to Track" button has become a digital "Close Door" button in an elevator**—it makes us feel in control while the system does exactly what it was designed to do.

The Framework: The Trinity of Spatial Surveillance

To understand why this is a systemic threat rather than a series of isolated leaks, we need to look at **The Trinity of Spatial Surveillance.** This is the three-step process by which your morning walk becomes a commodity.

1. The "Utility" Harvest

This is the stage where you trade your coordinates for a service. It’s the weather app, the coupon finder, or the "find my car" utility. You grant permission because the trade feels fair in the moment.

You want to know if it’s going to rain, so you hand over the keys to your whereabouts. The app provides the service, but the **location data is the real product.**

2. The Broker Bridge

Once the data leaves your phone, it enters the "Shadow Economy." Large aggregators (companies you’ve never heard of, like Near, X-Mode, or Cuebiq) buy these pings in bulk.

They don’t just buy from one app; they buy from thousands. This is where the **"Aggregation Trap"** happens.

One app knows you went to the gym; another knows you went to the pharmacy. The broker is the only one who knows you went to both, and then went to a divorce attorney’s office.
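
Mechanically, the trap is nothing more than a join on a shared device ID. The sketch below assumes each app sells rows shaped like `(device_id, timestamp, place)`; the app names, the row format, and the `merge_feeds` helper are hypothetical, and real feeds carry raw coordinates rather than tidy place labels.

```python
from collections import defaultdict

def merge_feeds(feeds):
    """Combine per-app ping feeds into one timeline per device ID.

    feeds: dict mapping app name -> list of (device_id, timestamp, place).
    Returns a dict mapping device ID -> time-sorted list of visits.
    """
    timeline = defaultdict(list)
    for app_name, rows in feeds.items():
        for device_id, timestamp, place in rows:
            timeline[device_id].append((timestamp, place, app_name))
    for visits in timeline.values():
        visits.sort()  # ISO timestamps sort chronologically as strings
    return dict(timeline)

# Each app sees one harmless-looking visit (made-up data).
feeds = {
    "fitness_app": [("8832-AF", "2024-05-06T07:00", "gym")],
    "coupon_app": [("8832-AF", "2024-05-06T12:30", "pharmacy")],
    "weather_app": [("8832-AF", "2024-05-06T15:00", "divorce attorney's office")],
}

profile = merge_feeds(feeds)["8832-AF"]
places = [place for _, place, _ in profile]
print(places)
```

No single app in that example holds the full day. Only the party that bought all three feeds does.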

3. The Weaponized Insight

This is the final stage. The data is sold to hedge funds (to track foot traffic at retail stores), political campaigns (to see who attended a protest), or even foreign intelligence services.

By the time the data reaches this stage, your **initial "consent" is three layers deep and completely irrelevant.** You agreed to a weather forecast; you did not agree to have your political leanings modeled by a private equity firm in 2027.

Why "Consent" Is the Wrong Solution

For the last decade, our legislative approach to privacy has been built on the "Notice and Consent" model. It’s the reason you spend half your day clicking "Accept All" on cookie banners.

But when it comes to precise geolocation, **consent is a fiction.**

The power imbalance is too great. If you need a navigation app to get to a job interview, you "consent" to whatever terms it puts in front of you.

If your insurance company offers a discount for "safe driving" tracking, you "consent" because you’re struggling with inflation in 2026. This isn't a free choice; it’s a **coerced transaction.**

Moreover, your location data doesn't just belong to you. When your phone pings, it often reveals the location of everyone you’re with.

If I track a thousand people at a church, I’ve effectively tracked the church itself.

**Privacy isn't just an individual right; it’s a collective safety requirement.** By allowing the sale of this data, we are allowing the wholesale mapping of our social fabric.

The Case for a Total Ban on Precision Sale

We need to stop trying to "fix" the data broker industry and start dismantling it. The solution is simple: **Ban the sale, transfer, or licensing of precise geolocation data to third parties.**

This wouldn't break your GPS. Google Maps would still work. Your weather app would still know it’s raining.

But the data would be **legally "toxic."** It would be like nuclear waste—useful for the person generating it, but illegal to sell to the neighbors.

If we move to a "Usage-Only" model, we break the Broker Bridge. If an app can’t sell your data, the incentive to harvest it disappears.

We would see a sudden, "miraculous" shift in app design where developers realize they don’t actually need to know your coordinates within three meters to tell you it’s Tuesday.
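
The arithmetic behind that claim is simple. One degree of latitude is roughly 111 km, so every decimal place an app drops from a coordinate makes the fix about ten times coarser. The Python sketch below uses that approximation; the `coarsen` and `lat_resolution_m` helpers are hypothetical names, and east-west distances shrink further away from the equator, so treat the numbers as ballpark figures only.

```python
METERS_PER_DEGREE_LAT = 111_320  # approximate length of one degree of latitude

def coarsen(lat, lon, decimals):
    """Snap a coordinate to a coarser grid by rounding both values."""
    return round(lat, decimals), round(lon, decimals)

def lat_resolution_m(decimals):
    """Approximate north-south grid spacing, in meters, at a given rounding."""
    return METERS_PER_DEGREE_LAT / 10 ** decimals

# Hypothetical precise fix, and what is left after rounding.
precise = (41.87811, -87.62980)
for decimals in (5, 3, 1):
    print(decimals, coarsen(*precise, decimals), f"~{lat_resolution_m(decimals):,.1f} m")
```

At five decimal places the grid is about a meter wide, which is enough to pick out a doorway. At one decimal place it is about 11 km wide, which is still plenty for a forecast.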

We’ve done this before with other types of data. You can’t legally sell someone’s medical records or their library checkout history in many jurisdictions.

Why is the fact that you visited a reproductive health clinic or a domestic violence shelter considered **"fair game" for the open market?**

The Right to Be Nowhere

As we move toward 2027, the "Digital Twin" of our world is becoming more detailed than the world itself. Every step we take is being logged, indexed, and auctioned.

We are living in a panopticon where the walls are made of code and the guards are algorithms trying to predict our next move.

The most radical thing you can do in 2026 is to be unfindable. But that shouldn't require leaving your phone in a lead-lined box or living in a cave. It should be a **default setting of citizenship.**

We have to stop treating our movements as "exhaust" and start treating them as "intellectual property" of the soul. Your path through the world is the story of your life.

It’s your mistakes, your secret romances, your grief, and your quietest moments of reflection. **That story shouldn't be for sale for $38.75.**

Have you ever looked into what data brokers have on you, or do you prefer to stay in the dark? Let’s talk in the comments below about whether "privacy" even means anything in 2026.

---