State of AI in 2026: LLMs, Coding, Scaling Laws, China, Agents, GPUs, AGI | Lex Fridman Podcast #490

I spent four hours last night listening to Lex Fridman’s latest deep dive on the state of AI in 2026.

While most listeners were probably dazzled by the talk of AGI timelines, I was staring at my terminal, watching a Kubernetes cluster struggle to orchestrate a swarm of **Claude 4.6 agents**.

The realization hit me somewhere around the two-hour mark: the era of "just add more parameters" is officially dead.

If you’re still trying to solve engineering problems by refining your system prompts for **ChatGPT 5**, you’re already behind the curve.

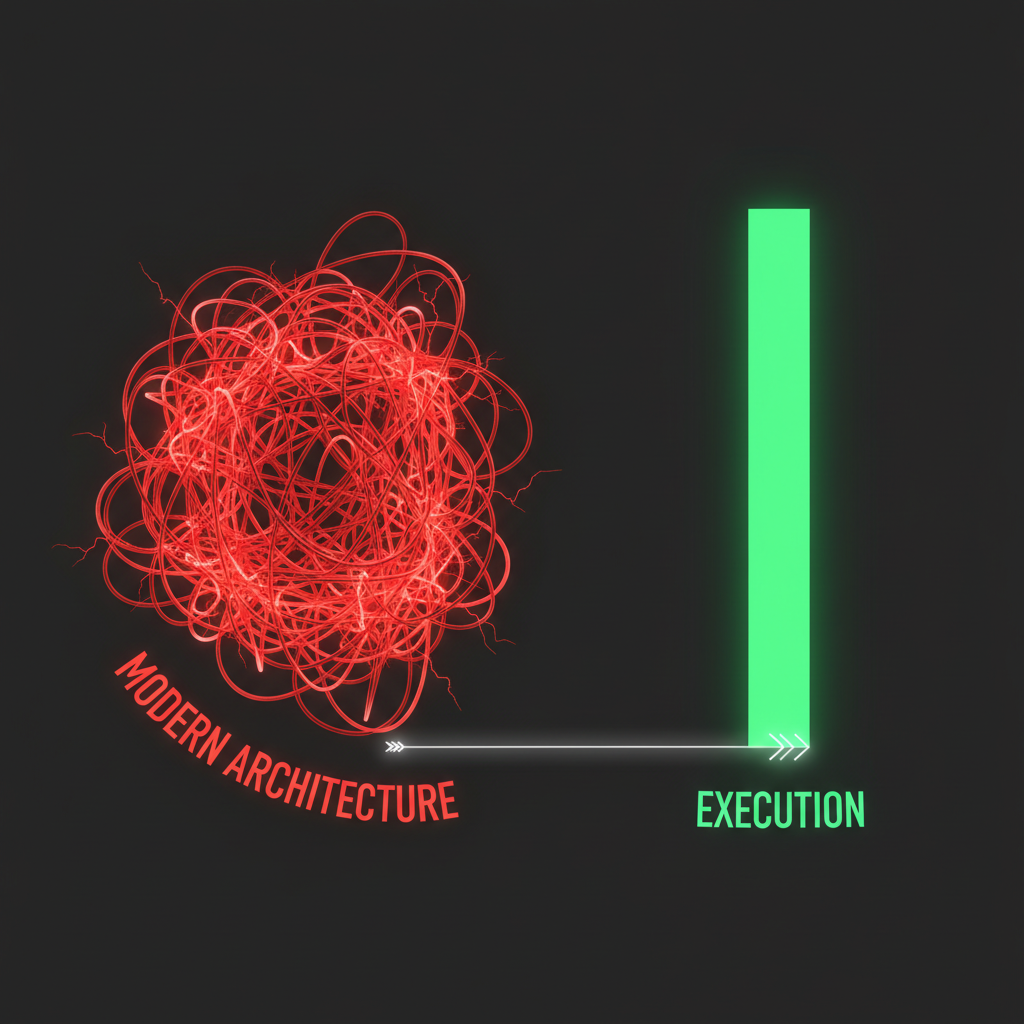

We’ve reached the point where raw intelligence is a commodity, but execution is a nightmare.

Lex #490 wasn't just a podcast; it was a post-mortem for the way we’ve built software for the last three years.

The Great Scaling Myth of 2026

For the better part of 18 months, we’ve been told that scaling laws were as unbreakable as the laws of physics.

The industry's collective bet was that if we just threw enough H200s and Blackwell B200s at the problem, the models would eventually "reason" their way out of hallucination.

In the podcast, the discussion took a sharp turn toward the "Data Wall." We’ve officially run out of high-quality human text to scrape, and the synthetic data loops we relied on in 2025 are starting to show signs of model collapse.

I see this every day in my infrastructure work.

When I ask **Gemini 2.5** to refactor a legacy Go service, it doesn't just give me code; it gives me a slightly degraded version of the code it saw in its own training set six months ago.

We aren't scaling up anymore; we’re scaling sideways.

Why Your "Coding" Skills Are Now "Orchestration" Skills

One of the most provocative segments of the interview focused on the death of the "Senior Software Engineer" as we knew it in 2024.

The guests argued that by early 2027, the act of typing characters into a VS Code instance will be seen as a low-level industrial task, akin to manual assembly line work.

I’ve felt this shift in my own workflow over the last few months. I no longer write functions; I define **state machines for agents**.

When I’m deploying a new microservice, I’m not worried about the syntax.

I’m worried about whether my agentic loop has the correct permissions to modify the Terraform files without triggering a circular dependency.

**Claude 4.6** is brilliant at the logic, but it’s the "glue" that breaks—the infra-to-agent interface that Lex’s guests highlighted as the new bottleneck.
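To make "state machines for agents" concrete, here is a minimal sketch of what one could look like. Everything in it is an assumption for illustration: the state names, the `WRITABLE` permission table, the `infra/terraform/` path prefix, and the replan cap are hypothetical and not taken from the podcast or from any particular agent framework. The idea is simply that the agent may only write files while it is in a state that explicitly allows it, and that a loop between verifying and replanning is cut off after a fixed number of rounds.

```python
from enum import Enum, auto


class State(Enum):
    PLAN = auto()
    APPLY = auto()
    VERIFY = auto()
    DONE = auto()
    FAILED = auto()


# Allowed transitions; anything not listed here is rejected.
TRANSITIONS = {
    State.PLAN: {State.APPLY, State.FAILED},
    State.APPLY: {State.VERIFY, State.FAILED},
    State.VERIFY: {State.DONE, State.PLAN, State.FAILED},
}

# Hypothetical permission table: path prefixes the agent may write in each state.
WRITABLE = {
    State.APPLY: ("infra/terraform/",),
}


class AgentLoop:
    def __init__(self, max_replans=2):
        self.state = State.PLAN
        self.replans = 0
        self.max_replans = max_replans

    def can_write(self, path):
        """True only if the current state grants write access to this path."""
        prefixes = WRITABLE.get(self.state, ())
        return any(path.startswith(prefix) for prefix in prefixes)

    def advance(self, target):
        """Move to target if the transition is legal; cap VERIFY -> PLAN loops."""
        if target not in TRANSITIONS.get(self.state, set()):
            raise ValueError(
                f"illegal transition {self.state.name} -> {target.name}"
            )
        if self.state is State.VERIFY and target is State.PLAN:
            self.replans += 1
            if self.replans > self.max_replans:
                # Too many replanning rounds: fail instead of cycling forever.
                target = State.FAILED
        self.state = target
        return self.state
```

In this sketch the model never decides for itself whether it may touch a Terraform file; the loop asks `can_write` first, and `DONE` and `FAILED` are terminal because they have no outgoing transitions.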

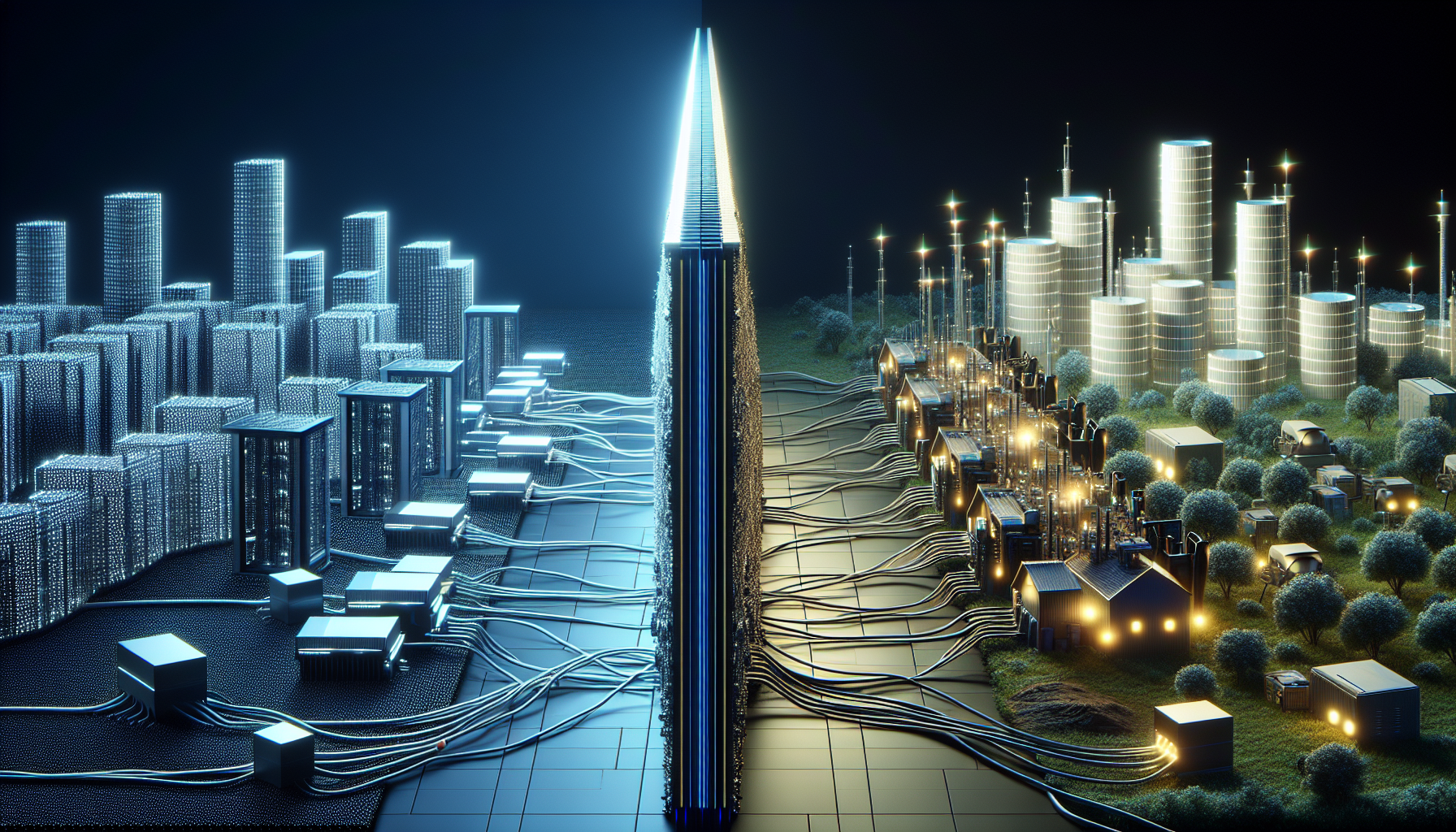

The Compute Iron Curtain: China vs. The World

The geopolitical discussion in #490 was uncharacteristically blunt. We’re no longer talking about a "race"; we’re talking about two completely divergent evolutionary paths for AI.

While the US has focused on massive, centralized clusters and **AGI safety**, the reports out of China suggest a pivot toward hyper-efficient "Small Language Models" (SLMs) optimized for edge computing and autonomous hardware.

They aren't trying to build a god in a data center; they’re trying to build a brain for every drone and factory robot.

This creates a massive headache for infrastructure engineers like me. We’re moving toward a world where the "global internet" is split by AI compatibility.

If your agent is running on a Western stack, it might literally be unable to communicate with a manufacturing API optimized for a different cognitive architecture.

The GPU Gold Rush Is Transitioning to a Power Crisis

Lex asked a pointed question about the "GPU ceiling." The answer was sobering: we aren't limited by silicon anymore; we’re limited by the grid.

In early 2026, we saw the first major "AI Brownouts" in Northern Virginia.

The podcast guests confirmed what we’ve been whispering in Slack channels for months: the cost of a single **ChatGPT 5** query isn't just counted in cents anymore; it’s counted in measurable fractions of a kilowatt-hour.

As an infra guy, this changes everything. My job is no longer just about "uptime"; it’s about **thermal efficiency**.

We are beginning to see the rise of "Carbon-Aware Inference," where agents intentionally delay non-critical reasoning tasks until renewable energy production peaks.

If you aren't thinking about the energy cost of your agentic loops, your CFO is going to fire you by the end of the year.
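A rough sketch of the carbon-aware idea, under stated assumptions: the hourly `renewable_share` forecast, the `0.6` threshold, and the `Task` fields are all hypothetical, and a real setup would pull the forecast from a grid operator or a carbon-intensity data source rather than a hard-coded dictionary. Critical tasks always run; everything else waits for a green hour unless its deadline has arrived.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    critical: bool
    deadline_hour: int  # latest hour (0-23) by which the task must start


def schedule(tasks, renewable_share, now_hour, threshold=0.6):
    """Split tasks into (run_now, deferred) for the current hour.

    renewable_share maps hour -> forecast fraction of renewable generation.
    """
    run_now, deferred = [], []
    green_now = renewable_share.get(now_hour, 0.0) >= threshold
    for task in tasks:
        if task.critical or green_now or now_hour >= task.deadline_hour:
            run_now.append(task.name)
        else:
            deferred.append(task.name)
    return run_now, deferred


def next_green_hour(renewable_share, now_hour, threshold=0.6):
    """First forecast hour at or after now_hour that meets the threshold."""
    for hour in sorted(h for h in renewable_share if h >= now_hour):
        if renewable_share[hour] >= threshold:
            return hour
    return None
```

The deadline check matters as much as the threshold: without it, a cloudy week would starve deferred work indefinitely.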

Is AGI Actually Here, or Just Really Good at Faking It?

The "State of AI 2026" wouldn't be complete without the AGI debate. The consensus in the podcast was that we’ve moved the goalposts so many times that we didn't notice we had already crossed the line.

If an AI can manage a $50 million infrastructure budget, optimize database queries in real time, and write its own security patches, does it matter if it "feels" like a person?

We’ve reached **Functional AGI**.

I’ve had moments where a **Claude 4.6** instance suggested a network topology change that I hadn't considered—a move that saved us $12,000 a month in egress fees.

It wasn't "predicting the next token." It was analyzing a complex system and finding a needle in a haystack of YAML. That’s reasoning, whether the skeptics like it or not.

The Reality Check: Agents Are Still Idiots

Despite all the hype in Lex #490, there was a necessary moment of silence for the things AI still can't do. The "last mile" of autonomy is still a disaster.

We have models that can pass the Bar Exam but can't figure out why a Kubernetes pod is stuck in `CrashLoopBackOff` if the error message is slightly ambiguous.

The podcast highlighted that **Reliability Engineering** is the only job security left.

AI can dream up the architecture, but it can't "feel" the latency. It doesn't get that "gut feeling" when a deployment feels too fast to be successful.

We are the guardians of reality in a world where AI is increasingly hallucinating "perfect" solutions that don't survive first contact with production traffic.

How to Pivot Your Career Before 2027

If you’re a developer or a tech pro, Lex #490 provided a very clear roadmap for survival. Stop trying to be the person who "knows" things. Start being the person who **validates** things.

1. **Become an Agent Architect:** Learn how to chain models together. A single model is a toy; a swarm of models with specialized roles is an employee.

2. **Master the Context Window:** The "Skill of 2026" isn't prompting; it’s **context engineering**. How do you feed 2 million tokens of documentation into a model without it losing the thread?

3. **Focus on Local Inference:** As energy costs and privacy concerns rise, the real money is in running 7B models that perform like GPT-4 on local hardware.
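As one illustration of the context engineering point in the list above, here is a minimal sketch of packing documentation chunks into a fixed token budget. The relevance scores are assumed to come from somewhere else (a retriever, a reranker, or a human), and `estimate_tokens` is a deliberately crude word count standing in for a real tokenizer, so treat the numbers as approximate.

```python
def estimate_tokens(text):
    # Crude stand-in: whitespace-separated words. A real tokenizer will differ.
    return len(text.split())


def pack_context(chunks, budget):
    """Pick chunks that fit within budget, preferring higher relevance.

    chunks is a list of (relevance_score, text) pairs. The highest-scoring
    chunks are chosen first, then returned in their original document order
    so the model still reads them in sequence.
    """
    ranked = sorted(enumerate(chunks), key=lambda item: item[1][0], reverse=True)
    chosen, used = [], 0
    for index, (_score, text) in ranked:
        cost = estimate_tokens(text)
        if used + cost <= budget:
            chosen.append(index)
            used += cost
    return [chunks[i][1] for i in sorted(chosen)]
```

Restoring the original order after selection is a design choice: a greedy pick by score alone tends to shuffle the documentation, which is one plausible way a model "loses the thread".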

The Cognitive Debt We’re Accruing

My biggest takeaway from the four-hour marathon wasn't about GPUs or China. It was about the "Cognitive Debt" we’re building.

We are offloading so much of our critical thinking to these models that we’re losing the ability to debug the foundations.

If the AI writes the code, and the AI reviews the code, and the AI deploys the code... who is left to understand the code when the power goes out?

Lex #490 was a celebration of how far we’ve come, but for me, it was a warning. We’re building a skyscraper on a foundation we no longer fully control.

**Have you noticed your own technical skills starting to "atrophy" as you rely more on Claude and ChatGPT, or are you finding yourself more productive than ever?**

**Let’s talk about the reality of the 2026 workflow in the comments.**

---