ChatGPT Images 2.0 Just Changed Everything. I Wasn't Ready For This.

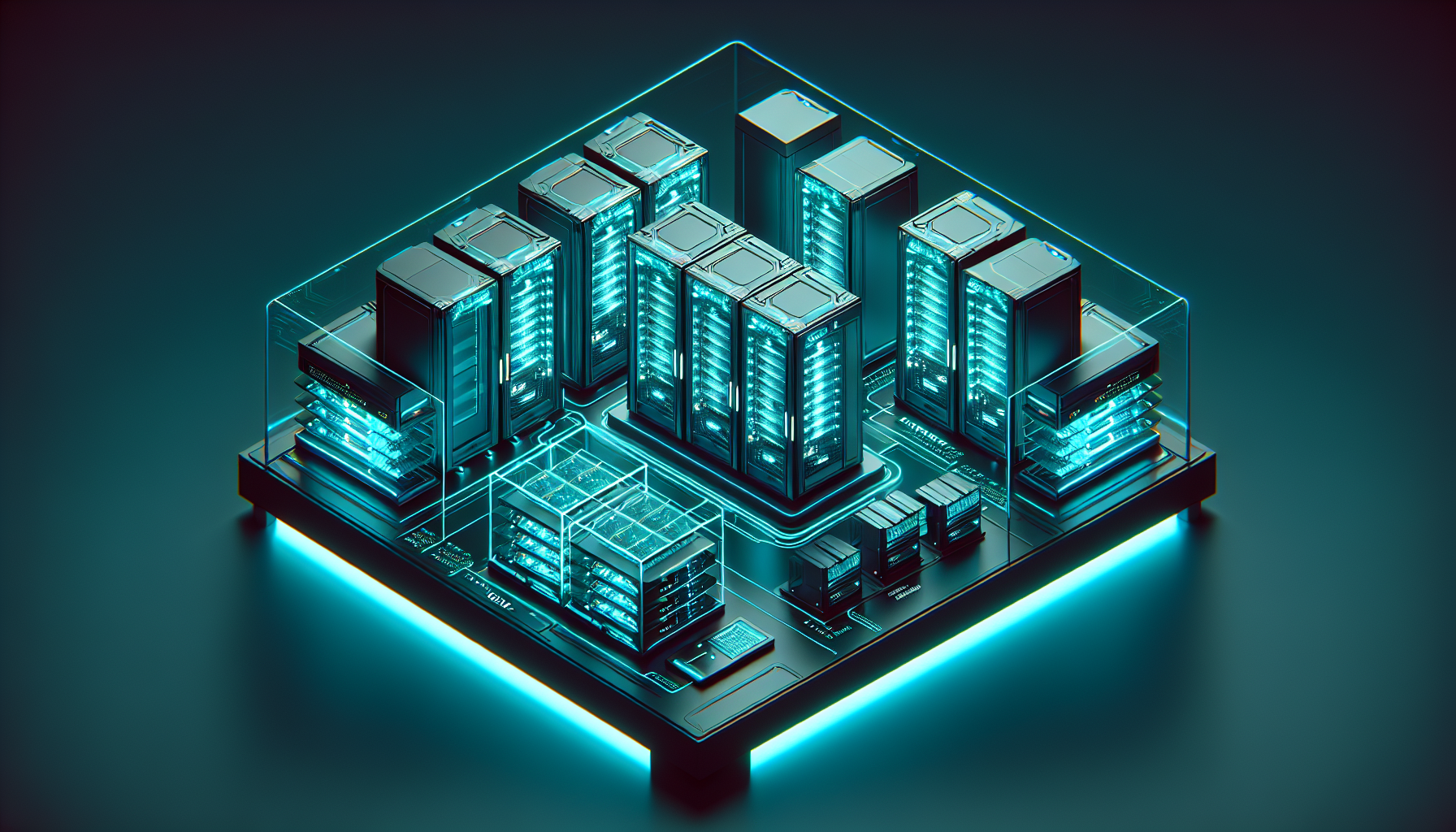

I spent three hours yesterday looking at a picture of a server rack. It sounds pathetic, I know.

But after six years in infrastructure engineering, I’ve developed a hyper-sensitive "BS detector" for AI-generated diagrams.

Usually, the wires go nowhere, the labels are gibberish, and the physics make me want to retire early.

**Only this time, there wasn't any bullshit.** I was looking at a high-fidelity system architecture diagram generated by ChatGPT Images 2.0, and for the first time since the AI boom started, I felt a genuine chill.

It didn't just look pretty; it was technically coherent.

The prompt was simple. I asked for a 3D isometric view of a multi-region Kubernetes cluster with specific VPC peering and a global load balancer.

In the old days (meaning six months ago), I’d get a colorful mess of spaghetti. **ChatGPT Images 2.0 handed me a presentation-ready mockup that I actually used in a stakeholder meeting an hour later.**

I wasn't ready for this. I thought image generation was for the marketing team and people making "Cyberpunk Tokyo" wallpapers. I was wrong.

We’ve officially crossed the line from "cool toy" to "functional infrastructure tool," and the implications for how we design and document systems are massive.

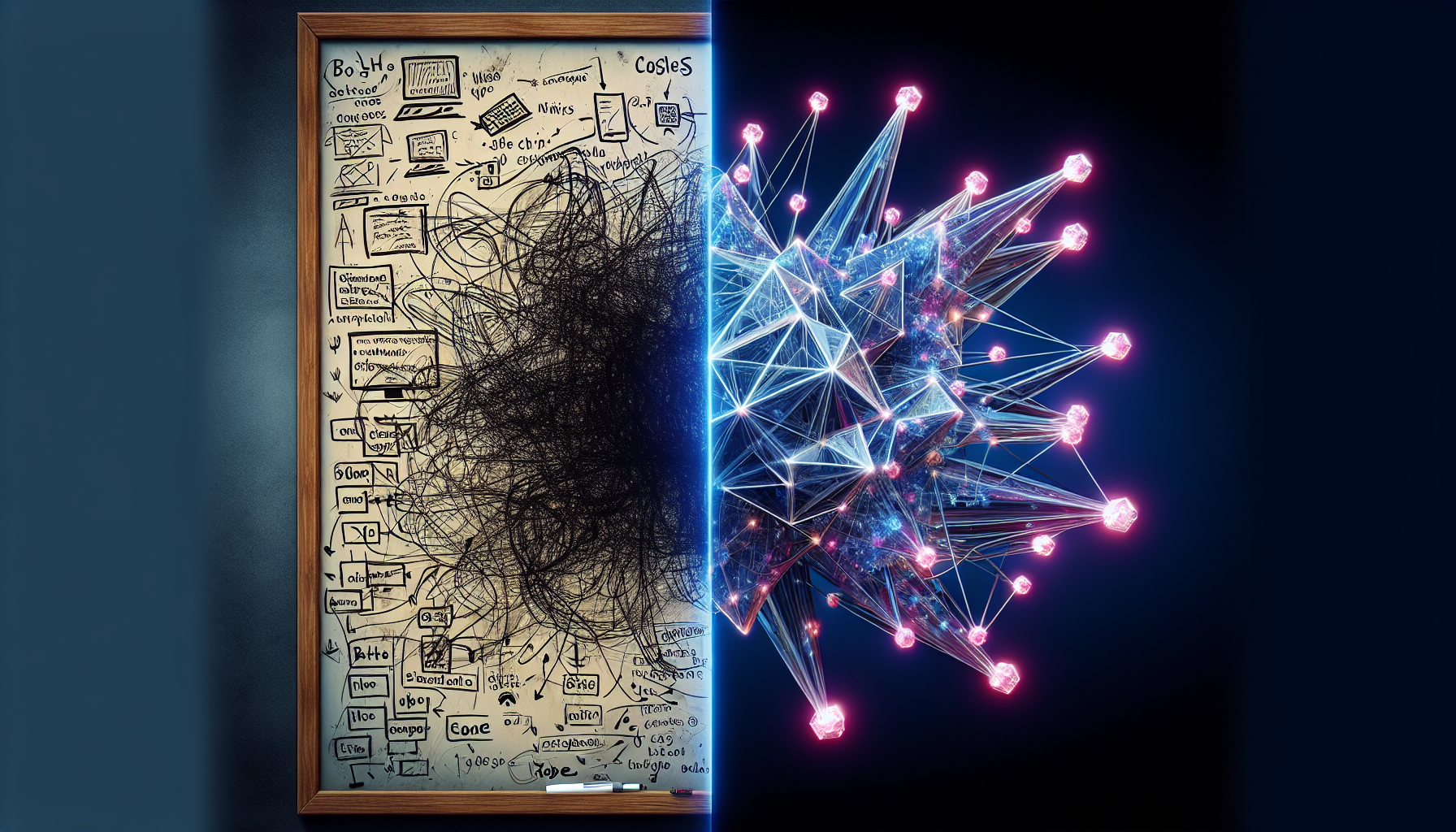

The Day My Design Workflow Died

I’ve always been a "Whiteboard and Lucidchart" purist. There’s a specific mental clarity that comes from manually dragging arrows between microservices and databases.

I argued that AI couldn't handle the nuances of network topology because it didn't "understand" the underlying logic.

Then came the April update.

**ChatGPT 5 integrated the new Images 2.0 engine, and the spatial reasoning gap simply evaporated.** I stopped using ChatGPT just for code snippets and started using it to visualize the race conditions I was seeing in our staging environment.

"Show me a sequence diagram of a distributed lock failure," I typed. It didn't just draw boxes.

It mapped out the timing, the TTL expiration, and the retry logic in a way that made the bug obvious to our junior devs.
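That class of bug is worth spelling out, because it is exactly what a good sequence diagram should expose. Below is a minimal simulation, assuming a lease-style lock whose ownership silently expires after a TTL. The `LeaseLock` and `Storage` classes, the timings, and the fencing-token check (a common mitigation, not necessarily the fix our team shipped) are all illustrative. Time is passed in explicitly so the race is deterministic.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class LeaseLock:
    """Hypothetical lease lock: a holder owns it only until its TTL expires."""
    ttl: float
    holder: Optional[str] = None
    expires_at: float = 0.0
    token: int = 0  # fencing token, incremented on every successful acquire

    def try_acquire(self, client: str, now: float) -> Optional[int]:
        # Free if nobody holds it or the current lease has already expired.
        if self.holder is None or now >= self.expires_at:
            self.holder = client
            self.expires_at = now + self.ttl
            self.token += 1
            return self.token
        return None


@dataclass
class Storage:
    """Hypothetical storage that rejects writes carrying a stale fencing token."""
    highest_token: int = 0
    writes: list = field(default_factory=list)

    def write(self, client: str, token: int) -> bool:
        if token < self.highest_token:
            return False  # stale holder: its lease expired and someone else acquired
        self.highest_token = token
        self.writes.append(client)
        return True


lock = LeaseLock(ttl=10.0)
store = Storage()

token_a = lock.try_acquire("A", now=0.0)   # A gets token 1; its lease ends at t=10
# A stalls (GC pause, slow disk) from t=0 to t=15 without renewing its lease.
token_b = lock.try_acquire("B", now=12.0)  # lease expired, so B's retry wins token 2
store.write("B", token_b)                  # accepted
accepted = store.write("A", token_a)       # A wakes at t=15 and writes: rejected
print(accepted, store.writes)              # False ['B']
```

The point the diagram made visible: client A never learns that its lease expired, so only a check on the storage side (here, the fencing token) stops the stale write.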

**I realized my "manual is better" stance was becoming technical debt.**

The shift isn't just about better resolution or fewer "extra fingers." It’s about the fact that the model now understands the relationship between objects in a 3D space.

When you ask for a "redundant power supply configuration," it knows that those components need to be physically and logically separated.

Physics, Text, and the End of "AI Artifacts"

The biggest joke in AI art used to be the text. We’ve all seen the "SDRVRE RACK" signage and the unreadable labels that looked like Cthulhu’s handwriting.

**ChatGPT Images 2.0 has solved the typography problem with surgical precision.**

In my testing over the last 48 hours, I’ve pushed it to generate complex UI mockups for our internal monitoring dashboard.

Not only is the text 100% legible, but it follows the design system I described in the prompt.

It applied the Deep Teal and Bright Cyan branding I specified without me having to upload a CSS file.

**The "In-Canvas" editing is the real game-changer here.** If the AI generates a diagram but the database is in the wrong subnet, I don't have to regenerate the whole image.

I just highlight the database and say, "Move this to the private subnet and add a NAT gateway."

The model understands the context of the change. It doesn’t just "Photoshop" a box; it reroutes the virtual wires and updates the labels to reflect the new architecture.

This is a level of iterative design that previously required a dedicated UX designer and three days of back-and-forth.

Why Infrastructure Engineers Should Care About Pixels

You might be wondering why an infrastructure guy is obsessed with an image generator.

Isn't this just "DALL-E with a fresh coat of paint?" **No, because we are moving toward a world where the diagram *is* the code.**

I’m already seeing early experiments where people take these Images 2.0 diagrams and pipe them into Claude 4.6 to generate Terraform configurations.

The visual-to-code pipeline is becoming the most efficient way to bootstrap a new environment.

If I can visually confirm a complex VPC peering arrangement in ten seconds, I can spot "architectural rot" before a single line of YAML is written.
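That visual check is easy to back with a few lines of code. A classic trap in peering diagrams is that AWS VPC peering is not transitive: if A peers with B and B peers with C, A still cannot reach C through B, even though the picture looks connected. A minimal sketch, assuming you list the peerings drawn in the diagram and the VPC pairs that must communicate; the `missing_peerings` helper and the VPC names are hypothetical.

```python
def missing_peerings(peerings, must_talk):
    """Return VPC pairs that need to communicate but have no direct peering.

    `peerings` and `must_talk` are lists of (vpc, vpc) tuples. Because peering is
    not transitive, a path through a third VPC does not count.
    """
    # frozenset makes ("a", "b") and ("b", "a") the same undirected link.
    direct = {frozenset(pair) for pair in peerings}
    return sorted(
        tuple(sorted(pair)) for pair in must_talk if frozenset(pair) not in direct
    )


# Hypothetical hub-and-spoke drawing: both regional VPCs peer with a shared VPC.
peerings = [("vpc-eu", "vpc-shared"), ("vpc-us", "vpc-shared")]
must_talk = [("vpc-eu", "vpc-us"), ("vpc-shared", "vpc-eu")]

print(missing_peerings(peerings, must_talk))  # [('vpc-eu', 'vpc-us')]
```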

**Visualizing complexity is the only way we survive the next wave of scale.** We are building systems that are too large for any human to hold in their head simultaneously.

We’ve reached a point where the "hallucinations" are being replaced by "inferences." The AI is making educated guesses based on best practices.

If I forget to add a backup vault in my prompt, Images 2.0 often adds it anyway, labeling it as a "Recommended Security Best Practice." It’s like having a senior architect looking over your shoulder.

The Reality Check: It’s Not a Magic Wand (Yet)

Before we all delete our Figma accounts, let’s talk about where this still fails. Because it does fail, and when it fails, it’s spectacular.

**ChatGPT Images 2.0 still struggles with "Non-Euclidean" requests.**

If you ask for a system that defies standard networking logic—like a circular dependency that somehow resolves—it tries too hard to make it look "right." It will draw a beautiful, convincing lie.

If you aren't an expert, you might not realize the architecture it just proposed is physically impossible to implement.

There’s also the "Style Drift" issue. When you’re doing iterative editing, the AI occasionally forgets the lighting or the perspective of the original shot.

You’ll have a 3D server rack on the left and a 2D flat-design database on the right. **It’s a reminder that we are still working with a stochastic system, not a deterministic CAD tool.**

Privacy is the other elephant in the room. As an infrastructure engineer, I’m wary of "leaking" our actual network topology into a model’s training set.

Even with Enterprise privacy settings, there’s a visceral hesitation to upload a screenshot of a production incident and ask for a visual root cause analysis.

How to Ship 10x Faster in 2026

If you want to stay relevant in this "Images 2.0" era, you need to stop thinking like a prompt engineer and start thinking like a Director of Photography.

The way you describe spatial relationships matters more than the keywords you use.

**Start using "Spatial Keywords" in your prompts.** Don't just ask for a diagram.

Ask for "Top-down orthographic projection," "isometric wireframe," or "exploded view." In my experience, these terms push the model toward precise spatial reasoning instead of its looser "artistic" defaults.

I’ve found that the best workflow is a "Model Sandwich." I use ChatGPT 5 to brainstorm the architecture and generate the initial Images 2.0 mockup.

Then, I take that image and feed it to Claude 4.5 to "fact-check" the topology. **The cross-model validation catches the beautiful lies before they reach production.**
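The second model’s real job is to turn the picture back into something checkable. Once it (or you) transcribes the arrows into a list of connections, the comparison itself is plain set arithmetic. A minimal sketch, assuming both the intended and the drawn topology are written as (source, target) pairs; the `diff_topology` helper and the service names are hypothetical.

```python
Edge = tuple[str, str]  # (source, target) as drawn by an arrow


def diff_topology(intended: list[Edge], drawn: list[Edge]) -> dict[str, list[Edge]]:
    """Compare the topology you meant with the one the diagram depicts."""
    intended_set, drawn_set = set(intended), set(drawn)
    return {
        # Connections you asked for that the image left out.
        "missing": sorted(intended_set - drawn_set),
        # Connections the image invented or wired to the wrong place.
        "unexpected": sorted(drawn_set - intended_set),
    }


intended = [("alb", "app-eu"), ("alb", "app-us"), ("app-eu", "db-eu"), ("app-us", "db-us")]
drawn = [("alb", "app-eu"), ("alb", "app-us"), ("app-eu", "db-eu"), ("app-us", "db-eu")]

print(diff_topology(intended, drawn))
# {'missing': [('app-us', 'db-us')], 'unexpected': [('app-us', 'db-eu')]}
```

An empty result on both sides doesn’t prove the architecture is sound, only that the picture matches what you meant to draw.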

We are entering an era where the "Draft" stage of any project takes effectively zero time. You can go from an idea in a Slack channel to a high-fidelity visual prototype in under two minutes.

That speed is a superpower, but only if you have the technical depth to know when the AI is just showing you what you want to see.

The End of the "Ugly" Tech Stack

For years, internal tools and backend documentation have been allowed to be ugly.

We prioritized function over form because "nobody sees the backend anyway." **ChatGPT Images 2.0 has removed the excuse for bad design in technical documentation.**

When it’s just as easy to generate a beautiful 3D map of your microservices as it is to write a bulleted list, the list becomes unacceptable.

We are going to see a "Visual Renaissance" in DevOps. Documentation that people actually want to read because it looks like a high-end technical manual from 2050.

I wasn't ready for a world where my most-used "dev tool" was an image generator. But looking at the clarity of my team’s latest architectural review, I’m not going back to the whiteboard.

**The barrier between "thinking" and "visualizing" has been dismantled.**

Have you noticed your team relying more on AI visuals lately, or are you still sticking to manual diagramming?

I’m curious if I’m the only one who feels like the "visual gap" just disappeared overnight. Let’s talk in the comments.

---