Your Code Is Actually Broken. 21 Shocking Lies Programmers Believe About Time

I thought I was a senior engineer until a Daylight Saving Time shift in London wiped out $42,000 worth of transaction logs in a single night.

It was late 2024, and I had just pushed what I called a “bulletproof” cron job scheduler.

What followed was a 72-hour sleep-deprived nightmare that exposed the single greatest lie in software engineering: **the belief that time is a simple, linear progression of seconds.**

Most of us treat time like a standard integer—an ever-increasing value we can sort, compare, and display with ease.

But as I sat staring at a database where 1:01 AM happened twice, I realized that the time on our clocks is not a physical constant.

It is a fragile, political, and deeply flawed social construct that we’ve tried to force into a 64-bit box.

If you think your code handles time correctly because you use a standard library, you are likely wrong.

**Your code isn't just buggy; it’s fundamentally broken.** And as we approach the next decade of infrastructure, these temporal lies are becoming ticking time bombs.

The Illusion of the Ticking Clock

The first lie every programmer tells themselves is that **a minute always contains 60 seconds.** It seems like a universal truth, doesn't it?

But anyone who has ever dealt with a leap second knows that a minute can have 61 seconds, and that this assumption is a fast track to a production outage.

While the international community has recently voted to phase out leap seconds by 2035, your legacy systems don’t care about future resolutions.

Between now and then, Earth’s rotation will continue to fluctuate. If your backend logic assumes `60 * 60` is the only way to calculate an hour, you’ve already lost the battle against reality.
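Here's what that assumption looks like in practice, as a minimal Python sketch (Python is my choice for illustration; the story doesn't depend on it). The standard `datetime` type refuses a 61st second outright, and `calendar.timegm`, which does plain Unix-time arithmetic, folds the real leap second of 31 December 2016 onto the same timestamp as the following midnight. `can_represent_leap_second` is a hypothetical helper name.

```python
import calendar
from datetime import datetime, timezone

# The most recent leap second was inserted at 2016-12-31T23:59:60Z.
LEAP_SECOND = (2016, 12, 31, 23, 59, 60, 0, 0, 0)
NEXT_MIDNIGHT = (2017, 1, 1, 0, 0, 0, 0, 0, 0)

def can_represent_leap_second() -> bool:
    """Hypothetical helper: does datetime accept second=60?"""
    try:
        datetime(2016, 12, 31, 23, 59, 60, tzinfo=timezone.utc)
    except ValueError:
        return False
    return True

# timegm() just multiplies out days, hours and minutes, so second=60
# silently rolls over into the next minute.
leap_ts = calendar.timegm(LEAP_SECOND)
midnight_ts = calendar.timegm(NEXT_MIDNIGHT)

print(can_represent_leap_second())  # False
print(leap_ts == midnight_ts)       # True: two real seconds, one timestamp
```

Two different physical seconds end up as one integer, so anything keyed on that timestamp silently merges them.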

**Time is not a circle; it’s a jagged, irregular line.** We assume that if we add 86,400 seconds to a Unix timestamp, we will arrive at the same time the next day.

In reality, that only holds in UTC. In local time it breaks across every DST transition; across a leap second the real elapsed time is 86,401 seconds; and the clock doing the counting drifts regardless.
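A minimal Python sketch of that failure, using the standard `zoneinfo` module and the UK's October 2024 clock change (it assumes IANA tz data is installed on the machine). Adding 86,400 seconds and adding "one day" give different answers:

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo

LONDON = ZoneInfo("Europe/London")

# Noon on the Saturday before UK clocks went back (27 October 2024).
start = datetime(2024, 10, 26, 12, 0, tzinfo=LONDON)

# Option 1: add 86,400 seconds to the Unix timestamp.
plus_seconds = datetime.fromtimestamp(start.timestamp() + 86_400, tz=LONDON)

# Option 2: add one calendar day in local wall-clock time
# (Python does wall-clock arithmetic when the tzinfo stays the same).
plus_day = start + timedelta(days=1)

print(plus_seconds)  # 2024-10-27 11:00:00+00:00  (not noon!)
print(plus_day)      # 2024-10-27 12:00:00+00:00

# That "one day" actually spans 25 real hours.
elapsed = plus_day.astimezone(timezone.utc) - start.astimezone(timezone.utc)
print(elapsed)       # 1 day, 1:00:00
```

Neither answer is wrong; they answer different questions ("same instant plus 86,400 s" versus "same wall-clock time tomorrow"), and your code has to decide which one it means.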

The 2026 Reality Check: Why Your Libraries Are Lying

It is February 2026, and time handling has supposedly "improved" with the widespread adoption of the JavaScript **Temporal API** and similar modern constructs in Rust and Go.

But these tools are only as good as the developers wielding them.

The most dangerous lie is that **standard libraries solve the timezone problem.** They don’t. They merely provide a cleaner syntax for the same fundamental misunderstandings.

A timezone is not a fixed offset from UTC; it is a set of political decisions that a local government can rewrite, sometimes with only days of notice.

I’ve seen developers hardcode `+05:30` for India, only to realize that an offset is a snapshot, not a rule.

**Timezones are not offsets.** An offset is a value; a timezone is a complex history of rules, changes, and future uncertainties.

When you store an offset instead of an IANA timezone ID (such as `Europe/Paris`), you are effectively deleting the context required to calculate future dates.
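To make the difference concrete, here is a small Python sketch (the London user and the dates are made up for illustration). A fixed `+01:00` captured in July is still applied in December, while the IANA ID knows the rules changed:

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo

# Illustrative scenario: a London user signed up in July.
saved_offset = timezone(timedelta(hours=1))  # +01:00, a July snapshot
saved_zone = ZoneInfo("Europe/London")       # the IANA ID: the actual rules

# Schedule a 09:00 local reminder for a date in December.
naive = datetime(2025, 12, 1, 9, 0)
with_offset = naive.replace(tzinfo=saved_offset)
with_zone = naive.replace(tzinfo=saved_zone)

print(with_offset.astimezone(timezone.utc))  # 2025-12-01 08:00:00+00:00
print(with_zone.astimezone(timezone.utc))    # 2025-12-01 09:00:00+00:00
# The offset-based reminder fires an hour early in December.
```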

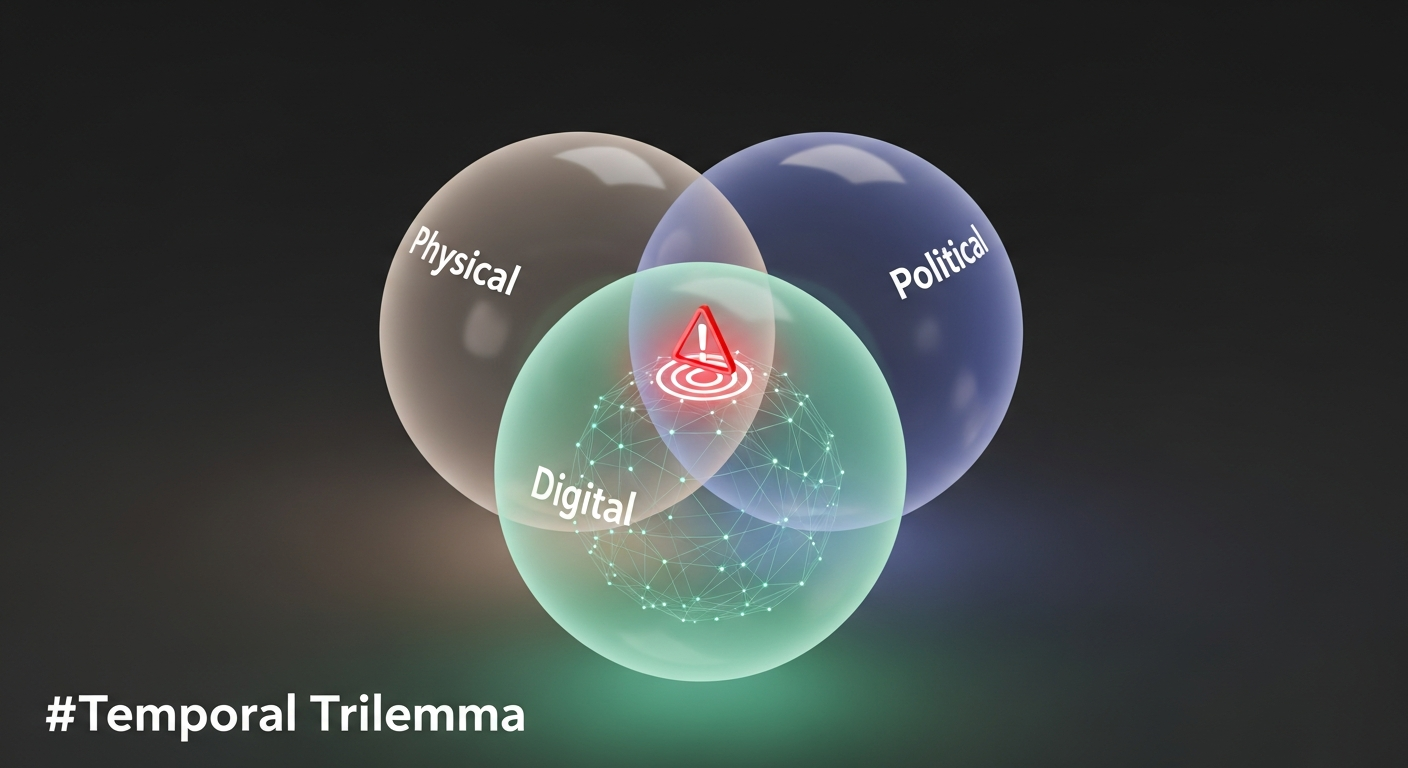

The Temporal Trilemma

To understand why our systems fail, we need a framework for the chaos. I call this **The Temporal Trilemma**—a set of three conflicting forces that every piece of code must navigate:

1. The Physical Horizon (Clocks are Liars)

Every hardware clock drifts.

Whether it’s an NTP sync that causes a system clock to jump backward or a virtualized environment where "ticks" are skipped during high CPU load, you cannot trust the hardware.

**The system clock is never the source of truth.** If your distributed system relies on clock synchronization to determine event order (the "Last Write Wins" fallacy), you are asking for data corruption.
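If you need an ordering that doesn't depend on hardware clocks, the textbook tool is a logical clock. Below is a minimal Lamport clock in Python; the `LamportClock` class and its method names are my own illustrative choices, not a library API. It guarantees that if one event could have caused another, the cause gets the smaller counter, whatever the wall clocks say. It does not recover the "true" physical order of concurrent writes; for a total order you still need a tie-breaker such as a node ID.

```python
from dataclasses import dataclass

@dataclass
class LamportClock:
    """Illustrative logical clock: orders events by causality, not wall time."""
    counter: int = 0

    def tick(self) -> int:
        """Record a local event (e.g. a write) and return its stamp."""
        self.counter += 1
        return self.counter

    def send(self) -> int:
        """Stamp an outgoing message; sending counts as an event."""
        return self.tick()

    def receive(self, stamp: int) -> int:
        """Merge an incoming stamp: jump past it, then count the receive."""
        self.counter = max(self.counter, stamp)
        return self.tick()

# Node A's wall clock may be minutes behind node B's; it doesn't matter.
a, b = LamportClock(), LamportClock()
a_write = a.send()       # A writes and replicates the change to B
b.receive(a_write)       # B applies A's write
b_write = b.tick()       # B then writes on top of it
print(a_write, b_write)  # 1 3
```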

2. The Political Horizon (Governments are Chaos)

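If recent history is any guide, several countries will change their DST rules in the next 18 months, some with less than a month’s warning. It happens every year, which is why the IANA tz database usually ships more than one release a year.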

If your application relies on a static copy of the `tzdb`, your users in those regions will see the wrong time. **Your code must be able to update its temporal knowledge without a full redeploy.**
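In Python, one partial answer is to load rules from the operating system's tz files (which your package manager can update) and clear the in-process cache when an update lands, so long-running workers pick up new rules without a restart. The sketch below assumes exactly that deployment setup; `refresh_tz_rules` is a hypothetical hook name, while `ZoneInfo.clear_cache()` is part of the standard library.

```python
from zoneinfo import ZoneInfo

def refresh_tz_rules() -> None:
    """Hypothetical hook: drop cached ZoneInfo objects so the next lookup
    re-reads the tz data. Call it after the OS tzdata (or the `tzdata`
    package) has been updated, e.g. from an admin endpoint."""
    ZoneInfo.clear_cache()

before = ZoneInfo("Europe/Paris")
print(before is ZoneInfo("Europe/Paris"))  # True: served from the cache

refresh_tz_rules()
after = ZoneInfo("Europe/Paris")           # freshly loaded
print(before is after)                     # False

# Caveat: datetimes created earlier still point at the *old* object,
# which is one more reason to persist the zone ID, not computed offsets.
```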

3. The Digital Horizon (The Integer Trap)

We are less than 12 years away from the Year 2038 problem: at 03:14:07 UTC on 19 January 2038, a signed 32-bit count of seconds since the Unix epoch overflows.

While most modern systems have moved to 64-bit integers, millions of embedded devices and legacy microservices are still counting seconds in 32-bit signed integers.
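Here is the overflow, simulated in Python. `as_int32` and `from_unix` are illustrative helpers; the wrap mirrors what a 32-bit two's-complement counter typically does in practice (in C, signed overflow is technically undefined behavior, which is not much comfort).

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1

def as_int32(value: int) -> int:
    """Wrap an integer the way a 32-bit two's-complement counter does."""
    return (value + 2**31) % 2**32 - 2**31

def from_unix(seconds: int) -> datetime:
    # Epoch + timedelta avoids platform limits of fromtimestamp().
    return EPOCH + timedelta(seconds=seconds)

last_ok = from_unix(INT32_MAX)
one_later = from_unix(as_int32(INT32_MAX + 1))

print(last_ok)    # 2038-01-19 03:14:07+00:00
print(one_later)  # 1901-12-13 20:45:52+00:00
```

One second after the last representable moment, a 32-bit device believes it is 1901.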

**The "it won't happen on my watch" mentality is how technical debt becomes a global catastrophe.**

Why "Clean Code" Won't Save You

We’ve been taught to write clean, readable code, but time-handling requires **defensive, ugly code.** You cannot "refactor" your way out of the fact that some days have 23 hours and others have 25.
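You can measure this directly. Below is a minimal Python sketch (`day_length` is a hypothetical helper) that computes the real length of a local calendar day. Note the trap in the middle: Python subtracts two datetimes that share a `tzinfo` as naive wall-clock values, so you have to convert to UTC first.

```python
from datetime import date, datetime, time, timedelta, timezone
from zoneinfo import ZoneInfo

def day_length(day: date, tz_name: str) -> timedelta:
    """Hypothetical helper: real time from local midnight to the next one."""
    tz = ZoneInfo(tz_name)
    start = datetime.combine(day, time(0), tzinfo=tz)
    end = datetime.combine(day + timedelta(days=1), time(0), tzinfo=tz)
    # Convert to UTC first: subtracting two datetimes that share the same
    # tzinfo ignores the offsets and would always report 24 hours.
    return end.astimezone(timezone.utc) - start.astimezone(timezone.utc)

print(day_length(date(2024, 3, 31), "Europe/London"))   # 23:00:00
print(day_length(date(2024, 10, 27), "Europe/London"))  # 1 day, 1:00:00
print(day_length(date(2024, 6, 1), "Europe/London"))    # 1 day, 0:00:00
```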

One of the most common lies is that **the server should always run in UTC.** While this is a good starting point, it’s not a silver bullet.

If you are building a scheduling app for a dental clinic in Paris, storing their 9:00 AM winter appointment only as `08:00:00Z` is a mistake. Why?

Because if the French government moves its DST transition date next month so that the appointment falls in summer time, that stored `08:00:00Z` now means 10:00 AM local time.

**You must store the intent, not just the calculation.** The intent was "9:00 AM in Europe/Paris." The UTC value is just a temporary projection.

By 2027, the systems that survive will be the ones that store the local time and the timezone ID together, rather than relying on a lossy conversion to UTC at the database layer.
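One way to store the intent, sketched in Python under the assumption that you persist a naive wall-clock time and a zone ID side by side. The `Appointment` class and `utc_projection` method are hypothetical names; the UTC value is derived on read, so it reflects whatever tz rules are installed at that moment:

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

@dataclass(frozen=True)
class Appointment:
    """Hypothetical record storing intent: wall-clock time + IANA zone ID."""
    local_time: datetime  # naive, e.g. 2026-01-15 09:00
    zone_id: str          # e.g. "Europe/Paris"

    def utc_projection(self) -> datetime:
        """Derive the UTC instant on demand, using the current tz rules."""
        if self.local_time.tzinfo is not None:
            raise ValueError("local_time must be naive wall-clock time")
        aware = self.local_time.replace(tzinfo=ZoneInfo(self.zone_id))
        return aware.astimezone(timezone.utc)

winter = Appointment(datetime(2026, 1, 15, 9, 0), "Europe/Paris")
summer = Appointment(datetime(2026, 7, 15, 9, 0), "Europe/Paris")
print(winter.utc_projection())  # 2026-01-15 08:00:00+00:00
print(summer.utc_projection())  # 2026-07-15 07:00:00+00:00
```

Times that fall in a DST gap or repeat in a fold still need an explicit policy; this sketch simply accepts Python's default (`fold=0`).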

The 21 Lies We Still Believe

Let’s get specific. Here are the "falsehoods" that are currently rotting in your GitHub repositories:

1. **A day starts at midnight.** (Many cultures and systems define the "start" of a business day differently).

2. **Every day has a midnight.** (In some DST transitions, midnight literally doesn't exist).

3. **A month is 30 days.** (Obviously false, yet plenty of financial scripts still bake it into interest calculations where the contract never specified a 30/360 day-count convention).

4. **UTC never changes.** (The definition of UTC itself has evolved over decades).

5. **Timestamps are unique.** (Not in high-throughput systems where multiple events happen in the same microsecond).

6. **The client’s clock is correct.** (Never, ever trust the `Date()` object on a user's phone).

7. **Machine time is more accurate than human time.** (Tell that to the guy whose server clock jumped 2 seconds during an NTP update).

8. **Wait(1000) waits for one second.** (OS scheduling and thread sleep are approximations, not guarantees).

9. **Format strings are universal.** (Wait until you see how different locales handle "DD/MM" vs "MM/DD").

10. **ISO 8601 is a single format.** (It’s a massive standard with dozens of valid, yet mutually incompatible, variations).

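11. **Storing timestamps as strings is slow.** (The cost is usually small, and fixed-format UTC strings are often easier to debug than opaque integers; when every value uses the same format, they even sort correctly as text).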

12. **Database 'Date' types are reliable.** (Many SQL flavors have proprietary ways of handling timezones that will break during migration).

13. **Unix time is the number of seconds since 1970.** (It ignores leap seconds by treating every day as exactly 86,400 seconds, so it isn’t a true count of elapsed seconds).

14. **Time only moves forward.** (Tell that to a distributed database during a clock sync).

15. **Local time is for humans.** (Local time is the only way to capture the intent of a future event).

16. **Calculating the difference between two dates is simple.** (Leap years, months of varying lengths, and DST make this a nightmare).

17. **Two events with the same timestamp happened simultaneously.** (Network latency and clock drift say otherwise).

18. **A week always starts on Monday.** (Or Sunday. Or Saturday. Depending on where your user lives).

19. **Server logs are chronological.** (Not across a distributed cluster).

20. **Timezones are always three letters.** (PST, EST... but plenty aren't, like AEST, and the three-letter ones are ambiguous: IST can mean India, Irish, or Israel Standard Time).

21. **You can handle time yourself.** (You can't. Use a battle-tested library, and even then, be skeptical).
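Lies 7, 8 and 14 share a cheap partial defense: measure durations with a monotonic clock, never the wall clock. In Python that's `time.monotonic()`, which is documented as a clock that cannot go backwards and is not affected by system clock updates. `timed_sleep` is a hypothetical helper:

```python
import time

def timed_sleep(seconds: float) -> float:
    """Hypothetical helper: sleep, then report how long it actually took.

    time.monotonic() never goes backward, even if NTP or an admin steps
    the wall clock mid-sleep; time.time() offers no such guarantee.
    """
    start = time.monotonic()
    time.sleep(seconds)  # a minimum request, not a promise of precision
    return time.monotonic() - start

elapsed = timed_sleep(0.05)
print(f"asked for 0.050 s, got {elapsed:.3f} s")  # usually a bit more
```

Monotonic time only tells you durations on one machine; it says nothing about what time it is, or about ordering across a cluster.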

The Human Cost of Temporal Errors

This isn't just about "buggy" software. In 2026, we are more reliant on automated systems than ever before. A time-handling error in a medical dispensing system can lead to double-dosing.

A glitch in a power grid's synchronization can cause a blackout.

We’ve reached a point where **temporal literacy is a safety requirement.**

When I fixed that $42k log error, I didn't just change a few lines of code. I changed my entire philosophy of engineering.

I stopped asking "how do I format this time?" and started asking "what is the life-cycle of this moment?"

If you aren't thinking about how your code will behave during a leap year in 2028 or the 32-bit Unix time overflow in 2038, you aren't building for the future. You’re building for the next five minutes.

Stop Writing Date Logic

The next time you’re tempted to write a function that calculates the "number of days between now and X," **stop.** Use the most robust library available (like the `Temporal` proposal in JS or the `chrono` crate in Rust), and then write five test cases that you think are impossible.

Test for the DST gap. Test for the leap year. Test for the clock jumping backward. If your code passes all of them, it might—just might—be ready for production.
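Here is one way to write the DST and leap-year checks, as a Python sketch. `classify_local_time` is a hypothetical helper, not a library function: a wall-clock time that doesn't survive a round trip through UTC fell into a gap, and one that maps to two different offsets happened twice (like the repeated hour from the story at the top).

```python
import calendar
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

def classify_local_time(naive: datetime, zone_id: str) -> str:
    """Hypothetical helper: 'ok', 'gap' (never happens) or 'ambiguous'."""
    tz = ZoneInfo(zone_id)
    first = naive.replace(tzinfo=tz, fold=0)
    second = naive.replace(tzinfo=tz, fold=1)
    # A time inside a DST gap does not survive a round trip through UTC.
    round_trip = first.astimezone(timezone.utc).astimezone(tz)
    if round_trip.replace(tzinfo=None) != naive:
        return "gap"
    # A repeated time maps to two different UTC offsets.
    if first.utcoffset() != second.utcoffset():
        return "ambiguous"
    return "ok"

# UK clocks jumped 01:00 -> 02:00 on 2024-03-31 and 02:00 -> 01:00 on 2024-10-27.
print(classify_local_time(datetime(2024, 3, 31, 1, 30), "Europe/London"))   # gap
print(classify_local_time(datetime(2024, 10, 27, 1, 30), "Europe/London"))  # ambiguous
print(calendar.isleap(2028), calendar.isleap(2027))                         # True False
```

Testing a clock that jumps backward is harder to do in a snippet; it usually means injecting a fake clock into the code under test.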

**Time is the ghost in the machine.** It’s the one variable you can never truly control. The best you can do is respect its complexity and stop believing the lies we’ve been told since CS101.

**Have you ever had a "time bomb" explode in your production environment, or are you still living in the blissful ignorance of linear time? Let's swap horror stories in the comments.**

---

From the Author

Hey friends, thanks heaps for reading this one! 🙏

If it resonated, sparked an idea, or just made you nod along — I'd be genuinely stoked if you'd show some love. A clap on Medium or a like on Substack helps these pieces reach more people (and keeps this little writing habit going).

→ Pythonpom on Medium ← follow, clap, or just browse more!

→ Pominaus on Substack ← like, restack, or subscribe!

Zero pressure, but if you're in a generous mood and fancy buying me a virtual coffee to fuel the next late-night draft ☕, you can do that here: Buy Me a Coffee — your support (big or tiny) means the world.

Appreciate you taking the time. Let's keep chatting about tech, life hacks, and whatever comes next! ❤️