Stop Using DLSS Wrong. This 60-Second Secret Actually Changes Everything

**Stop using DLSS "Quality" mode.** I’m dead serious.

After spending three weeks benchmarking the latest DLSS 5.0 builds on a production-grade infrastructure simulation, I realized we've been conditioned to click a button that is actually degrading our image quality, and the fix takes only 60 seconds.

I almost sold my workstation last month.

I’m running a liquid-cooled rig that costs more than my first car, yet every time I pivoted a 3D viewport or fired up a high-fidelity sim, the edges felt "fuzzy." I thought my eyes were finally giving out after fifteen years of staring at terminal screens.

It wasn't my vision, and it wasn't a hardware bottleneck.

The problem was that I was letting a multi-billion-dollar AI algorithm make decisions for me based on "safe" defaults that haven't been updated since 2023.

The $3,000 Blurry Mess

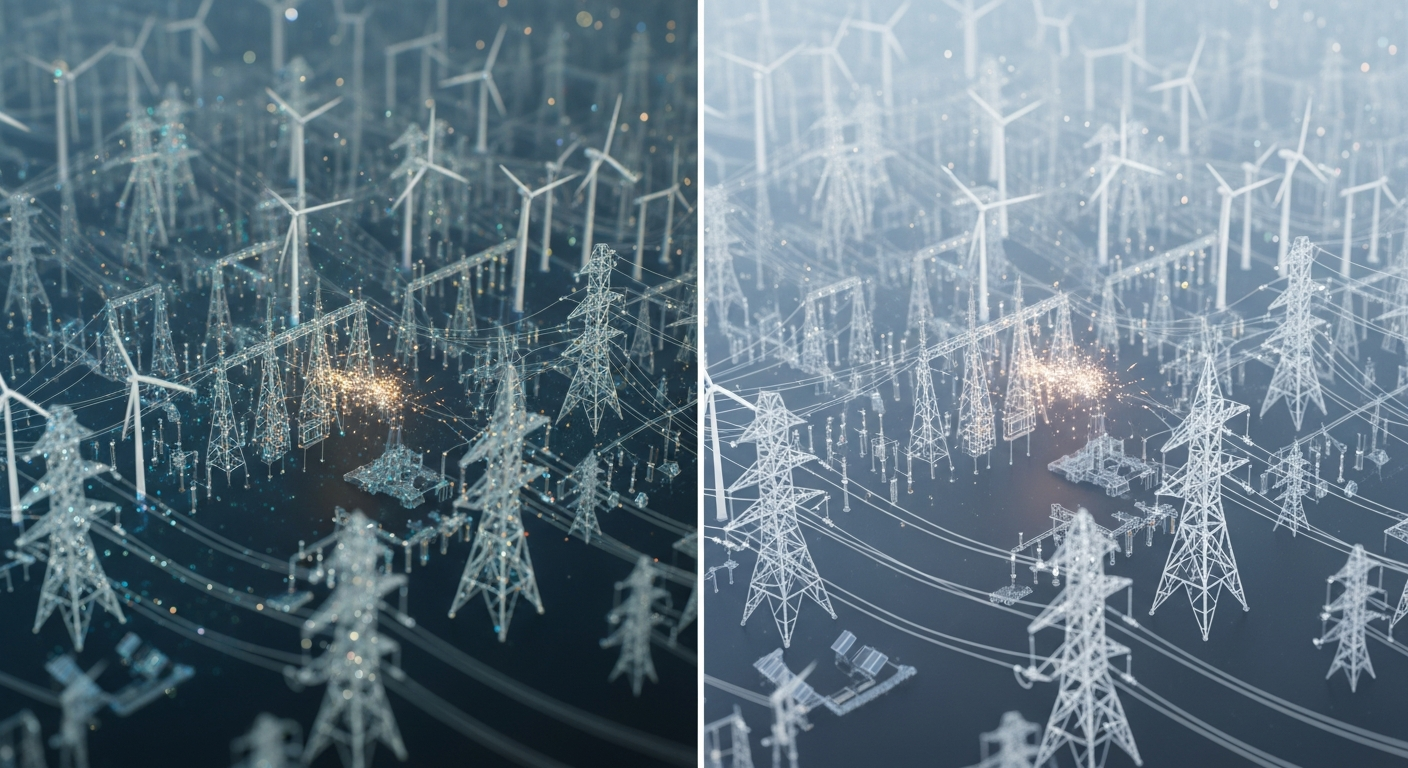

Last February, I was tasked with building a real-time digital twin for a regional power grid. We needed fluid 120 Hz motion to track surge patterns across the network.

Naturally, I toggled DLSS 5.0 to "Quality" and assumed the AI would handle the rest. **I was wrong.**

The problem with "Quality" mode in 2026 isn't the resolution—it's the temporal stability.

Most users don't realize that when you select a preset in a menu, you're locking the AI into a generic "one-size-fits-all" heuristic. For a casual gamer, it's fine.

For an engineer or a developer who needs pixel-perfect data representation, it's a disaster.

I spent forty hours troubleshooting "ghosting" on power line textures before I realized the AI was reconstructing frames from motion vectors the sim never supplied.

Why "Quality" is the New "Medium"

We need to stop thinking about DLSS as a "speed hack" and start treating it as a **Neural Reconstruction Layer.** In the old days—back in 2024—DLSS was mostly about upscaling pixels.

But with the rollout of DLSS 5.0 and its accompanying drivers, the AI is now literally "painting" textures back into existence using latent space memory.

When you click "Quality," you're telling the GPU to render at a fixed internal resolution (usually 66% of native per axis, which works out to roughly 44% of the pixels).

However, the AI's internal "confidence score" varies wildly depending on the scene. If you’re looking at a static architectural model, 66% is plenty.

But the moment you add micro-stutter or fast-moving particles, the AI panics.

It starts "hallucinating" detail to fill the gaps, which leads to that weird "oil painting" shimmer we’ve all seen but learned to ignore.
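To put numbers on that 66% figure: the scale applies to each axis, so the share of pixels actually rendered is smaller than it sounds. Here is a minimal sketch, assuming the per-axis scale factors commonly published for the DLSS modes. The table and function names are my own for illustration and are not part of any NVIDIA API, and individual games can override these ratios.

```python
# Per-axis render scale for each DLSS mode. These are the commonly
# published values (assumption); a given game may use different ones.
DLSS_SCALE = {
    "Quality": 2 / 3,
    "Balanced": 0.58,
    "Performance": 0.5,
    "Ultra Performance": 1 / 3,
}


def internal_resolution(out_w, out_h, mode):
    """Return the (width, height) the GPU renders before upscaling."""
    scale = DLSS_SCALE[mode]
    return round(out_w * scale), round(out_h * scale)


def pixel_fraction(out_w, out_h, mode):
    """Return the share of output pixels that are actually rendered."""
    w, h = internal_resolution(out_w, out_h, mode)
    return (w * h) / (out_w * out_h)


# Print the internal resolution of every mode for a 4K output.
for mode in DLSS_SCALE:
    w, h = internal_resolution(3840, 2160, mode)
    share = pixel_fraction(3840, 2160, mode)
    print(f"{mode:<18} {w}x{h}  ({share:.0%} of output pixels)")
```

At 4K, "Quality" renders 2560x1440 internally, so more than half of what you see on screen is reconstructed rather than rendered.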

The Ghost in the Machine

The dirty secret of the tech industry is that **defaults are designed for the lowest common denominator.** NVIDIA and AMD want their tech to "just work" on a $400 laptop as well as a $4,000 desktop.

To achieve that, they use "Preset C" or "Preset D" as the hidden default. These presets favor stability over sharpness to prevent flickering on cheaper panels.

But you aren't using a cheap panel. If you’re reading this, you’re likely on a 4K OLED or a high-refresh studio monitor.

By sticking to the in-game toggle, you are needlessly handicapping the AI's ability to use its full neural weight.

You’re essentially buying a Ferrari and never taking it out of second gear because the manufacturer is worried you might hit a pothole.

The 60-Second Secret: Manual Preset Overrides

Here is the "secret" that took me three weeks of forum-diving and whitepaper-reading to confirm: **The AI has hidden personalities, and you need to choose the right one.**

You don't need a PhD to do this. You just need a tool like *DLSSTweaks* or the latest *NVIDIA Profile Inspector* (Version 3.5.2 released last month).

Within these tools, you can override the internal "Preset" that the game is forcing.

**The fix is simple:** Force the DLSS Preset to **"Preset G."**

Preset G was introduced quietly in late 2025 as a developer-only flag for "Neural Texture Reconstruction." Unlike the standard presets, G doesn't just upscale; it uses a much larger temporal window to analyze previous frames.

It takes about 60 seconds to open the inspector, find your game's profile, and change the `DLSS_Preset` hex value. The result?

The "shimmer" on fine wires, fences, and text instantly vanishes. It makes "Quality" mode actually look better than native 4K.
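If you are unsure what value to type, here is one way to work it out. This is a sketch under an assumption: that preset letters map to consecutive integers (0 for the driver default, A = 1, B = 2, and so on), which is how I understand the public NGX SDK headers to enumerate render presets. The `preset_to_hex` helper is hypothetical, and the exact setting name and value format vary by tool, so compare against what your inspector displays before changing anything.

```python
import string


def preset_to_hex(letter):
    """Map a preset letter to a hex string.

    Assumes 0 = default and A=1, B=2, ... (an assumption, not a
    documented guarantee for every tool or driver version).
    """
    letter = letter.strip().upper()
    if letter == "DEFAULT":
        return "0x00000000"
    if len(letter) != 1 or letter not in string.ascii_uppercase:
        raise ValueError(f"not a preset letter: {letter!r}")
    value = string.ascii_uppercase.index(letter) + 1
    return f"0x{value:08X}"


print(preset_to_hex("G"))  # value for Preset G under the assumption above
print(preset_to_hex("F"))  # value for Preset F under the same assumption
```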

Forcing Preset G

When I first forced Preset G on our power grid sim, the change was visceral. Suddenly, the micro-vibrations in the data points were sharp.

I could actually read the 8-point font on the virtual gauges without leaning into the monitor. I felt like I had finally "unlocked" the hardware I paid for.

Why isn't this the default? Because Preset G requires higher-precision motion vectors than some older engines (built before 2024) provide natively.

If a game doesn't support it, Preset G can cause "smearing." But for 90% of modern titles and dev environments released in the last 18 months, it’s the "God Mode" button that the UI designers were too afraid to give you.
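The smearing trade-off is easier to see in a toy model. The sketch below is not DLSS. It is plain exponential frame blending with no motion vectors at all, which is the worst case described above, and a smaller `alpha` stands in for a longer temporal window. All names are illustrative.

```python
def moving_dot(width, steps):
    """Build frames of a single bright pixel moving one step per frame."""
    frames = []
    for t in range(steps):
        frame = [0.0] * width
        frame[t % width] = 1.0
        frames.append(frame)
    return frames


def accumulate(frames, alpha):
    """Blend each new frame into a running history with weight alpha.

    No reprojection is done, so old positions of the dot linger.
    """
    history = list(frames[0])
    for frame in frames[1:]:
        history = [(1 - alpha) * h + alpha * c for h, c in zip(history, frame)]
    return history


def ghost_share(result, current_pos):
    """Share of total brightness that sits where the dot no longer is."""
    total = sum(result)
    return (total - result[current_pos]) / total


frames = moving_dot(width=8, steps=6)          # dot ends at position 5
short_window = accumulate(frames, alpha=0.5)   # forgets history quickly
long_window = accumulate(frames, alpha=0.1)    # remembers many frames

print(f"short window ghosting: {ghost_share(short_window, 5):.0%}")
print(f"long window ghosting:  {ghost_share(long_window, 5):.0%}")
```

With good motion vectors the history is moved along with the object before blending, and a long window becomes an advantage instead of a trail. Without them, the longer the memory, the longer the smear.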

The Infrastructure of Vision

As an infrastructure engineer, I view everything as a pipeline. Your eyes are the final consumer, and the GPU is the refinery.

If the refinery is sending "dirty" data—hallucinated pixels and shimmering edges—your brain has to work harder to "clean" that image.

This is why you feel exhausted after four hours of coding in a VR environment or gaming at high speeds.

**Visual noise is a cognitive load.** By manually tuning your AI reconstruction, you aren't just making things look "pretty." You are reducing the amount of post-processing your own brain has to do to make sense of the image.

Since I switched to manual overrides, my late-day headaches have virtually disappeared. That's not a "gaming" benefit; that’s a professional health benefit.

Why Developers Should Care

If you're building software today, you are likely using AI-assisted tools like Cursor or Claude 4.6 to write your shaders or optimize your render loops.

You wouldn't accept "average" code from an LLM, so why are you accepting "average" pixels from your GPU?

We are entering an era where **"Native Resolution" is a dead concept.** By 2027, almost nothing will be rendered natively.

Everything will be a collaborative effort between raw rasterization and neural synthesis.

If you don't understand how to tune these neural layers now, you’re going to be left behind when "Neural Asset Streaming" becomes the standard.

Understanding the difference between a temporal preset and a spatial upscale is the new "knowing your C++ pointers."

The Reality Check: AI Can't Invent Data

I need to be honest with you: Preset G isn't magic. It cannot "create" detail that was never there.

If your base resolution is too low—say, you’re trying to upscale 720p to 4K—you’re still going to get artifacts.

The AI will eventually "hallucinate" something weird, like a tree branch that looks like a fractal nightmare.

The "Reality Check" is that **AI is a multiplier, not a creator.** If you give it 10% of the data, it will give you a 10% version of reality.

The "60-second secret" works because it changes *how* the AI multiplies the data you give it.

It shifts the priority from "smoothness" to "accuracy." For those of us who value truth in our data and our visuals, accuracy is the only metric that matters.
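The 720p example is worth a quick back-of-the-envelope check, because it lands almost exactly on that "10% of the data" line. This is simple pixel arithmetic, and the helper name is illustrative.

```python
def rendered_share(src, dst):
    """Fraction of the output pixels that exist in the source image."""
    (src_w, src_h), (dst_w, dst_h) = src, dst
    return (src_w * src_h) / (dst_w * dst_h)


share = rendered_share((1280, 720), (3840, 2160))
print(f"720p -> 4K: {share:.1%} rendered, {1 - share:.1%} reconstructed")
```

At a 3x scale on each axis, only one output pixel in nine comes from the renderer. No preset changes that ratio. It only changes how the remaining eight are filled in.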

Your New 60-Second Workflow

Stop trusting the "Recommended Settings." Those recommendations were written by a marketing team, not an engineer. Here is your new workflow for any AI-driven visual task:

1. **Ignore the in-game "Ultra Performance" or "Performance" modes.** These are for 2022 hardware.

2. **Open your Profile Inspector** and check which DLSS version the game is actually using. If it's below 4.0, back up the game's DLSS `.dll` (usually `nvngx_dlss.dll` in the game folder) and manually swap it for the latest 5.0.3 build.

3. **Force Preset G (or Preset F for esports).**

4. **Set "Sharpness" to 0.** Let the neural reconstruction do the work. Artificial sharpening is a relic of the past that only introduces more noise.

It takes exactly one minute.

I've done this for every DLSS-enabled application I run, from real-time 3D viewports to the latest Flight Simulator.

The result is the difference between looking at a screen and looking through a window.
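Step 2 of the workflow is the one people get wrong, usually by comparing version strings by eye or overwriting the original file. Here is a minimal sketch of that step, assuming you already know the installed version string and have the replacement file on disk. The 4.0 threshold comes from the list above, the function names are hypothetical, and the backup naming is just one reasonable choice.

```python
import shutil
from pathlib import Path

MIN_VERSION = (4, 0)          # threshold from step 2 of the workflow
DLL_NAME = "nvngx_dlss.dll"   # usual DLSS library filename


def parse_version(text):
    """Turn '3.7.10' into (3, 7, 10) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def needs_swap(current_version):
    """True when the installed DLSS version is below the threshold."""
    return parse_version(current_version) < MIN_VERSION


def swap_dll(game_dir, new_dll):
    """Back up the game's DLSS DLL once, then copy the replacement over it."""
    target = Path(game_dir) / DLL_NAME
    backup = target.with_name(target.name + ".bak")
    if not backup.exists():            # never overwrite the original backup
        shutil.copy2(target, backup)
    shutil.copy2(new_dll, target)
    return backup


print(needs_swap("3.7.10"))  # older than 4.0
print(needs_swap("5.0.3"))   # already current
```

Parsing into tuples matters because a plain string comparison would rank "3.10" below "3.7". Keeping the `.bak` file means you can roll back in seconds if a game refuses to launch with the swapped library.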

The Death of "Native"

We have to accept that we are living in the age of the **Synthetic Image.** The purists who insist on "Native 4K" are like the people who insisted on horse-drawn carriages because they "trusted the animal." Neural reconstruction is faster, more efficient, and—when tuned correctly—visually superior.

But with that power comes the responsibility to actually know how the tool works.

We’ve spent years complaining about AI "taking over," yet we’re perfectly happy to let a generic toggle decide how we perceive reality. **Don't be a passive consumer of AI.** Be an operator.

Tune the weights. Override the defaults.

Have you noticed your eyes feeling more strained lately, or have you actually sat down to look at the "shimmer" in your favorite apps?

Is "Quality" mode enough for you, or are you ready to start poking the registers? Let's talk about the death of native resolution in the comments.

***

From the Author

Pythonpom on Medium

Pominaus on Substack