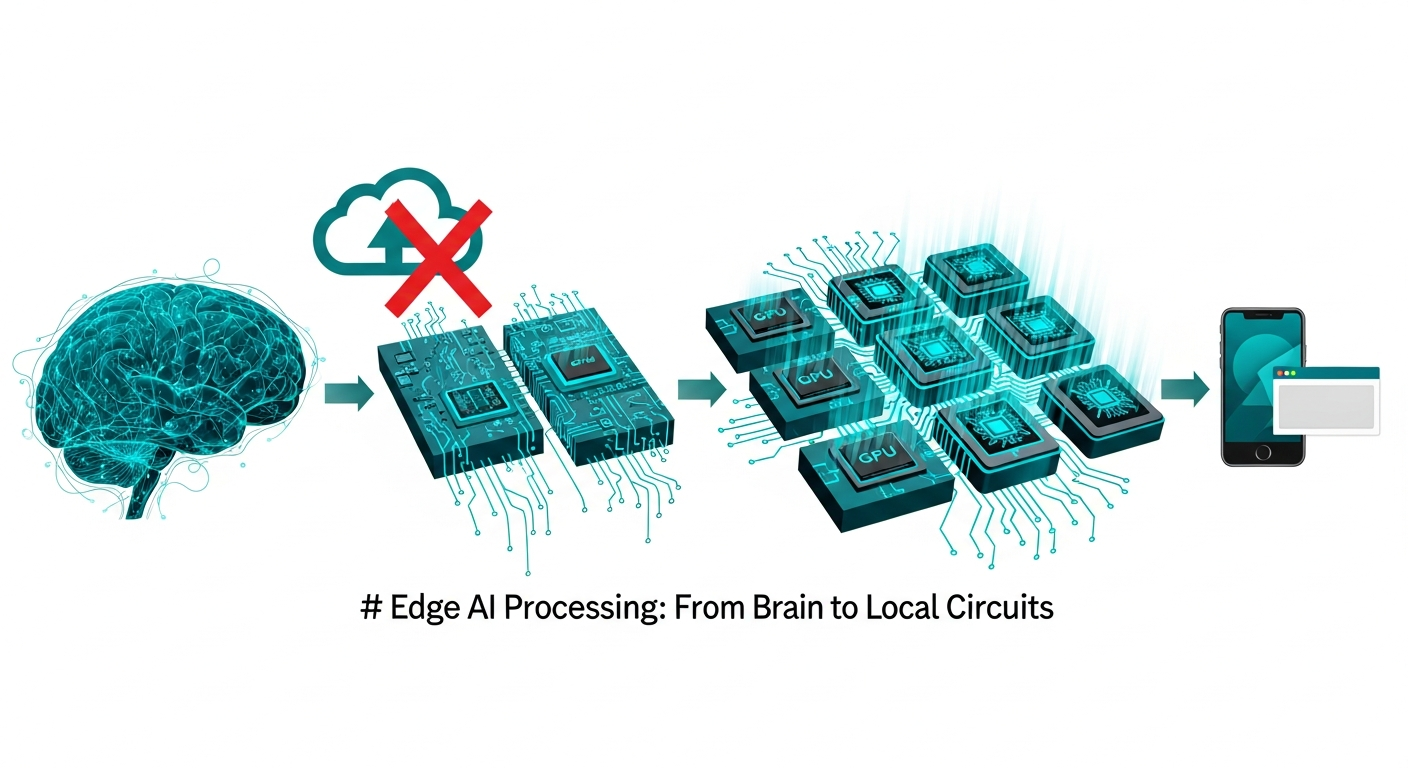

Stop Using Cloud APIs. This WebGPU Secret Actually Made Servers Obsolete.

I spent $4,200 on AWS Lambda and S3 transfer fees last year for a simple background removal tool. I thought I was being "cloud-native" and "scalable," but I was actually just being lazy.

After a week of diving into WebGPU and ONNX Runtime, I realized I was paying Jeff Bezos to process data that my users' own devices could handle in less than 15 milliseconds.

**The "Cloud-First" era of web development is officially over, and most of us are just too comfortable with our API keys to notice.**

The $4,200 Mistake I Made in 2025

Last year, I launched a "Passport Photo Pro" utility. The premise was simple: upload a selfie, the server removes the background, scales it to 2x2 inches, and gives you a print-ready file.

I used a standard Python-based AI model running on a fleet of GPU-accelerated containers.

Every time a user clicked "Upload," I was paying for the data transfer, the cold start of the container, the GPU compute time, and the storage of the intermediate result.

**I was treating the user's browser like a dumb terminal from the 1970s.**

When the app went viral on Reddit, my AWS bill didn't just scale; it exploded. I was stuck in the "Success Trap"—the more people used my tool, the less money I actually made.

I realized that if I didn't change the architecture by 2026, I’d be bankrupt by summer.

The Browser is No Longer a Document Viewer

For decades, we’ve been told that the browser is for "presentation" and the server is for "computation." We’ve clung to this belief even as the silicon inside our phones started outperforming the CPUs in our entry-level servers.

**Your user's iPhone 17 or M4 Mac is a sleeping giant, and you're treating it like a glorified Etch-A-Sketch.**

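In March 2026, we have reached a tipping point. WebGPU now ships in the current versions of every major browser engine (support still depends on the OS and GPU driver, so check your target platforms), giving us low-level access to the device's GPU for both graphics and general-purpose compute.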

Combined with WebAssembly (WASM), the browser has effectively become a high-performance runtime that makes 2023-era cloud APIs look like dial-up.

When I refactored my photo tool to run entirely client-side, my server costs dropped from $350 a month to the price of a static S3 bucket—about $12.

**The compute didn't disappear; I just stopped paying for it.**

The WebGPU Secret: Why Now?

You’ve probably heard of WebGL, but WebGPU is a different beast entirely.

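While WebGL was built for drawing triangles, **WebGPU adds first-class compute shaders, which makes it a practical target for Machine Learning.** It lets us run complex tensor operations directly on the user's GPU, so the heavy math executes on the graphics hardware instead of in JavaScript. (Dedicated NPUs are not exposed through WebGPU; reaching them is the job of the separate WebNN API.)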
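Not every device will hand you a GPU, so the first thing a page has to do is ask. Here is a minimal sketch of that check. `pickBackend`, `GpuLike`, and `NavigatorLike` are names I made up for illustration; the only real API involved is `navigator.gpu.requestAdapter()`, which can resolve to `null` when no suitable adapter exists.

```typescript
// Minimal structural types so the sketch compiles without WebGPU type packages.
interface GpuLike {
  requestAdapter(): Promise<object | null>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

type ComputeBackend = "webgpu" | "wasm";

// Resolves to "webgpu" only when the API exists AND an adapter is returned.
// Anything else (missing API, null adapter, thrown error) falls back to WASM.
async function pickBackend(nav: NavigatorLike): Promise<ComputeBackend> {
  if (!nav.gpu) return "wasm";
  try {
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "webgpu" : "wasm";
  } catch {
    return "wasm";
  }
}
```

In a real page you would pass the global `navigator` and use the result to choose which inference path to load.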

The real "secret" isn't just the raw power; it's the ecosystem. Tools like **ONNX Runtime Web** allow you to take a model trained in PyTorch, export it to the ONNX format, and run it in the browser with near-native performance.
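Running the model is the easy part; most of the glue code is getting pixels into the shape the model expects. The sketch below assumes a model that takes a 1x3xHxW float32 input scaled to [0, 1]. That is a common convention, but layout and normalization are model-specific, so treat it as an assumption. `rgbaToNchw` is a hypothetical helper name.

```typescript
// Convert RGBA bytes (the layout of ImageData.data) into planar RGB floats.
// Assumption: the model wants NCHW layout with values in [0, 1]; alpha is dropped.
function rgbaToNchw(
  rgba: Uint8ClampedArray,
  width: number,
  height: number,
): Float32Array {
  const pixels = width * height;
  if (rgba.length !== pixels * 4) {
    throw new Error("rgba length does not match width * height * 4");
  }
  const out = new Float32Array(pixels * 3);
  for (let p = 0; p < pixels; p++) {
    out[p] = rgba[p * 4] / 255;                  // R plane
    out[pixels + p] = rgba[p * 4 + 1] / 255;     // G plane
    out[2 * pixels + p] = rgba[p * 4 + 2] / 255; // B plane
  }
  return out;
}
```

With ONNX Runtime Web, the result would typically be wrapped as `new ort.Tensor("float32", data, [1, 3, height, width])` and passed to `session.run(...)`, on a session created with `ort.InferenceSession.create(modelUrl, { executionProviders: ["webgpu", "wasm"] })`. The model URL and the input name depend on your exported model.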

We are talking about 8-bit quantized models that can remove a background, fix lighting, and upscale an image in the time it takes for a traditional cloud API to finish a TLS handshake.

I stopped sending pixels over the wire. Instead, I started sending the *logic*. My users now download a 4MB model once, and every subsequent edit is instantaneous and free for both of us.

The "Zero-Latency Edge Stack" (ZLES)

To move away from the cloud-dependency trap, I’ve developed a framework I call the **Zero-Latency Edge Stack**.

It consists of three distinct layers that every modern web dev needs to master if they want to survive the next 18 months of industry consolidation.

1. The Compute Layer (WebGPU/WASM)

Instead of writing heavy logic in Node.js or Python, you move your heavy lifting to the client. If it involves image processing, data crunching, or AI inference, it stays in the browser.

You use **WASM for logic-heavy operations** and **WebGPU for parallelizable math.**
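To make "parallelizable math" concrete, here is a sketch of the smallest useful WebGPU compute job: a WGSL shader that doubles every element of a buffer, plus the arithmetic for how many workgroups to dispatch. The shader, the constant, and `workgroupCount` are illustrative names of my own; the device, buffer, and pipeline setup around them is omitted.

```typescript
// WGSL compute shader: each invocation scales one element in place.
const scaleShaderWgsl = `
@group(0) @binding(0) var<storage, read_write> data: array<f32>;

@compute @workgroup_size(64)
fn main(@builtin(global_invocation_id) id: vec3<u32>) {
  // Guard: the last workgroup may extend past the end of the buffer.
  if (id.x < arrayLength(&data)) {
    data[id.x] = data[id.x] * 2.0;
  }
}
`;

// Must match @workgroup_size in the shader above.
const WORKGROUP_SIZE = 64;

// Number of workgroups to pass to dispatchWorkgroups() for n elements.
// Rounds up so a partial final group still covers the tail of the buffer.
function workgroupCount(
  elementCount: number,
  size: number = WORKGROUP_SIZE,
): number {
  return Math.ceil(elementCount / size);
}
```

Rounding up is why the shader needs the bounds check: for 1,000 elements you dispatch 16 groups of 64, and the last 24 invocations have nothing to do.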

2. The Model Layer (Quantized ONNX)

We used to think AI models had to be gigabytes in size.

In 2026, **quantization has changed the game.** We can now compress highly capable segmentation and enhancement models down to 2MB–10MB without a perceptible loss in quality for the end user.
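If quantization sounds like magic, the core idea fits in a few lines. This is a minimal sketch of symmetric 8-bit quantization over a whole tensor, not the exact scheme any particular toolchain uses; `quantizeInt8` and `dequantizeInt8` are hypothetical names.

```typescript
interface QuantizedTensor {
  values: Int8Array; // one byte per weight instead of four
  scale: number;     // multiply by this to approximate the original float
}

// Symmetric per-tensor quantization: map [-maxAbs, maxAbs] onto [-127, 127].
function quantizeInt8(weights: Float32Array): QuantizedTensor {
  let maxAbs = 0;
  weights.forEach((w) => {
    maxAbs = Math.max(maxAbs, Math.abs(w));
  });
  const scale = maxAbs === 0 ? 1 : maxAbs / 127;
  const values = new Int8Array(weights.length);
  weights.forEach((w, i) => {
    values[i] = Math.round(w / scale);
  });
  return { values, scale };
}

// Recover approximate floats; each value is off by at most about scale / 2.
function dequantizeInt8(q: QuantizedTensor): Float32Array {
  const out = new Float32Array(q.values.length);
  q.values.forEach((v, i) => {
    out[i] = v * q.scale;
  });
  return out;
}
```

The weights take a quarter of the bytes, and the rounding error per weight is bounded by half the scale. Real toolchains usually go further (per-channel scales, calibrated activations), but the size win comes from this same trade.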

These models are cached by the browser just like a CSS file.
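The regular HTTP cache does this implicitly if your headers are right. If you want explicit control, the browser's Cache API is one option, and that is what this sketch uses instead. The interfaces are cut-down stand-ins for the real `Cache` and `Response` types, and `loadModelBytes` is a hypothetical helper; in a page you would likely pass `await caches.open("models-v1")` (the cache name is arbitrary) and the global `fetch`.

```typescript
// Structural subsets of the browser's Response and Cache types, so the sketch
// compiles anywhere and the cache can be swapped for a fake in tests.
interface BodyLike {
  ok: boolean;
  clone(): BodyLike;
  arrayBuffer(): Promise<ArrayBuffer>;
}
interface CacheLike {
  match(url: string): Promise<BodyLike | undefined>;
  put(url: string, response: BodyLike): Promise<void>;
}

// Returns the model bytes, hitting the network only on a cache miss.
async function loadModelBytes(
  url: string,
  cache: CacheLike,
  fetcher: (url: string) => Promise<BodyLike>,
): Promise<ArrayBuffer> {
  const cached = await cache.match(url);
  if (cached) return cached.arrayBuffer();

  const response = await fetcher(url);
  if (!response.ok) throw new Error("model download failed: " + url);
  // Store a clone, because a response body can only be read once.
  await cache.put(url, response.clone());
  return response.arrayBuffer();
}
```

After the first visit, the model loads from disk and the network is never touched, which is also what makes the tool work offline.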

3. The Memory Layer (IndexedDB/OPFS)

If the user is doing the work, they should own the data. By using the **Origin Private File System (OPFS)**, we can handle large files locally in the browser, up to the storage quota the browser grants your origin, with lightning-fast I/O.

The server never sees the "source" file; it only sees the metadata if the user chooses to sync.
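Here is a rough sketch of what keeping the source file on the device looks like. The interfaces are trimmed-down stand-ins for the real OPFS handle types, and `saveLocally` / `readLocally` are hypothetical helpers; in a page, the `root` argument would come from `await navigator.storage.getDirectory()`.

```typescript
// Structural subsets of the OPFS handle types, enough for a write and a read.
interface WritableLike {
  write(data: Uint8Array): Promise<void>;
  close(): Promise<void>;
}
interface FileHandleLike {
  createWritable(): Promise<WritableLike>;
  getFile(): Promise<{ arrayBuffer(): Promise<ArrayBuffer> }>;
}
interface DirectoryLike {
  getFileHandle(
    name: string,
    options?: { create?: boolean },
  ): Promise<FileHandleLike>;
}

// Write bytes to a file in the origin-private directory, creating it if needed.
async function saveLocally(
  root: DirectoryLike,
  name: string,
  bytes: Uint8Array,
): Promise<void> {
  const handle = await root.getFileHandle(name, { create: true });
  const writable = await handle.createWritable();
  await writable.write(bytes);
  await writable.close(); // the data is committed to the file on close
}

// Read the file back; rejects if it does not exist.
async function readLocally(
  root: DirectoryLike,
  name: string,
): Promise<Uint8Array> {
  const handle = await root.getFileHandle(name);
  const file = await handle.getFile();
  return new Uint8Array(await file.arrayBuffer());
}
```

Nothing in this path touches the network, which is the whole point: syncing metadata becomes a separate, opt-in step.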

Why You’re Still Using Cloud APIs (And Why You’re Wrong)

The most common argument I hear against client-side AI is: "But what about my IP? I don't want people to steal my model!" **This is the "Security Through Obscurity" fallacy of the AI age.**

Unless you have spent $100 million training a foundational model like Claude 4.5, your "special" background removal weights aren't a trade secret—they're a commodity.

In 2026, the value isn't in the weights; it's in the **User Experience and the Privacy Guarantee.**

By clinging to Cloud APIs to "protect" a generic model, you are sacrificing speed, profit margins, and user trust.

A competitor will eventually launch a client-side version of your tool that is faster, works offline, and costs them next to nothing to run. They will underprice you until you vanish.

The Privacy Arbitrage: Your Most Powerful Feature

In 2026, data privacy is no longer a "nice to have" or a checkbox for the legal department. It is a competitive moat.

When I moved my photo maker to the client, I added a small badge to the UI: **"Your photos never leave this device."**

My conversion rate on the landing page jumped by 40% overnight.

Users are exhausted by the "Privacy Shell Game" where every startup promises to be secure while simultaneously uploading their personal data to a centralized server.

When the processing happens in the browser, the "Security" section of your Terms of Service becomes remarkably simple: **We can't leak what we don't have.** For an early-stage startup, this shrinks the scope of compliance work like SOC 2 audits, and it keeps your users' photos out of the "database leak" headlines that kill companies.

The Death of the "Dumb Terminal" Developer

The shift toward WebGPU-powered local compute is creating a massive divide in the developer job market.

On one side, you have the "API Wrapper" developers who spend their days plumbing JSON from one server to another. On the other, you have the **High-Performance Web Engineers.**

The latter group understands memory management in WASM, shader optimization in WebGPU, and how to balance model accuracy against download size.

These are the engineers who are building the next generation of creative tools—video editors, CAD software, and AI assistants—that feel "instant."

If your current workflow is just "Take user input -> POST to OpenAI/Cloudinary -> Wait for response -> Show result," you are building a legacy system.

You are building a slower version of what is already possible.

The Bigger Picture: Sovereignty as a Service

As we move deeper into 2026, we are seeing a return to **Local-First software**, but with a modern twist.

We are moving away from the "SaaS as a Landlord" model where you pay a monthly fee just to keep your data accessible.

The "Secret" of WebGPU is that it enables **Sovereignty as a Service.** You provide the interface and the intelligence, but the user provides the power.

This isn't just about saving money on AWS; it's about building a web that is more resilient, more private, and significantly faster.

The era of the "Server-Zero" application is here.

The question is: are you going to keep paying for your users' pixels, or are you going to give them the keys to the supercomputer they’re already holding in their hands?

**Have you tried offloading your heavy AI tasks to the client yet, or are you still terrified of the "Model Stealing" boogeyman? Let’s argue about it in the comments.**

---

From the Author

→ Pythonpom on Medium ← follow, clap, or just browse more!

→ Pominaus on Substack ← like, restack, or subscribe!
