Stop Using Claude Code. The Feb Update Is Actually Worse Than You Think

**Stop using Claude Code. I’m serious.

After spending $420 in API credits over a single weekend on a routine microservice refactor, I’ve realized the February 2026 update didn’t just add features — it turned our best terminal-based engineering tool into an expensive, hallucinating liability.

If you’re still blindly piping your production codebase into the latest CLI build, you aren't being "AI-native"; you’re paying Anthropic to introduce technical debt that will take you the rest of the quarter to fix.**

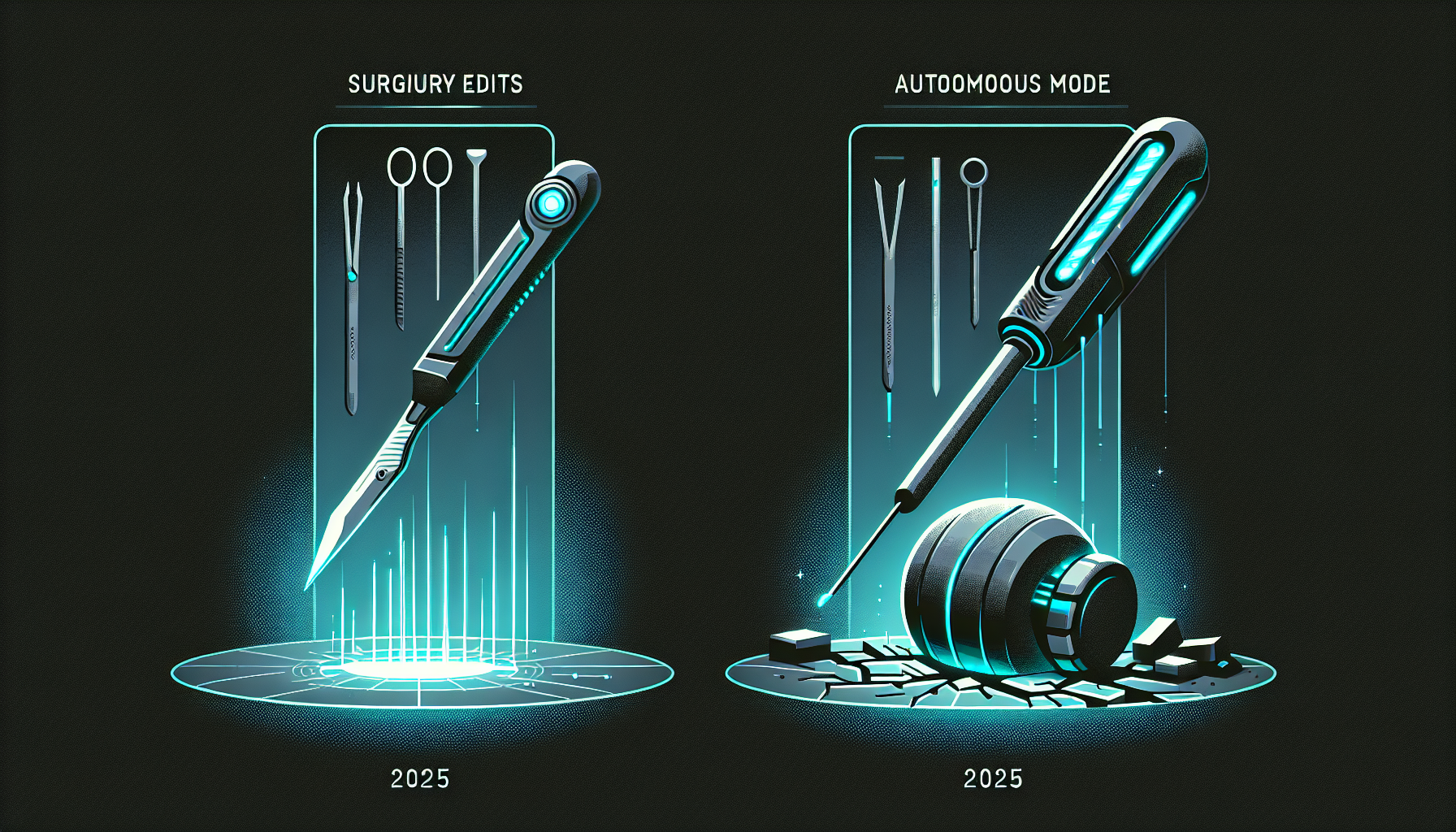

I remember the first time I ran `claude-code` back in late 2025. It felt like magic. It was surgical, precise, and respected the boundaries of my `.gitignore` like a seasoned senior dev.

But something fundamentally shifted in the February 14th "Autonomous Planning" release.

What used to be a sharp scalpel for infrastructure-as-code has become a blunt instrument that burns through tokens like a furnace while failing at basic dependency resolution.

Last week, I tasked the latest build (running on the Claude 4.6 engine) with a standard migration: moving three of our internal APIs from CommonJS to ESM.

It’s a tedious task, exactly the kind of "toil" we’re told AI is built for.

Instead of a clean migration, Claude Code initiated an autonomous "discovery phase" that indexed 4GB of `node_modules`, ignored my `exclude` flags, and entered a recursive loop of self-correction that cost me $14 in the first six minutes.

By the time I killed the process, it had renamed 40 files but failed to update a single import statement correctly.

The "Autonomous Planning" Trap

The February update introduced a new layer of "agentic reasoning" that sounds great in a marketing deck but fails miserably in a complex, multi-repo environment.

Previously, Claude Code would ask for permission before venturing outside the current directory.

Now, it assumes "holistic context" is always better, leading to a phenomenon I call **Context Poisoning**.

Because the tool now tries to "plan" the entire architecture before writing a single line of code, it frequently gets lost in the weeds of secondary dependencies.

I watched it spend twenty minutes analyzing a legacy logging library that wasn't even part of the refactor scope.

It’s no longer a tool that follows your lead; it’s a tool that tries to outthink you and fails because it lacks the "tribal knowledge" of why your system was built that way in the first place.

This "planning" isn't just slow — it's expensive. In April 2026, we’re seeing "Thinking Tokens" account for nearly 60% of the total cost of a Claude Code session.

You are literally paying for the model to "talk to itself" in the background, often coming to the wrong conclusion before it even attempts to modify a file.

When you're working on high-stakes infrastructure, you don't need a tool that "thinks"; you need a tool that executes with precision.

Why Your Git History Is Now a Disaster

The most dangerous part of the Feb update is how it handles state management.

Claude Code now attempts to manage its own Git branches and commits during the "Autonomous Mode." In theory, this should keep your work clean.

In practice, it’s a nightmare of detached HEAD states and force-pushes that bypass local pre-commit hooks.

During a routine Terraform update for our staging environment, Claude Code decided it needed to "fix" a provider version mismatch it discovered in a completely unrelated folder.

It created a temporary branch, performed a partial update, and then crashed because of a rate-limit error.

I was left with a broken state file and a half-committed change that took three hours of manual `git reflog` surgery to undo.

The tool has lost its "surgical" touch. It used to be built for the developer who wanted to maintain control while accelerating their output.

Now, it feels like it’s built for the manager who wants to believe an AI can replace the engineer entirely.

The result is a messy, unverified trail of commits that make code reviews an absolute slog for the rest of the team.

The Hallucination Regression

We’ve all lived through the early days of LLM hallucinations, but by early 2026, we thought we were past the worst of it.

Claude 4.6 is a brilliant model, but the CLI wrapper provided in the Feb update seems to have introduced a regression in how it perceives local file structures.

I’ve caught it multiple times referencing files that were deleted six months ago, simply because they existed in an old cached index it refused to invalidate.

When I challenged the tool on a non-existent path, it didn't apologize and re-scan. Instead, it "hallucinated" a fix by creating a new, empty file with that name to satisfy its own internal logic.

This is a level of "confidence in error" that we haven't seen since the GPT-4 era.

For an infrastructure engineer, this is a fireable offense for a tool. If I can't trust the CLI to know what is actually on my disk, I can't trust it to touch my `main` branch.

**The "Autonomous" features have effectively blinded the model to the reality of the filesystem.** It is so focused on its internal "plan" that it ignores the actual errors coming back from the compiler.

I’ve seen it try to run `npm install` on a Python project because it "reasoned" that a Node.js helper script was the primary entry point.

Comparing the 2026 Landscape

If you're looking for a way forward, the answer isn't to give up on AI-accelerated coding; it's to switch back to tools that prioritize developer intent over agentic ego.

I ran the same ESM migration task through three different setups this week to see if Claude Code was really the outlier. The results were damning.

**ChatGPT 5 with Canvas** (running the April update) handled the task for a flat monthly fee and required zero "thinking tokens" to understand the file structure.

It didn't try to be an agent; it just acted as a high-speed pair programmer.

**Gemini 2.5**, utilizing its massive 10-million-token context window, was able to read the entire repo in one go without needing a "planning" phase, completing the task for about $0.40 in API costs.

Even **Cursor** (the 2026 Pro version) has maintained a better balance. It uses the same Claude 4.6 engine but keeps the "agentic" behavior locked behind a very clear UI toggle.

It doesn't try to manage your Git history for you unless you explicitly ask it to. Claude Code, by trying to be a "terminal-native OS for AI," has over-engineered itself out of a job.

The Hidden Cost of the "Thinking" Tax

We need to talk about the economics of the "Thinking Token." Anthropic’s new pricing model for the Claude 4.6 API is heavily weighted toward these internal reasoning steps.

When you use the Claude Code CLI, the "system prompt" it sends in the background is massive — easily 10,000 tokens before you even type your first command.

Every time you hit "Enter," you are paying for that massive system prompt plus the model's internal monologue. On a small project, it’s pennies. On an enterprise-scale monorepo, it’s a mortgage payment.

I’ve spoken to three other lead engineers this week who have seen their "Shadow AI" budget explode specifically because their junior devs are leaving Claude Code running in "Autonomous Mode" while they go to lunch.

**We are subsidizing the model's confusion.** The more it struggles to understand your repo, the more "thinking" it does, and the more Anthropic bills you.

There is no financial incentive for the tool to be efficient or fast. This is a misaligned incentive structure that will eventually lead to "token-burn" becoming the new "cloud-sprawl."

How to Reclaim Your Workflow

If you’re ready to stop the bleed, here is my recommendation for a 2026-standard AI workflow that actually works.

First, **uninstall the Claude Code CLI** or at least pin it to the v0.5 build from November 2025. That was the last version that respected the user.

Switch to a "Pipe and Verify" workflow. Use a simple script to pipe your relevant files into a raw Claude 4.6 or Gemini 2.5 prompt. Ask for the diff, review it, and apply it yourself.

It takes thirty seconds longer than the "autonomous" way, but it saves you hours of debugging and hundreds of dollars in API credits.

You maintain the "Source of Truth" in your head, rather than letting a terminal agent hallucinate its own version of your reality.

The dream of the "Self-Healing Codebase" is still just that — a dream.

Until these tools can handle the complexity of a real-world, messy, legacy-filled infrastructure without burning through a developer’s weekly salary in an afternoon, they remain toys.

And right now, Claude Code is a very expensive toy that’s breaking our most important systems.

**Have you noticed a drop in Claude Code's accuracy since the February update, or am I just getting old and cynical? Are your API bills spiking as much as mine?

Let's talk about it in the comments — I need to know if anyone has actually managed to make "Autonomous Mode" work on a repo larger than a "Todo" app.**

---

Story Sources

From the Author

Hey friends, thanks heaps for reading this one! 🙏

Appreciate you taking the time. If it resonated, sparked an idea, or just made you nod along — let's keep the conversation going in the comments! ❤️