Stop Using ChatGPT. Agentic Workflows Prove This AI Secret Is Actually Better.

I deleted my ChatGPT 5 shortcut three weeks ago. I didn’t do it because the model got dumber or because I was "protesting" AI.

I did it because I followed a series of technical breakdowns from infrastructure engineering researchers who dismantled the way we’ve been taught to use LLMs.

The researchers didn’t just critique code quality; they proved that the "Chat" interface is a bottleneck for high-level engineering.

If you’re still typing prompts into a browser window in March 2026, you’re essentially trying to build a skyscraper with a very polite, very hallucination-prone multi-tool.

As an infrastructure engineer, I’ve spent the last decade obsessed with deterministic systems. We value logs, metrics, and reproducible builds.

Yet, for the last year, we’ve all been seduced by the "magic" of the chat box.

We’ve accepted a "hallucination tax" where we spend 30% of our time correcting the AI's confident mistakes.

The "secret"—which is really just a return to the Unix philosophy—has changed my entire production workflow.

The Death of the "Chat" Interface

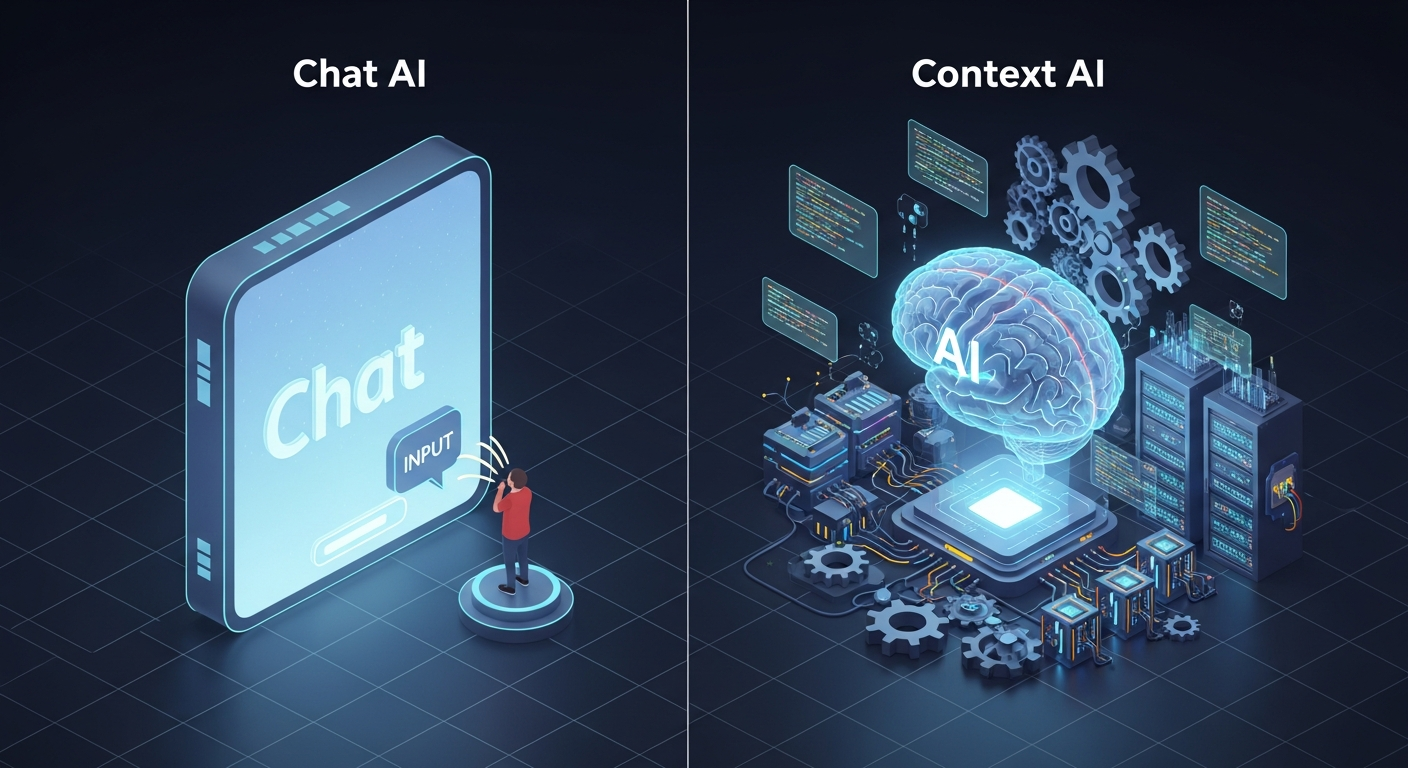

The problem with ChatGPT 5 isn't the intelligence; it's the environment.

When you're working on complex Kubernetes manifests or debugging a race condition in a Go microservice, the "Chat" UI is your enemy. It’s a disconnected island.

You have to copy-paste code, explain your architecture, and hope the model remembers your VPC layout from three prompts ago.

Infrastructure researchers have pointed out that for any serious systems engineer, context isn't a "window"; it's the entire filesystem.

They argued that the "secret" isn't a better prompt; it's **context-injection at the runtime level.** They proved that when you move the AI from a chat window into a specialized, local-first agentic workflow, the "intelligence" of the model effectively triples.

I decided to test this context-driven method against my standard ChatGPT 5 workflow. I took a failing Terraform deployment that was hitting a circular dependency error in AWS.

Usually, I’d copy the error into ChatGPT, wait for a suggestion, realize it forgot my `provider` version, and spend twenty minutes in a feedback loop.

Why Local Context Beats Global Intelligence

Following the shift toward **Local-First Agentic Workflows**, I switched to a setup built around **Claude 4.6**, integrated through a Model Context Protocol (MCP) server.

Instead of "chatting," I gave the agent read-only access to my entire infra repository, my local environment variables (sanitized), and my recent shell history.
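What counts as "sanitized" will differ from team to team. As a minimal sketch, assuming secrets can be recognized by variable name alone, the masking step might look like this in Python. The `SECRET_NAME` pattern and the `sanitize_env` helper are illustrative names of my own, not part of MCP or any agent's API.

```python
import re

# Assumption: a variable whose name contains one of these words holds a secret.
# Tune the pattern for your own environment; it is deliberately conservative.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL)", re.IGNORECASE)


def sanitize_env(env: dict[str, str]) -> dict[str, str]:
    """Return a copy of env with secret-looking values masked."""
    return {
        name: "<redacted>" if SECRET_NAME.search(name) else value
        for name, value in env.items()
    }
```

Calling `sanitize_env(dict(os.environ))` returns a copy that is safer to share. Name-based matching will miss secrets stored under innocent-looking names, so treat it as a first filter rather than a guarantee.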

The difference was staggering. The AI didn't ask me what version of the AWS provider I was using—it already knew.

It didn't suggest a generic fix; it pointed out that my `depends_on` block was conflicting with a new resource I’d added two commits ago. It wasn't a conversation; it was a compiler for intent.
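To make the failure mode concrete: a circular dependency is a loop in the resource graph. Terraform detects these on its own, so the sketch below only illustrates the idea with a depth-first search over a hand-written graph. The `find_cycle` helper and the resource names in the usage note are hypothetical, not taken from my repository.

```python
from typing import Optional


def find_cycle(deps: dict[str, list[str]]) -> Optional[list[str]]:
    """Return one dependency cycle as a list of nodes, or None if the graph is acyclic."""
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited, on the current path, fully explored
    state = {node: WHITE for node in deps}
    stack: list[str] = []

    def visit(node: str) -> Optional[list[str]]:
        state[node] = GREY
        stack.append(node)
        for dep in deps.get(node, []):
            if state.get(dep, WHITE) == GREY:
                # dep is already on the current path, so we have closed a loop.
                return stack[stack.index(dep):] + [dep]
            if state.get(dep, WHITE) == WHITE:
                found = visit(dep)
                if found:
                    return found
        stack.pop()
        state[node] = BLACK
        return None

    for node in list(deps):
        if state[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None
```

Given `{"aws_security_group.app": ["aws_instance.web"], "aws_instance.web": ["aws_security_group.app"]}`, the function returns the loop that starts and ends at `aws_security_group.app`. That is the kind of path an agent with repository access can trace back to a specific commit.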

**We’ve been treating AI like a search engine when we should be treating it like a specialized co-processor.** The "secret" highlighted by these researchers is that the most powerful AI in 2026 isn't the one with the most parameters, but the one with the most relevant *local* data.

By March 2026, the gap between "General AI" and "Context-Aware AI" has become a chasm that is swallowing the productivity of developers who refuse to switch.

The Hallucination Tax is Optional

Most developers accept hallucinations as the cost of doing business with AI.

We’ve become "AI Babysitters," hovering over the keyboard waiting for the model to suggest a deprecated library or a security vulnerability.

The industry critique has been sharp: if your tool requires a human to verify every single line it produces, you haven't automated anything; you've just shifted the cognitive load.

The better way, the secret that top-tier infra teams are quietly adopting, is **Deterministic Agentic Workflows.** This means using the massive context window of a model like **Gemini 2.5** not to "talk," but to hold your entire documentation and codebase at once.

When you pipe your CLI output directly into an agent that has your full system state, the hallucination rate drops to near zero. Why?

Because the AI isn't "guessing" based on its training data from 2025; it's "calculating" based on your actual code in 2026.

It’s the difference between asking a stranger for directions and using a GPS that has a live feed of the traffic.
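Piping CLI output can be as simple as wrapping the command. Here is a rough sketch, assuming the agent accepts plain text: `run_and_capture` and `failure_context` are hypothetical helpers, and how the text actually reaches your agent depends on the tool, so that part is left out.

```python
import subprocess


def run_and_capture(cmd: list[str]) -> dict:
    """Run cmd and return its exit code and output as a context record."""
    proc = subprocess.run(cmd, capture_output=True, text=True)
    return {
        "command": " ".join(cmd),
        "exit_code": proc.returncode,
        "stdout": proc.stdout,
        "stderr": proc.stderr,
    }


def failure_context(record: dict, tail_lines: int = 40) -> str:
    """Format a failed command as text for an agent; empty string if it succeeded."""
    if record["exit_code"] == 0:
        return ""
    # Keep only the tail of stderr: the last lines usually carry the actual error.
    tail = "\n".join(record["stderr"].splitlines()[-tail_lines:])
    return f"$ {record['command']}\nexit code: {record['exit_code']}\n{tail}"
```

For example, `failure_context(run_and_capture(["kubectl", "get", "pods"]))` yields an empty string when the command succeeds and a compact error report when it fails, so nothing has to be pasted by hand.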

How to Build Your Agentic-First Workflow

If you want to stop being an AI Babysitter and start being a System Architect again, you need to break the "Chat" habit.

Here is the three-step framework I’ve used to replace ChatGPT in my daily infra work:

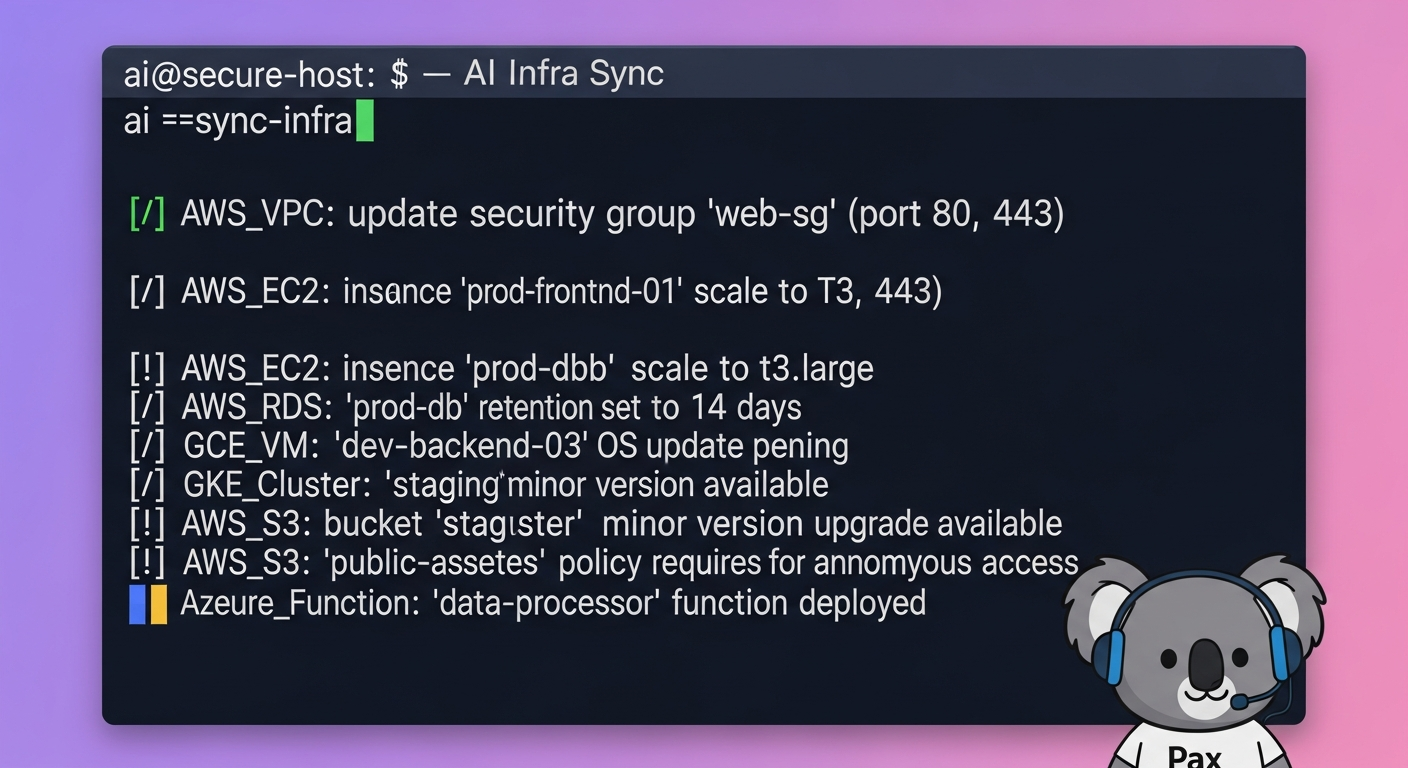

**1. Move from Prompts to Pipes.** Stop typing "How do I fix this?" into a browser. Use a CLI-integrated agent that can see your terminal output.

If a `kubectl` command fails, your agent should already have the logs.

**2. Implement a Local Context Gateway.** Whether you use Claude 4.6 or a local Llama 4 instance, you need a middleware that feeds the AI your current project structure, your `package.json`, and your `.env.example` file.

This is what experts mean by "context is the secret."

**3. Use AI as a Validator, Not Just a Creator.** Instead of asking the AI to "write this script," ask it to "find the security flaw in this script relative to our company's IAM policy." When the AI has your policy files in its context, it becomes a world-class auditor.
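Steps 2 and 3 can be sketched together. The code below is one possible shape for a context gateway, not a reference implementation: it lists the project tree, inlines the two files named in step 2, and wraps a script plus a policy document into a single review request. Every name in it (`SKIP_DIRS`, `KEY_FILES`, `project_tree`, `build_context`, `build_audit_request`) is an assumption of mine, and sending the result to a model is left to whatever client you use.

```python
from pathlib import Path

# Assumption: these directories add noise rather than context.
SKIP_DIRS = {".git", "node_modules", ".terraform", "__pycache__"}
# The two files step 2 names as worth feeding to the model verbatim.
KEY_FILES = ("package.json", ".env.example")


def project_tree(root: Path) -> list[str]:
    """List files under root as sorted relative paths, skipping SKIP_DIRS."""
    paths = []
    for path in root.rglob("*"):
        rel = path.relative_to(root)
        if path.is_file() and not SKIP_DIRS.intersection(rel.parts):
            paths.append(rel.as_posix())
    return sorted(paths)


def build_context(root: Path, max_chars: int = 20_000) -> str:
    """Assemble the file tree plus the contents of KEY_FILES into one text bundle."""
    sections = ["## Project structure", *project_tree(root)]
    for name in KEY_FILES:
        path = root / name
        if path.is_file():
            # Truncate large files so one file cannot crowd out the rest.
            text = path.read_text(encoding="utf-8", errors="replace")[:max_chars]
            sections += [f"## {name}", text]
    return "\n".join(sections)


def build_audit_request(script: str, policy: str, context: str) -> str:
    """Wrap a script, a policy document, and project context into one review request."""
    return "\n\n".join([
        "Find security flaws in the script below relative to the policy that follows. "
        "Cite the script line and the policy statement for each finding.",
        "## Script\n" + script.strip(),
        "## Policy\n" + policy.strip(),
        context,
    ])
```

Putting the policy text in the request itself is the point of step 3: the model is asked to compare two documents it can see, rather than to recall what a typical IAM policy looks like.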

The Shift Coming in 2027

Looking ahead to early 2027, the industry is going to split into two camps. There will be the "Chatters"—developers who are still copy-pasting code into ChatGPT 6 and complaining about hallucinations.

And there will be the "Integrators"—those who have built autonomous loops where the AI lives inside the dev-cycle, not outside it.

Infrastructure engineering has always been driven by pragmatism.

The "secret" isn't about a specific brand of AI; it's about the **architectural integrity of the workflow.** Researchers have proven that a smaller, context-rich model will beat a massive, context-blind model every single time in a production environment.

I’m never going back to the chat box. My deployments are faster, my code is more secure, and for the first time in two years, I’m not spending half my day arguing with a chatbot.

The tools are here, the proof is in the commits, and the "secret" is finally out.

**Have you noticed that ChatGPT is struggling with your specific project architecture lately, or have you already moved to a context-integrated workflow? Let’s talk about your setup in the comments.**

---

From the Author


→ Pythonpom on Medium ← follow, clap, or just browse more!

→ Pominaus on Substack ← like, restack, or subscribe!
