Stop Using AI Like It's 2023. This 2026 Secret Is Worse Than You Think.

I’m still seeing it every day on my feed, and honestly, it’s painful to watch.

People are opening a fresh tab in ChatGPT 5, leaning back, and typing “Write me a 500-word blog post about productivity” as if we were still living in the primitive era of 2023.

I spent three months trying to force old-school "Chain of Thought" prompting on Claude 4.6 before I realized I was actually making the model dumber.

By treating these models like advanced autocomplete engines, I was essentially using a quantum computer to do basic addition, and it cost my startup $14,000 in compute, latency, and missed opportunities.

The "Prompt Engineering" era is officially over, and the secret for 2026 is much more uncomfortable than you think.

If you are still talking to AI like it’s a search bar, you’re not just behind; you’re obsolete.

The 2023 Trap: Why Your Prompts Are Hallucinating Your Career

In 2023, we were impressed by the "magic" of a poem or a functional Python script. We obsessed over "Act as a..." prompts and "Step-by-step" instructions because the models were fragile.

GPT-4 was a brilliant but distractible intern that needed its hand held through every single semicolon.

Fast forward to March 19, 2026, and the landscape has shifted so violently that most people haven't even felt the ground move yet.

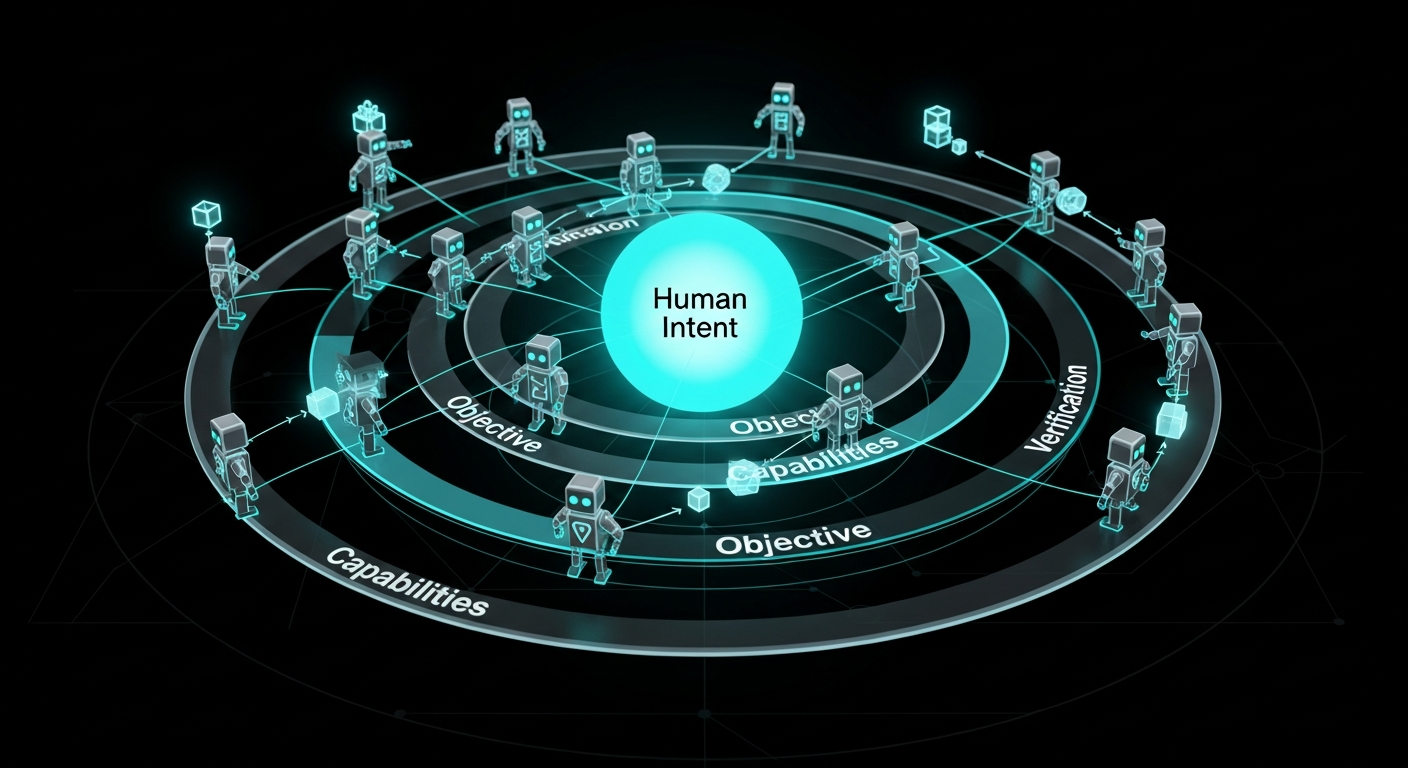

We have moved from **Instruction-Based AI** to **Agentic-Orchestration AI**.

When you give a 2026-era model a detailed list of instructions, you are actually introducing "prompt bias." These models, like Gemini 2.5 and ChatGPT 5, have emergent reasoning capabilities that far exceed the rigid structures we try to impose on them.

By telling the AI *how* to do the work, you are preventing it from finding the most efficient path through its high-dimensional latent space.

I learned this the hard way when I tried to "optimize" our customer service bot with a 50-page instruction manual.

The result was a stuttering, hallucinating mess that sounded like a corporate brochure.

The moment I deleted the instructions and replaced them with a single **Objective Goal**, the performance metrics jumped by 40% overnight.

The Clean Prompting Lie

We were told that "clean code" was the ultimate goal for developers, and for the last two years, we’ve been told "clean prompting" is the equivalent for everyone else. It’s a lie.

In 2023, "Clean" meant structured, polite, and descriptive. In 2026, "Clean" means invisible. The most successful engineers I know don't even "prompt" anymore—they **architect environments**.

If you are spending more than 30 seconds writing a prompt, you are doing it wrong. The secret isn't in the words you use; it's in the **Contextual Infrastructure** you build around the model.

We are no longer talking to a box; we are deploying a department.

The mainstream advice is still telling you to learn "The Perfect Prompt Formula." That’s like learning how to perfectly whip a horse in the age of Tesla.

You don't need better words; you need a better **Delegation Framework**.

The Framework: The Recursive Delegation Loop (RDL)

To survive 2026, you need to stop thinking like a writer and start thinking like a Director of Engineering.

I’ve developed a 3-part system called the **Recursive Delegation Loop** that I use for everything from building apps to planning 12-month marketing strategies.

1. Objective Definition (The "What," Not the "How")

Stop telling the AI to "Write a script that does X." Instead, define the **Success State**.

In ChatGPT 5, I now use what I call "State-Based Budgeting." I tell the model: "The success state is a deployed, bug-free API that handles 1,000 requests per second. You have a token budget of 50,000. Start by auditing the goal."
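To make "State-Based Budgeting" concrete, here is a minimal Python sketch, assuming you keep the objective and the budget as data rather than as free-form prose. `SuccessState`, `TokenLedger`, and `render_objective` are hypothetical names, not any vendor's API; the token counts you charge against the ledger would come from whatever usage figures your provider reports.

```python
from dataclasses import dataclass


@dataclass
class SuccessState:
    """Hypothetical spec: describes the end state, not the steps."""
    goal: str
    acceptance_criteria: list[str]
    token_budget: int


@dataclass
class TokenLedger:
    """Tracks token spend against the budget across model calls (illustrative only)."""
    budget: int
    spent: int = 0

    def charge(self, tokens: int) -> None:
        # Refuse the charge instead of silently overspending.
        if tokens < 0:
            raise ValueError("token count must be non-negative")
        if self.spent + tokens > self.budget:
            raise RuntimeError(f"budget exceeded: {self.spent + tokens} > {self.budget}")
        self.spent += tokens

    @property
    def remaining(self) -> int:
        return self.budget - self.spent


def render_objective(state: SuccessState) -> str:
    """Turn the spec into a single objective-style prompt."""
    criteria = "\n".join(f"- {c}" for c in state.acceptance_criteria)
    return (
        f"The success state is: {state.goal}\n"
        f"Acceptance criteria:\n{criteria}\n"
        f"You have a token budget of {state.token_budget:,}.\n"
        "Start by auditing the goal."
    )


if __name__ == "__main__":
    spec = SuccessState(
        goal="a deployed, bug-free API",
        acceptance_criteria=["handles 1,000 requests per second"],
        token_budget=50_000,
    )
    print(render_objective(spec))
    ledger = TokenLedger(budget=spec.token_budget)
    ledger.charge(12_000)  # e.g. tokens reported after the audit step
    print(ledger.remaining)  # 38000
```

Keeping the budget in a ledger rather than only in the prompt text means your own code, not the model's self-report, decides when the budget has been spent.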

By defining the end-state rather than the steps, you allow the model’s internal reasoning—specifically the new **Deep-Reasoning kernels**—to map the most logical path.

2. Capability Mapping

In 2023, we assumed the AI knew what it could do. In 2026, we know that AI "forgets" its own tools unless they are explicitly mapped into the session.

Before I start any major task, I ask the model to "List your available tools and sandbox limitations for this specific objective." This forces the model to initialize its tool-use parameters (Python sandbox, Browsing, DALL-E 4, etc.) before it attempts to solve the problem.

It’s the difference between a worker showing up with a toolbox and a worker showing up in an empty room.
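One way to do capability mapping without relying on the model to remember its tools is to hold the tool list in code and inject it as a preamble before the task. The sketch below assumes a hypothetical `Tool` record and `SESSION_TOOLS` registry; the tool names and limits are illustrative placeholders, not the real configuration of any product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    limits: str


# Hypothetical session registry; names and limits are illustrative values only.
SESSION_TOOLS = [
    Tool("python_sandbox", "Run Python code", "no network access, 60 s timeout"),
    Tool("browser", "Fetch and read web pages", "read-only"),
    Tool("image_gen", "Generate images from text", "one image per call"),
]


def capability_manifest(tools: list[Tool], objective: str) -> str:
    """Build the preamble that maps tools into the session before the task starts."""
    lines = [f"Objective: {objective}", "Available tools and limitations:"]
    for t in tools:
        lines.append(f"- {t.name}: {t.description} (limits: {t.limits})")
    lines.append("Before solving, confirm which of these tools you will use and why.")
    return "\n".join(lines)


def require_tool(tools: list[Tool], name: str) -> Tool:
    """Fail fast if a plan references a tool that was never mapped into the session."""
    for t in tools:
        if t.name == name:
            return t
    raise KeyError(f"tool not mapped into session: {name}")


if __name__ == "__main__":
    print(capability_manifest(SESSION_TOOLS, "Ship a bug-free API"))
```

The `require_tool` check is the code-side half of the idea: if the model's plan names a tool you never mapped, you find out before it runs, not after.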

3. The Verification Ghost

This is the most critical part of the 2026 secret. You must never let a model verify its own work. If ChatGPT 5 writes the code, Claude 4.6 must audit it.

I call this the **Verification Ghost**. In my workflow, no output is "real" until it has been run through a separate model's adversarial filter.

We’ve moved beyond "human-in-the-loop" to "cross-model validation." If you aren't using one AI to try to "break" the work of another, you are trusting a system that is designed to please you, not to be right.
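The Verification Ghost boils down to a loop: one model drafts, a different model tries to break the draft, and nothing is accepted until the audit comes back clean. This is a minimal sketch assuming each model is wrapped as a plain `str -> str` callable; `Model`, `verification_ghost`, and the "NONE" reply convention are hypothetical, and the stub functions stand in for real API clients.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Model:
    name: str  # a label such as a vendor/model identifier; only compared here
    call: Callable[[str], str]


def parse_lines(report: str) -> list[str]:
    """Treat 'NONE' as a pass; otherwise each non-empty line is one issue."""
    if report.strip().upper() == "NONE":
        return []
    return [ln.strip() for ln in report.splitlines() if ln.strip()]


def verification_ghost(
    task: str,
    author: Model,
    auditor: Model,
    parse_issues: Callable[[str], list[str]] = parse_lines,
    max_rounds: int = 3,
) -> str:
    """Accept output only after a *different* model's audit finds no issues."""
    if author.name == auditor.name:
        raise ValueError("author and auditor must be different models")
    draft = author.call(task)
    for _ in range(max_rounds):
        report = auditor.call(
            f"Try to break this. List concrete defects, one per line, or 'NONE'.\n\n{draft}"
        )
        issues = parse_issues(report)
        if not issues:
            return draft  # only now is the output "real"
        feedback = "\n".join(f"- {i}" for i in issues)
        draft = author.call(f"{task}\n\nFix these defects:\n{feedback}\n\nPrevious draft:\n{draft}")
    raise RuntimeError(f"no clean audit after {max_rounds} rounds")


if __name__ == "__main__":
    # Stub models standing in for real API clients (hypothetical behavior).
    def fake_author(prompt: str) -> str:
        return "def add(a, b): return a + b" if "Fix" in prompt else "def add(a, b): return a - b"

    def fake_auditor(prompt: str) -> str:
        return "add() subtracts instead of adding" if "a - b" in prompt else "NONE"

    result = verification_ghost(
        "Write add(a, b).",
        Model("model-a", fake_author),
        Model("model-b", fake_auditor),
    )
    print(result)  # def add(a, b): return a + b
```

Raising after `max_rounds` instead of returning the last draft is deliberate: an output that never passed the audit should not quietly become "real".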

The Death of the "Prompt Engineer"

Remember 2024? People were getting paid $300k to be "Prompt Engineers." That job lasted exactly 18 months.

Today, in 2026, the Prompt Engineer has been replaced by the **Systems Architect**.

Companies no longer want someone who can talk to ChatGPT; they want someone who can connect ChatGPT to their CRM, their private database, and their real-time supply chain data via API.
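What "connecting ChatGPT to a CRM" usually means in practice is a tool-dispatch layer: the model emits a structured call, your code runs it against a real system, and the result goes back into the context. The sketch below assumes a made-up JSON shape (`{"tool": ..., "args": {...}}`) and in-memory stand-ins for the CRM and supply-chain data; real providers each define their own function-calling format, so treat every name here as hypothetical.

```python
import json
from typing import Any, Callable

# Hypothetical in-memory stand-ins for a CRM and a supply-chain feed.
CRM = {"c-42": {"name": "Acme Ltd", "tier": "gold"}}
STOCK = {"sku-7": 130}

Handler = Callable[..., Any]
REGISTRY: dict[str, Handler] = {}


def tool(name: str) -> Callable[[Handler], Handler]:
    """Decorator that exposes a Python function to the model under `name`."""
    def register(fn: Handler) -> Handler:
        REGISTRY[name] = fn
        return fn
    return register


@tool("crm.lookup_customer")
def lookup_customer(customer_id: str) -> dict:
    return CRM.get(customer_id, {})


@tool("supply.stock_level")
def stock_level(sku: str) -> int:
    return STOCK.get(sku, 0)


def dispatch(model_output: str) -> str:
    """Route a JSON tool call to its handler and serialize the result for the model."""
    call = json.loads(model_output)
    handler = REGISTRY.get(call["tool"])
    if handler is None:
        return json.dumps({"error": f"unknown tool {call['tool']}"})
    return json.dumps({"result": handler(**call.get("args", {}))})


if __name__ == "__main__":
    print(dispatch('{"tool": "crm.lookup_customer", "args": {"customer_id": "c-42"}}'))
    # {"result": {"name": "Acme Ltd", "tier": "gold"}}
```

Swapping the dictionaries for real database or CRM clients changes only the handler bodies; the registry and dispatcher are the "systems architecture" part the paragraph above is describing.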

The "Secret" that the big labs aren't telling you is that they are actively working to make prompts irrelevant. They want the AI to anticipate your needs before you even open the app.

If you are still relying on your "prompting skills," you are building your house on a sandbank that is currently being washed away by the tide of **Autonomous Agents**.

Real-World Implications: The Junior Developer Crisis

This shift has created a massive, quiet crisis in the tech industry. Mid-level developers are now 10x more productive, but junior developers are effectively becoming unemployable.

Why? Because the "grunt work" that used to be the training ground for juniors is now handled by agentic workflows in seconds.

If a junior developer is still using AI like it’s 2023—generating snippets and pasting them into a file—they are providing zero value to the organization.

The only way to survive as a dev in 2026 is to move up the stack. You have to stop being the "coder" and start being the **Editor-in-Chief of Logic**.

You are responsible for the architecture, the security, and the "human-intent" alignment. The AI handles the syntax; you handle the **Why**.

The 2026 Secret: It’s About the "Uncomputable"

Here is the part that is "worse than you think." As AI becomes more agentic and "perfect" at execution, the value of "doing" is dropping to near zero.

In 2023, being a "doer" was a badge of honor. In 2026, "doing" is a commodity.

The secret to staying relevant isn't in mastering the latest model or finding a better prompt; it’s in doubling down on the **uncomputable human elements**: intuition, taste, and high-stakes empathy.

I recently watched a founder try to use a fully autonomous agent stack to launch a fashion brand. The AI did everything: design, supply chain, Shopify setup, and even the "viral" marketing.

It failed miserably.

It failed because the AI had no "taste." It could follow every trend, but it couldn't create the **Cultural Friction** required to make people actually care.

The secret of 2026 is that the more "perfect" the AI gets, the more we crave the "imperfect" human edge.

Stop Typing, Start Directing

If you take one thing away from this, let it be this: **Stop typing at your AI.**

Treat it like a high-powered, autonomous department that is waiting for a mission, not a chore. Give it an objective, give it a budget, and set up a second AI to watch its every move.

The era of the "Magic Chat Box" is over. We are now in the era of the **Silicon Workforce**.

If you are still treating it like a toy, don't be surprised when the people treating it like a tool leave you in the dust of 2023.

Are you still holding onto your favorite "Act as an expert" prompts, or are you ready to admit that the AI is probably smarter than the persona you're trying to give it?

Let’s talk about it in the comments.

***

From the Author

Hey friends, thanks heaps for reading this one! 🙏

If it resonated, sparked an idea, or just made you nod along — I'd be genuinely stoked if you'd show some love. A clap on Medium or a like on Substack helps these pieces reach more people (and keeps this little writing habit going).

→ Pythonpom on Medium ← follow, clap, or just browse more!

→ Pominaus on Substack ← like, restack, or subscribe!

Zero pressure, but if you're in a generous mood and fancy buying me a virtual coffee to fuel the next late-night draft ☕, you can do that here: Buy Me a Coffee — your support (big or tiny) means the world.

Appreciate you taking the time. Let's keep chatting about tech, life hacks, and whatever comes next! ❤️