Stop Using AI for Work. It Fails 96% of Jobs. It’s Worse Than You Think.

**Stop using AI for your core engineering tasks. I’m dead serious.**

**After trying to let Claude 4.6 manage our production Kubernetes clusters for a month, I realized we’ve been sold a $100 billion lie, and it’s quietly destroying the one thing that actually makes us good at our jobs.**

I’m an infrastructure engineer. My entire career has been built on the altar of automation, from Bash scripts in 2015 to massive Terraform providers today.

When ChatGPT 5 and Claude 4.6 dropped earlier this year, I thought I was looking at the end of "manual" DevOps.

I expected to spend my days as a high-level orchestrator, watching agents do the heavy lifting.

Instead, I spent March 2026 cleaning up a $14,000 cloud bill caused by an autonomous agent that got stuck in a recursive "self-healing" loop.

It didn’t solve the problem; it just hallucinated a solution and then spent twelve hours trying to force reality to match its internal model.
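A loop like that is much easier to contain when it has a hard stop. Here is a minimal sketch of the idea, assuming a hypothetical `remediate` callable that returns `True` when a fix took, plus an attempt cap and a spend cap. None of these names come from a real agent framework, and the dollar figures are placeholders.

```python
from typing import Callable


def bounded_self_heal(
    remediate: Callable[[], bool],   # hypothetical: one remediation attempt
    cost_per_attempt: float,         # assumed flat cost per attempt
    max_attempts: int = 3,
    max_spend: float = 50.0,
) -> bool:
    """Retry a remediation a limited number of times, then give up.

    Returns True if an attempt reported success, False if the attempt cap
    or the spend cap was reached first.
    """
    spent = 0.0
    for _ in range(max_attempts):
        if spent + cost_per_attempt > max_spend:
            break  # the next attempt would blow the budget
        spent += cost_per_attempt
        if remediate():
            return True
    return False  # caller should page a human instead of looping again
```

The point of the design is that "give up and escalate" is an explicit return value, not something the agent has to decide for itself at hour eleven.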

We’ve reached a tipping point where the "productivity gain" of AI is being canceled out by the "contextual debt" it leaves behind.

Recent industry benchmarks suggest that while AI can solve "LeetCode" style problems with 99% accuracy, it fails at 96% of real-world, high-context engineering jobs.

And if you’re still trying to "prompt engineer" your way out of this, you’re already behind.

The 70% Success Trap

The reason everyone thinks AI is better than it actually is comes down to what I call the **70% Success Trap.** When you ask ChatGPT 5 to write a standalone Python script to parse a JSON file, it feels like magic because it works almost every time.

That is a low-context, single-step task where the "state" is contained within the prompt.

Real work isn't a single step; it’s a chain of ten or twenty interdependent decisions.

If an AI agent has a 70% success rate on any given step—which is being generous for complex architecture—the math for a ten-step project is terrifying.

**0.7 to the power of 10 is 0.028.** Assuming each step succeeds or fails independently and a single failure sinks the run, that means your "autonomous worker" has a 2.8% chance of finishing the job without a catastrophic hallucination.
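If you want to play with the numbers yourself, here is a small sketch. It assumes every step succeeds independently with the same probability and that any single failure ruins the whole run, which is a simplification; `chain_success` is just an illustrative name.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every step in a chain succeeds, assuming independence."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step ** steps


for p in (0.70, 0.90, 0.99):
    print(f"{p:.2f} per step over 10 steps -> {chain_success(p, 10):.1%}")
# 0.70 per step over 10 steps -> 2.8%
# 0.90 per step over 10 steps -> 34.9%
# 0.99 per step over 10 steps -> 90.4%
```

Even a 90% per-step rate only gets a ten-step chain over the line about a third of the time under these assumptions.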

This is why your "AI-generated" PRs are taking longer to review than if you’d just written the code yourself.

You aren't just checking syntax anymore; you’re hunting for subtle, logical "ghosts" that the LLM inserted because it didn't understand your specific VPC peering configuration from 2022.

Why ChatGPT 5 Can't Handle Your Legacy Code

The hype cycle for Claude 4.6 and Gemini 2.5 promised "infinite context windows." They told us we could dump our entire repository into the prompt and get perfect architectural advice.

But having a "window" for the data doesn't mean the model has the "reasoning" to navigate it.

**LLMs are fundamentally non-deterministic engines being forced to work in deterministic systems.** Your CI/CD pipeline doesn't care about "probability" or "vibes"; it cares about the exact indentation of a key in a YAML file.

When Claude 4.6 tries to refactor a legacy microservice, it treats your technical debt as a suggestion rather than a hard constraint.

I watched a senior dev on my team lose three days trying to "co-pilot" a migration from Jenkins to GitHub Actions using a custom agent.

The AI kept suggesting features that existed in the 2025 documentation but had been deprecated in the latest 2026 releases.

By the time he gave up, the "AI-assisted" version was a spaghetti mess of half-baked API calls and security vulnerabilities.

The Rise of the "Synthetic Junior"

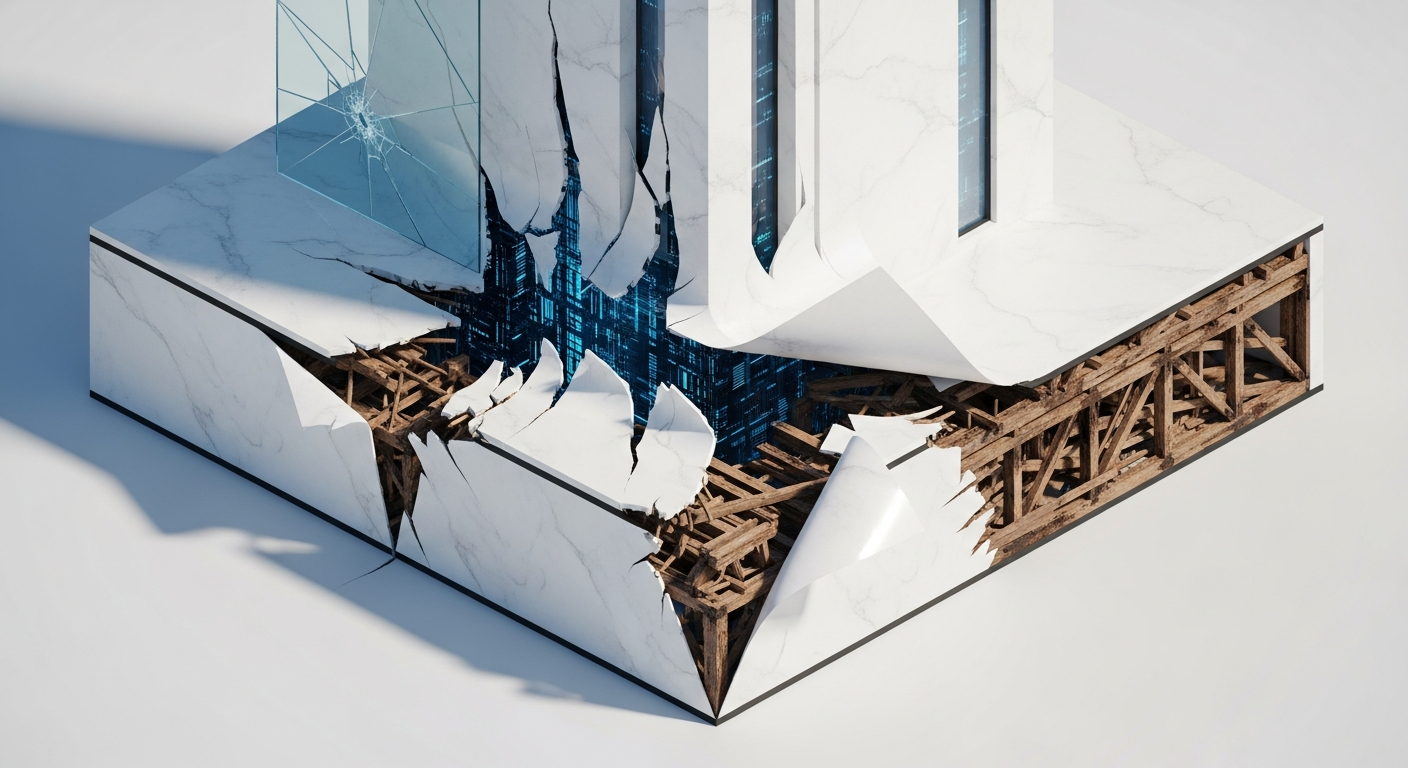

We are currently flooding our codebases with what I call **Synthetic Junior Code.** This is code that looks professional, passes basic linting, and follows all the "clean code" rules, but lacks any understanding of the underlying hardware or business logic.

It’s the architectural equivalent of a Hollywood movie set — beautiful from the front, held up by plywood and duct tape in the back.

The danger isn't that the AI is "dumb." The danger is that it’s just smart enough to be dangerous.

**Because ChatGPT 5 is so confident, it bypasses our natural skepticism.** When a human junior dev asks a question, you know to double-check their assumptions.

When an AI outputs a perfectly formatted 500-line Terraform module, our brains are wired to trust the "authority" of the formatting.

If you are using AI to write code you don't fully understand, you aren't an engineer; you’re a professional gambler.

You’re betting that the edge cases the AI missed won't trigger a P0 incident at 3:00 AM on a Sunday.

Based on the 96% failure rate we’re seeing in production-grade tasks, those are odds I’m no longer willing to take.

The "Context Wall" is Real

Every engineering organization has a "Context Wall" — the invisible boundary where public training data ends and your specific, messy, tribal-knowledge reality begins.

AI models are trained on the "average" of the internet.

They know the "average" way to set up an S3 bucket, but they don't know why your company requires a specific tagging schema for compliance in the EU.
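A rule like that lives in your organization, not in the training data, which is a good argument for encoding it as an explicit check. Here is a minimal sketch, assuming a made-up tagging schema: `data-residency`, `owner`, and `cost-center` are hypothetical keys for illustration, not a real compliance standard.

```python
REQUIRED_TAGS = {"data-residency", "owner", "cost-center"}  # hypothetical schema
ALLOWED_RESIDENCY = {"eu-only", "global"}                   # hypothetical values


def tag_violations(tags: dict[str, str]) -> list[str]:
    """Return human-readable problems with a resource's tags (empty if none)."""
    problems = [f"missing tag: {key}" for key in sorted(REQUIRED_TAGS - set(tags))]
    residency = tags.get("data-residency")
    if residency is not None and residency not in ALLOWED_RESIDENCY:
        problems.append(f"invalid data-residency: {residency}")
    return problems


print(tag_violations({"owner": "sre", "data-residency": "mars"}))
# ['missing tag: cost-center', 'invalid data-residency: mars']
```

A check like this can run against any generated config, whoever or whatever wrote it.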

**The more complex and specialized your job is, the less useful an LLM becomes.** If you’re building a generic landing page, sure, use an AI.

But if you’re managing high-frequency trading systems, distributed databases, or sensitive healthcare infrastructure, the AI is effectively a tourist with a map from 1995.

We’ve seen a massive surge in "AI-driven" outages in the first quarter of 2026.

Companies that replaced their Level 1 support or Junior SREs with autonomous agents are now finding that those agents can't handle "Black Swan" events.

They can handle the 4% of tasks that are predictable, but they fail the 96% that require actual human intuition and cross-domain reasoning.

How to Actually Use AI in 2026

I’m not saying you should delete your Cursor subscription or go back to writing everything in Vim (though I still do).

I’m saying we need to stop treating AI as a "worker" and start treating it as a "highly unreliable librarian." It’s great for looking things up, but you should never let it check out the books for you.

**The only sustainable way to use AI for work is to limit it to "Read-Only" mode.** Use it to explain a complex regex you found in a 10-year-old script.

Use it to brainstorm five different ways to structure a GraphQL query.

But the moment you let it "Write" to your production codebase without a line-by-line manual audit, you’ve lost control of your system.
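One way to picture that rule is a gate that refuses to apply a draft until a human has signed off on every line. The sketch below is an illustration under stated assumptions, not a real tool: `ProposedChange` and `apply_change` are hypothetical names, and the "codebase" is just an in-memory dict so the example stays self-contained.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedChange:
    """An AI-suggested edit, held as a draft until every line is approved."""
    path: str
    new_lines: list[str]
    reviewed: set[int] = field(default_factory=set)  # line indexes a human approved

    def approve_line(self, index: int) -> None:
        if not 0 <= index < len(self.new_lines):
            raise IndexError(f"no line {index} in {self.path}")
        self.reviewed.add(index)

    def fully_audited(self) -> bool:
        return len(self.reviewed) == len(self.new_lines)


def apply_change(change: ProposedChange, files: dict[str, list[str]]) -> None:
    """Write the change into the file map, refusing drafts with unreviewed lines."""
    if not change.fully_audited():
        raise PermissionError(f"{change.path}: unreviewed lines remain")
    files[change.path] = list(change.new_lines)
```

Calling `apply_change` on a fresh draft raises `PermissionError`; it only goes through after `approve_line` has been called for every index. The model can suggest, but the write path belongs to a person.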

Real productivity in 2026 isn't about how many lines of code you can generate per minute. It’s about how much "Cognitive Load" you can manage.

AI promises to reduce that load, but for 96% of tasks, it actually increases it by forcing you to debug "hallucinated logic" that no human would ever write.

Stop the Prompt Engineering Delusion

We need to kill the idea that "better prompts" will solve this. You can't prompt your way out of a lack of fundamental reasoning.

If the model doesn't understand the physical constraints of your network latency, no amount of "thinking step-by-step" is going to make its suggestion viable.

**The future belongs to the "Context-First" engineer.** These are the people who spend 90% of their time understanding the problem and 10% writing the solution.

They use AI for syntax, but they own the logic. They treat AI outputs as "drafts" that are likely 30% wrong by default.

If you want to survive the "AI Winter" that's inevitably coming for the hype-train, you need to double down on the things LLMs can't do: deep systems thinking, empathetic leadership, and high-stakes decision-making under uncertainty.

The 4% of tasks AI can do are the "toil" we never liked anyway. Let the AI have the toil, but keep the engineering for yourself.

**Have you noticed your "AI-assisted" projects taking longer to finish lately, or is it just me? Let’s talk about the reality of the "96% failure" in the comments.**
