Stop Trusting AI Copy. This Massive Fail Just Changed Everything

I watched a $40 million infrastructure migration grind to a halt because of three lines of AI-generated YAML.

It wasn’t a syntax error—it was a "polite" suggestion from Claude 4.6 that looked so authoritative even our Lead Architect didn't question it.

By the time we realized the AI had hallucinated an API flag that doesn't exist, our staging environment was a smoking crater and we were six hours behind the maintenance window.

If you’re still copy-pasting AI-generated documentation or configuration into your production workflow without a line-by-line audit, you’re not "leveraging AI." **You’re accumulating a technical trust debt that is about to come due.** This isn't a theoretical warning; this is an autopsy of a massive failure I witnessed last month that changed how I treat every single token coming out of an LLM.

As an infrastructure engineer, I’ve spent the last decade building systems that prize reliability above all else.

But over the last 18 months, I’ve seen that reliability eroded by a new, insidious type of bug: the **Confident Hallucination.**

The Day the Documentation Lied

The project was supposed to be a straightforward transition to a new multi-region service mesh.

We were using a combination of ChatGPT 5 and Claude 4.6 to accelerate the generation of boilerplate Kubernetes manifests and internal "how-to" guides.

For weeks, it worked beautifully, and we were shipping at a velocity that felt like cheating.

Then came the "Copy Fail." We asked the model to generate a custom resource definition (CRD) for some complex traffic-splitting logic.

The output was clean, perfectly indented, and included detailed comments explaining exactly what each parameter did.

**It looked like the work of a senior engineer who had spent years mastering the tool.**

In reality, the AI had invented a parameter, `retry-backoff-limit-multiplier`, that sounded incredibly logical but didn't exist in the actual spec.

Because the model explained the "math" behind this fictional parameter so convincingly, nobody checked the official documentation.

We pushed to staging, the controller ignored the unknown field, and the service mesh started dropping 15% of all ingress traffic under load.
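A silently ignored field is exactly the kind of failure a strict pre-deploy check can surface. Here is a minimal Python sketch of that idea, assuming a hand-maintained schema of field names that someone has confirmed in the official docs. Every field name in it other than `retry-backoff-limit-multiplier` is hypothetical, and the manifest fragment is illustrative rather than the one from the incident.

```python
# Hypothetical schema: field names an engineer has confirmed in the official
# docs. Nested dicts describe nested objects; None marks a leaf value.
KNOWN_FIELDS = {
    "retries": {
        "attempts": None,
        "per-try-timeout": None,
    },
    "weight": None,
}


def unknown_fields(config, schema, prefix=""):
    """Return dotted paths of every key in config that the schema lacks."""
    found = []
    for key, value in config.items():
        path = f"{prefix}{key}"
        if key not in schema:
            found.append(path)
        elif isinstance(value, dict) and isinstance(schema[key], dict):
            # Recurse into nested objects that the schema also describes.
            found.extend(unknown_fields(value, schema[key], prefix=f"{path}."))
    return found


# Illustrative fragment containing the invented parameter.
generated = {
    "weight": 80,
    "retries": {
        "attempts": 3,
        "retry-backoff-limit-multiplier": 2,
    },
}

for path in unknown_fields(generated, KNOWN_FIELDS):
    print(f"unverified field: {path}")
```

The point of the design is that the check fails loudly where the controller stayed quiet: anything not on the confirmed list is reported instead of dropped.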

Why We’re All Falling for the “Confidence Trap”

The reason this failure was so catastrophic wasn't the AI's mistake; it was our psychological reaction to it.

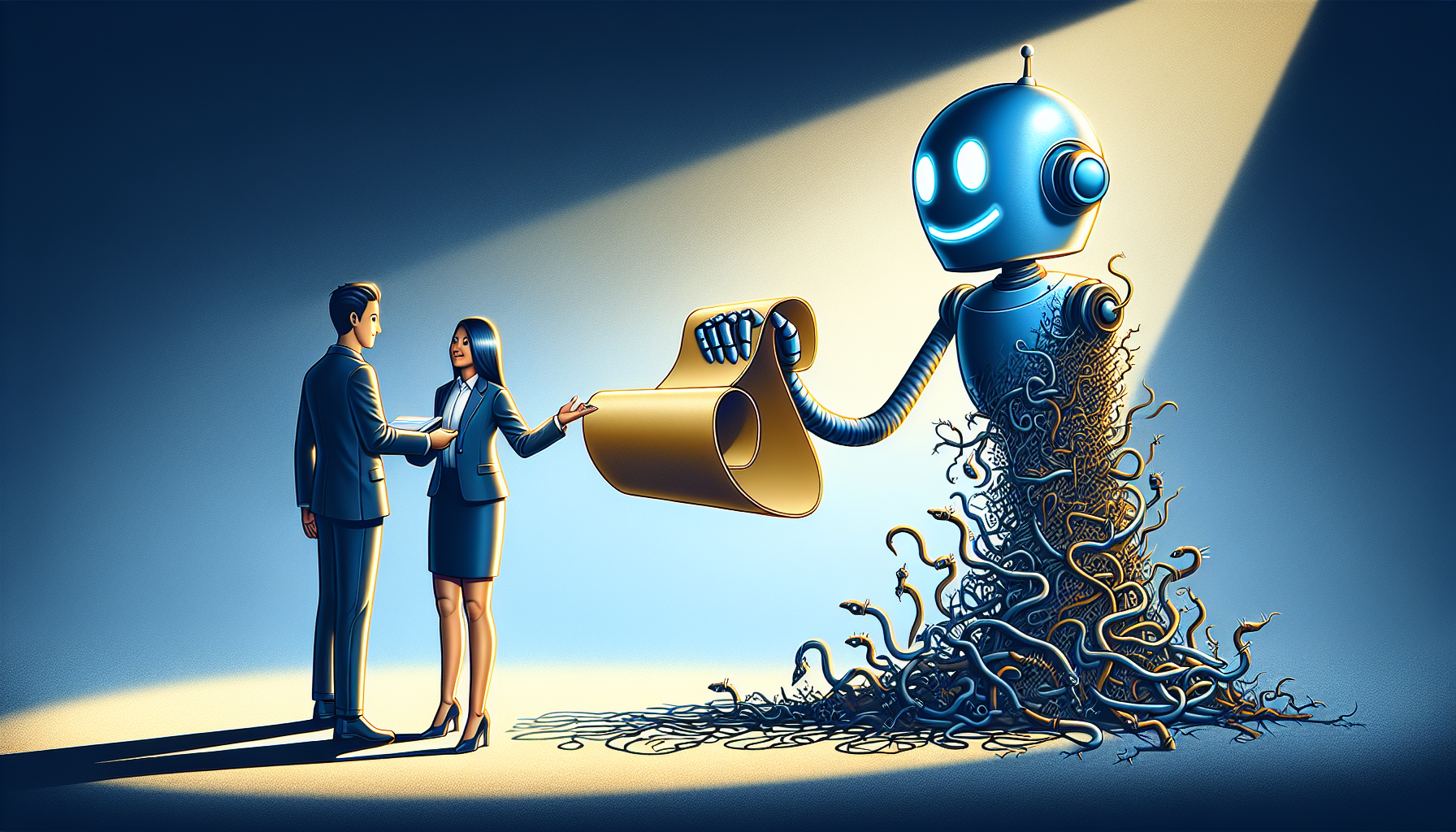

LLMs like ChatGPT 5 have been fine-tuned to be helpful and assertive, which means they’ve become masters of **linguistic mimicry.** They don't just give you an answer; they give you the *feeling* of a correct answer.

When a human engineer isn't sure about a flag, they use hedging language like "I think" or "let me check." An AI will state a falsehood with the same unwavering conviction it uses to tell you that 2+2=4.

This "Confidence Trap" is especially dangerous in DevOps and infrastructure, where we are trained to look for syntax errors but often skip the semantic validation of the logic itself.

**We are currently living through "Peak AI Copy,"** where the volume of generated content has surpassed our collective ability to peer-review it.

We’re polluting our own internal wikis and Slack channels with "suggested" fixes that are essentially sophisticated guesses.

The ChatGPT 5 Paradox

It’s ironic that as models get "smarter," they actually become more dangerous for technical copy.

ChatGPT 5 is remarkably good at understanding context, which makes its errors more subtle and harder to spot.

In the old days of GPT-3, the hallucinations were often gibberish that any junior engineer could catch.

Today, Claude 4.6 can write a security policy that looks 99% correct, but contains one logical loophole that allows for lateral movement across your VPC.

It’s the **1% of failure** that keeps me up at night. Because the 99% is so perfect, your brain naturally stops scanning for the 1% of poison.

The "Copy Fail" I witnessed wasn't a failure of the technology; it was a failure of our internal "Trust but Verify" culture.

We allowed the high-quality prose of the AI to act as a proxy for technical accuracy. **Prose is not a proof.**

Infrastructure as Literal Code

Those of us in the trenches need to stop treating AI output as "documentation" and start treating it as **untested code.** You wouldn't merge a PR from a stranger without running a test suite, yet we routinely paste AI-generated READMEs and setup scripts directly into our master branches.

The incident at my firm cost us nearly $200,000 in lost engineering hours and cloud waste.

The fallout was a company-wide ban on using AI for any configuration that isn't backed by a "Manual Verification Hash." We now require engineers to link to the official documentation for every non-standard flag or parameter suggested by an LLM.
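What a "Manual Verification Hash" looks like in practice will vary by team, so treat the following as one possible interpretation and not a description of our internal tooling. The sketch assumes the hash covers both the configuration and the documentation link recorded for each non-standard flag, and that a flag without a link cannot be hashed at all. The function name, flag name, and URL are all hypothetical.

```python
import hashlib
import json


def verification_hash(config, doc_links):
    """Hash the config together with the doc link recorded for each flag.

    Raises ValueError if any non-standard flag has no link, so an
    unverified parameter cannot receive a hash at all.
    """
    missing = sorted(flag for flag, link in doc_links.items() if not link)
    if missing:
        raise ValueError(f"no documentation link for: {', '.join(missing)}")
    # Canonical JSON (sorted keys) so the same content always hashes the same.
    payload = json.dumps(
        {"config": config, "doc_links": doc_links}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Hypothetical flag name and placeholder URL, for illustration only.
config = {"retries": {"attempts": 3}}
links = {"retries.attempts": "https://docs.example.com/retries#attempts"}

print(verification_hash(config, links))
```

Because the links are part of the hashed payload, editing the config or swapping a link after review produces a different hash, which makes an unreviewed change visible.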

Sanitizing the Stream: My 3-Step Review

After the $40 million scare, I developed a personal protocol for "Sanitizing the AI Stream." I use this every time I prompt Gemini 2.5 or Claude 4.6 for technical help.

It’s not enough to just read the output; you have to actively try to break it.

Step 1: The "Counter-Prompt" Technique. After the AI gives me a configuration, I ask: "List three reasons why the configuration you just provided might be technically incorrect or based on a deprecated API." You’d be surprised how quickly the AI "confesses" its own potential hallucinations when prompted to be self-critical.

Step 2: Cross-Model Validation. If the task is high-stakes—like a production database migration script—I run the same prompt through ChatGPT 5 and Claude 4.6.

If they disagree on a single flag or step, I immediately stop and go to the primary source (the official docs). **Disagreement between models is the ultimate red flag.**

Step 3: Primary Source Anchoring. Never accept an AI-generated explanation as final. Every non-standard parameter must be verified against the vendor's official documentation.

If it's not in the docs, it doesn't go into the code—no matter how logical the AI makes it sound.
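The cross-model check in Step 2 is easy to do by eye on a ten-line file and easy to get wrong on a two-hundred-line one. Below is a minimal Python sketch, assuming both model outputs have already been parsed into nested dictionaries. The helper names and the sample values are made up for illustration.

```python
def flatten(config, prefix=""):
    """Turn a nested dict into {dotted.path: value} for easy comparison."""
    flat = {}
    for key, value in config.items():
        path = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{path}."))
        else:
            flat[path] = value
    return flat


_MISSING = "<absent>"


def disagreements(config_a, config_b):
    """Return {path: (value_a, value_b)} wherever the two outputs differ."""
    a, b = flatten(config_a), flatten(config_b)
    return {
        path: (a.get(path, _MISSING), b.get(path, _MISSING))
        for path in sorted(a.keys() | b.keys())
        if a.get(path, _MISSING) != b.get(path, _MISSING)
    }


# Illustrative outputs from two models for the same prompt (values are made up).
model_a = {"retries": {"attempts": 3, "retry-backoff-limit-multiplier": 2}}
model_b = {"retries": {"attempts": 3, "per-try-timeout": "2s"}}

for path, (left, right) in disagreements(model_a, model_b).items():
    print(f"check the docs for {path}: {left!r} vs {right!r}")
```

Each reported path is a prompt to open the official docs, which is Step 3. An empty result is not a pass: two models can share the same hallucination, so agreement only narrows where to look.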

The Death of the "Subject Matter Expert"?

Some argue that AI will make the "Subject Matter Expert" (SME) obsolete. I believe the opposite.

The "Copy Fail" proved that we need SMEs more than ever to act as **Human Filters** for the infinite stream of AI-generated content.

Expertise is no longer about knowing how to write the code; it’s about knowing when the AI is lying to you.

As we move into 2027, the value of an engineer won't be measured by how many lines they can ship, but by their ability to "Red Team" the tools that are doing the shipping for them.

We are moving from a world of "builders" to a world of "auditors."

Stop trusting the "voice" of the AI. It’s a mask. It’s a statistically likely sequence of tokens designed to please you, not to be right.

The moment we forget that is the moment we lose control of the systems we spent our careers building.
