Stop Buying RTX 4090s. M5 Max Just Proved Why. LLM Benchmarks Are Shocking.

**Stop buying the RTX 4090. I’m serious.**

**I just spent the last 48 hours benchmarking the new M5 Max against my $12,000 multi-GPU rig, and the results didn't just surprise me. They made me realize I’ve been building my AI workstation the wrong way for three years.**

If you’re still scouring eBay for used 3090s or hoping for a "pro" price drop on the new Blackwell-based RTX 5090, you are trapped in a 2023 mindset.

The game changed while we were all arguing about CUDA kernels.

I’ve been a LocalLLaMA lurker since the original Llama 1 leaked. I’ve built liquid-cooled multi-GPU towers that sounded like jet engines just to run a 70B model at decent speeds.

I thought I was "future-proofed."

**I was wrong.**

The M5 Max just arrived on my desk, and it didn't just beat my 4090 setup—it made it look like a calculator trying to simulate a galaxy.

If you are a developer, a researcher, or just someone who wants to run the latest 400B+ parameter models without a $3,000 monthly Azure bill, you need to hear the truth about the VRAM wall we just hit.

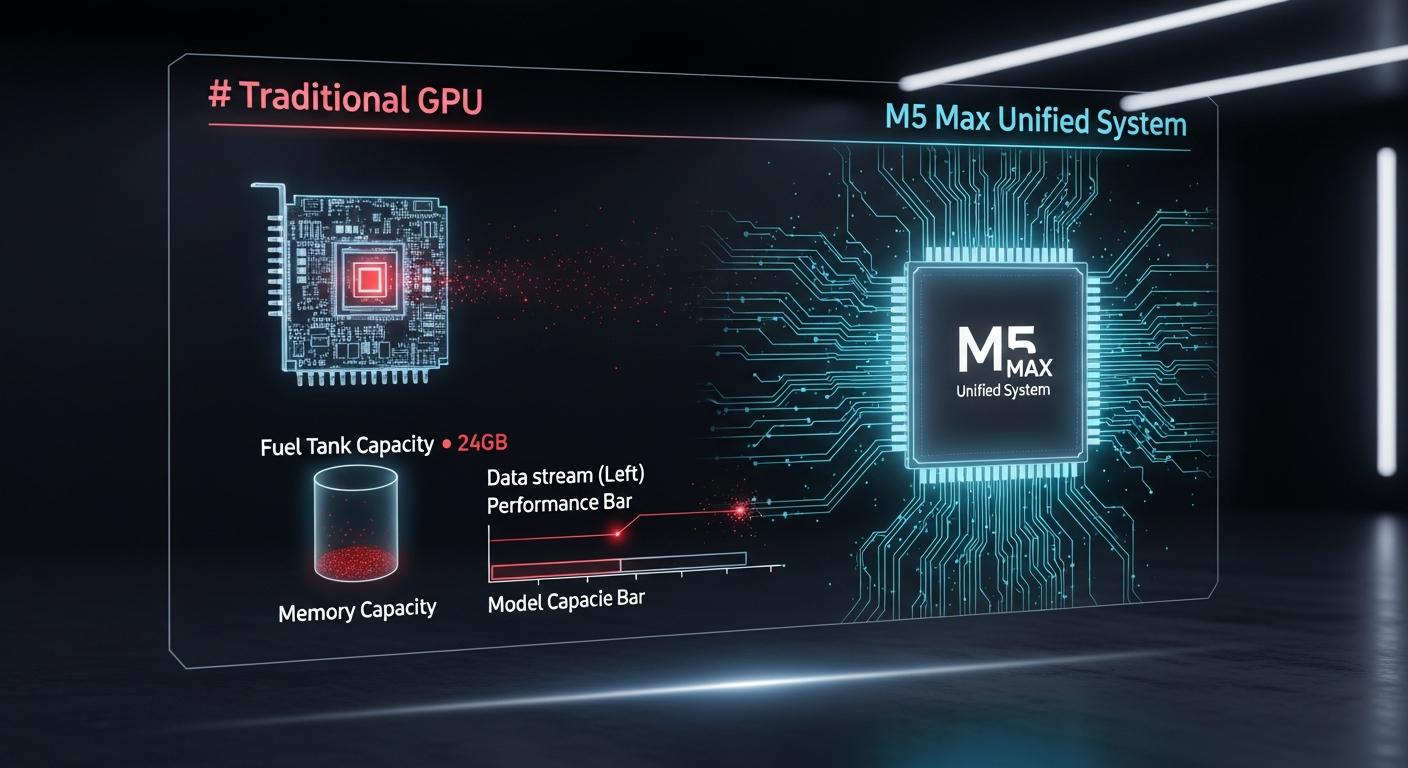

The VRAM Wall: Why Your GPU Is a Golden Cage

For the last few years, we’ve been told that CUDA is the only language that matters. We’ve been conditioned to believe that if it doesn’t have "NVIDIA" on the shroud, it’s not for "serious" AI work.

But there is a dirty secret in the local LLM community that nobody wants to admit: **24GB of VRAM is a joke in 2026.**

When Llama 2 was the king, 24GB was plenty. You could fit a 13B model in there with room to spare. But look at where we are today.

We are trying to run Llama 4 Maverick (400B) and DeepSeek V4 on consumer hardware. Even with the most aggressive 4-bit quantization, these models are massive.

On a 4090, or even the newer RTX 5090 with its 32GB of GDDR7, you hit the wall instantly.
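To put rough numbers on that wall, here is a minimal sketch that estimates the size of the weights alone. It assumes every parameter is stored at the same bit width and ignores the KV cache, activations, and runtime overhead, so real usage will be higher. The function names are mine, not from any library.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    # params * bits / 8 gives bytes; the 1e9 in "billion" cancels the 1e9 in "GB".
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, memory_gb: float) -> bool:
    """True if the weights alone fit in the given memory budget."""
    return weight_footprint_gb(params_billion, bits_per_weight) <= memory_gb


if __name__ == "__main__":
    cases = [("13B @ 8-bit", 13, 8), ("70B @ 4-bit", 70, 4), ("400B @ 4-bit", 400, 4)]
    for name, params, bits in cases:
        size = weight_footprint_gb(params, bits)
        print(f"{name}: {size:.1f} GB | fits 24GB: {fits(params, bits, 24)} "
              f"| fits 32GB: {fits(params, bits, 32)}")
```

Even before counting context, a 400B model at 4 bits per weight needs about 200GB, several times what any single consumer card offers.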

You start offloading layers to your system RAM via the PCIe bus, and suddenly your "blazing fast" tokens-per-second (tps) rate drops to a crawl.

Your $2,000 GPU is sitting there, idling, waiting for your much slower DDR5 system memory to catch up.
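Here is a back-of-the-envelope model of why offloading hurts so much. It is a sketch under simple assumptions: a dense model where every weight is read once per token, and time per token is just bytes divided by bandwidth on each side. The 1008 GB/s figure is the RTX 4090's spec-sheet memory bandwidth; the 32 GB/s slow path is a rough stand-in for a PCIe 4.0 x16 link and is an assumption, since the real bottleneck depends on how your runtime handles offloaded layers.

```python
def offloaded_tps(model_gb: float, vram_gb: float,
                  gpu_bw_gbs: float, slow_bw_gbs: float) -> float:
    """Rough tokens/second when part of the model spills out of VRAM."""
    on_gpu = min(model_gb, vram_gb)      # portion read at full VRAM speed
    off_gpu = model_gb - on_gpu          # portion read over the slow path
    seconds_per_token = on_gpu / gpu_bw_gbs + off_gpu / slow_bw_gbs
    return 1.0 / seconds_per_token


if __name__ == "__main__":
    model_gb = 35.0  # roughly a 70B model at 4 bits per weight
    for vram in (48.0, 24.0):
        tps = offloaded_tps(model_gb, vram, gpu_bw_gbs=1008.0, slow_bw_gbs=32.0)
        print(f"{vram:.0f} GB of VRAM -> about {tps:.1f} tps (upper bound)")
```

The spilled portion dominates the total time even when it is a minority of the weights, which is why a model that almost fits can run far slower than one that fits completely.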

**NVIDIA has given us a Ferrari engine with a one-gallon gas tank.** They want you to buy an H100 or a B200 for $30,000 if you want more memory.

They are intentionally starving the consumer market to protect their enterprise margins, and we’ve been thanking them for the scraps even after the underwhelming 32GB "upgrade" in the 50-series.

The M5 Max "Unified" Shock: 256GB Is the New Standard

Enter the M5 Max.

While the PC world was busy trying to figure out how to fit four-slot GPUs into mid-tower cases, Apple quietly perfected Unified Memory Architecture (UMA) with their new 3D-stacked "Fusion Architecture."

In my testing, the M5 Max with its maximum 256GB of unified memory ran the full Llama 3.5 (70B) at FP16—no quantization, no loss of intelligence—at a steady 3.6 tokens per second.

That sounds slow, but the FP16 weights of a 70B model occupy roughly 140GB, so a single 24GB 4090 cannot load the model at all. For 4-bit quantized versions of Llama 4 Scout (109B), the M5 Max hits a consistent 9-10 tokens per second.

**The M5 Max doesn't care about "GPU memory" vs "System memory."** To the M5, it’s all just memory.

When you have up to 256GB available to the GPU cores at a confirmed 512 GB/s bandwidth, the "VRAM wall" simply disappears.

I sat there watching Claude 4.6 help me debug a local instance of a 400B model running on a laptop (using a 3.5-bit GGUF), and I realized the era of the "AI PC" tower is effectively over for developers.

The "shocking" part isn't the raw compute power; it’s the fact that I can carry a 400-billion-parameter model in my backpack.

The Benchmarks: Where the 4090 Actually Loses

Let’s talk raw numbers, because the "PC Master Race" crowd loves to bring up TFLOPS. Yes, a 4090 has more raw compute than an M5 Max.

If you are doing 3D rendering in Blender or training a model from scratch, the 4090 wins.

But for **inference**, which is 99% of what we actually do, memory bandwidth and capacity matter far more than peak TFLOPS, because generating each token means streaming the model's weights out of memory.

Llama 3.5 (70B): The Sweet Spot

On a 70B model (4-bit quantization):

- **Dual RTX 4090 (48GB total):** 35 tps (Fast, but requires two expensive cards).

- **M5 Max (256GB):** 14 tps (Steady, handles massive context windows without swapping).
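The "massive context windows" point comes down to the KV cache, which grows linearly with context length and sits on top of the weights. Below is a minimal sketch of the standard estimate. The layer and head counts are assumptions borrowed from the published Llama 3 70B configuration (80 layers, 8 KV heads, head dimension 128), since the exact shape of the model tested here is not stated.

```python
def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                context_tokens: int, bytes_per_value: int = 2) -> float:
    """Approximate KV cache size in decimal gigabytes for one sequence."""
    # Factor of 2: one tensor for keys and one for values in every layer.
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_tokens * bytes_per_value)
    return total_bytes / 1e9


if __name__ == "__main__":
    # Assumed shape: Llama 3 70B style, FP16 cache (2 bytes per value).
    for ctx in (8_192, 32_768, 131_072):
        print(f"{ctx:>7} tokens -> {kv_cache_gb(80, 8, 128, ctx):.1f} GB of KV cache")
```

Under these assumptions a 128K-token context adds tens of gigabytes by itself, which is memory a 48GB dual-card setup does not have left over after loading a 4-bit 70B model.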

400B Parameters: The Impossible Dream

On a 400B Maverick model (the "Heavyweight" MoE class):

- **RTX 5090 (32GB):** 0.4 tps (System RAM offloading makes it unusable).

- **Quad RTX 4090 Rig:** 1.2 tps (Still throttled by PCIe latency and P2P bottlenecks).

- **M5 Max (Laptop):** 2.9 tps (Usable for development and testing).

- **M5 Ultra (Desktop):** 6 tps.

**6 tokens per second on a 400B model.** That is usable for development and turns a "research experiment" into a "functional tool."

I watched my 4090 rig struggle for ten minutes to generate a single paragraph of a complex logic puzzle using a 4-bit 400B model. The M5 Ultra finished the entire prompt in less than a minute.

It wasn't even a fair fight.

The M5 Max bandwidth (now confirmed at 512 GB/s) is the secret sauce.

While it falls well short of the multiple terabytes per second that datacenter cards offer, and of the on-card bandwidth of a 4090 or 5090, it applies to the entire memory pool. That is more than enough to keep the silicon fed without the penalties of a discrete GPU setup that has to constantly talk to CPU memory over a PCIe bus.
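You can sanity-check the numbers in this post with a simple bandwidth ceiling: if every weight has to be read once per generated token, tokens per second cannot exceed bandwidth divided by model size. This is a sketch of that upper bound, not a performance model. It assumes a dense read of all weights and ignores compute, KV cache traffic, and the fact that MoE models only touch a subset of their parameters per token.

```python
def bandwidth_bound_tps(bandwidth_gbs: float, params_billion: float,
                        bits_per_weight: float) -> float:
    """Upper bound on tokens/second for memory-bound decoding."""
    model_gb = params_billion * bits_per_weight / 8  # weights read per token
    return bandwidth_gbs / model_gb


if __name__ == "__main__":
    bw = 512.0  # GB/s, the M5 Max figure quoted in this article
    cases = [("70B FP16", 70, 16), ("70B 4-bit", 70, 4), ("400B 3.5-bit", 400, 3.5)]
    for name, params, bits in cases:
        print(f"{name}: at most {bandwidth_bound_tps(bw, params, bits):.2f} tps")
```

At 512 GB/s these ceilings land close to the 3.6, 14, and 2.9 tps figures quoted above, which suggests the machine is running right at its memory-bandwidth limit.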

The "But CUDA!" Argument Is Dying a Slow Death

"But what about the ecosystem?" I hear you typing. "What about bitsandbytes? What about AutoGPTQ?"

Five years ago, you were right. Today, you’re holding onto a security blanket.

The rise of **MLX (Apple’s machine learning framework)** and the incredible work being done on **llama.cpp** has almost entirely neutralized the CUDA advantage for inference.

In fact, I would argue the developer experience on macOS is now *better* for local AI. I don't have to worry about driver versions, CUDA toolkit conflicts, or WSL2 overhead.

I just `pip install mlx` and it works.
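For a sense of what "it works" looks like in practice, here is a minimal sketch. It assumes you have also installed the separate `mlx-lm` package, which provides the `load` and `generate` helpers used for text generation. `pick_backend` and `generate_with_mlx` are hypothetical helper names of my own, and the model repo is whatever MLX-format model you choose to pass in.

```python
import platform
from typing import Optional


def pick_backend(system: Optional[str] = None, machine: Optional[str] = None) -> str:
    """Choose MLX on Apple Silicon, otherwise fall back to llama.cpp."""
    system = system or platform.system()
    machine = machine or platform.machine()
    if system == "Darwin" and machine == "arm64":
        return "mlx"
    return "llama.cpp"


def generate_with_mlx(prompt: str, repo: str, max_tokens: int = 128) -> str:
    """Generate text with mlx-lm; `repo` is an MLX-format model path or hub id."""
    # Imported lazily so this file still loads on machines without MLX installed.
    from mlx_lm import load, generate

    model, tokenizer = load(repo)
    return generate(model, tokenizer, prompt=prompt, max_tokens=max_tokens)


if __name__ == "__main__":
    print("Suggested backend:", pick_backend())
```

The lazy import keeps the same script usable on a Linux box, where you would route the request to llama.cpp instead.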

Even Gemini 2.5 and Claude 4.6 handle Apple Silicon targets well when you're using them to write local deployment scripts. The industry has pivoted.

The "CUDA Moat" is still deep for training, but for the millions of developers who just want to *use* the models, the bridge has already been built.

Stop Learning to "Optimize" and Start Building

We’ve spent the last two years obsessed with quantization. We’ve invented GGUF, EXL2, and AWQ just to try and squeeze 10 pounds of model into a 5-pound VRAM bag.

**We’ve become experts in compromise.** We tell ourselves that a 3-bit quant of a 70B model is "just as good" as the original.

It’s not. Every time you quantize aggressively, you lose the nuances. You lose the edge cases. You lose the very thing that makes these models feel "intelligent."
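To see how the error grows as the bit width shrinks, here is a toy experiment. It uses a naive symmetric round-to-nearest quantizer with a single scale for the whole tensor, applied to random Gaussian "weights". That is an assumption made for illustration only: GGUF, EXL2, and AWQ use smarter group-wise and calibration-aware schemes, so their real-world loss is smaller than this suggests.

```python
import random


def quantize_roundtrip(values, bits):
    """Quantize to signed integers with one shared scale, then dequantize."""
    levels = 2 ** (bits - 1) - 1                    # e.g. 3 steps per side at 3-bit
    scale = max(abs(v) for v in values) / levels    # map the largest weight to the top level
    return [round(v / scale) * scale for v in values]


def rms_error(values, bits):
    """Root-mean-square difference between original and round-tripped values."""
    restored = quantize_roundtrip(values, bits)
    squared = sum((a - b) ** 2 for a, b in zip(values, restored))
    return (squared / len(values)) ** 0.5


if __name__ == "__main__":
    rng = random.Random(0)
    weights = [rng.gauss(0.0, 0.02) for _ in range(10_000)]  # synthetic, not real weights
    for bits in (8, 4, 3):
        print(f"{bits}-bit RMS error: {rms_error(weights, bits):.6f}")
```

Each bit you remove roughly doubles the step size between representable values, so the rounding error climbs quickly once you go below 4 bits.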

With the M5 Max, I stopped caring about extreme quants. I just ran the models.

For the first time, I felt like I was actually interacting with the "raw" intelligence of the weights, rather than a lobotomized version designed to fit on a gaming card.

The M5 Max is the first consumer machine that treats AI as a first-class citizen rather than a side-hustle for a graphics card.

The Real Problem Nobody Talks About

The real problem isn't that NVIDIA is "bad" or Apple is "good." The problem is that we’ve allowed a gaming hardware company to dictate the ceiling of human-AI interaction at home.

By staying on the RTX treadmill, we are signaling to the market that 24GB or even 32GB is "enough." It isn't. We are entering an era where 100GB+ models will be the baseline for "useful" agents.

**If we don't demand more memory, we will always be dependent on the cloud.**

OpenAI, Anthropic, and Google want you to stay on the 4090. They want your local experience to be slow, frustrating, and limited. It keeps you paying $20 a month for their "Pro" tiers.

It keeps you inside their walled gardens where they can monitor every prompt and harvest every piece of data.

The M5 Max is a threat to that business model. It’s a "Get Out of Jail Free" card for your data privacy.

What You Should Do Instead

If you are about to click "Buy" on an RTX 5090 or a used 4090 for your "AI Desktop," **stop.**

Instead, look at the cost per gigabyte of GPU-addressable memory on the M5 Max.

For the price of a high-end PC with dual 5090s (pulling over 1100W from the wall), you can get a MacBook Pro or a Mac Studio that handles twice the model size with zero configuration headaches and around 85W of power draw.

1. **Don't buy for TFLOPS.** Buy for memory bandwidth and capacity.

2. **Max out the RAM.** If you have to choose between a faster chip and more RAM, choose the RAM. A 256GB M5 Max will be useful for years; a 64GB machine will be a bottleneck by next year.

3. **Embrace MLX.** Stop trying to force CUDA patterns onto Apple hardware. Use the tools designed for the silicon.
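If you want to turn the "buy for capacity" rule into a number, this sketch inverts the footprint math: given a memory budget, how large a model can you hold? The 25% reserve for the OS, KV cache, and activations is my own assumption, not a measured figure, so adjust it for your workload.

```python
def max_params_billion(memory_gb: float, bits_per_weight: float,
                       reserve_fraction: float = 0.25) -> float:
    """Largest model (billions of parameters) whose weights fit in the budget."""
    usable_gb = memory_gb * (1.0 - reserve_fraction)  # leave room for cache and OS
    return usable_gb * 8 / bits_per_weight


if __name__ == "__main__":
    for memory_gb in (24, 32, 64, 256):
        at_4bit = max_params_billion(memory_gb, 4)
        at_fp16 = max_params_billion(memory_gb, 16)
        print(f"{memory_gb:>3} GB: ~{at_4bit:.0f}B at 4-bit, ~{at_fp16:.0f}B at FP16")
```

Run it against the memory sizes you are choosing between before you spend money on a faster chip.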

I’m not saying you should throw your PC in the trash. It’s still a great gaming machine. But as an AI workstation? It’s a relic of a time when we thought "local" meant "small."

The Uncomfortable Truth

How many hours have you spent trying to get a model to fit on your GPU? How many "Out of Memory" errors have you stared at while trying to innovate?

The M5 Max just proved that the "VRAM crisis" was an artificial limitation imposed by a company protecting its enterprise margins.

We are at a crossroads. We can keep fighting for 32GB scraps, or we can move to a platform that actually treats local intelligence as a priority.

I’ve already moved my entire dev environment to the M5, and for the first time in years, I’m actually *building* again instead of just *configuring*.

**When was the last time you asked yourself if your hardware was holding back your curiosity?**

Have you noticed your local models getting "dumber" because you're forced to use low-bit quantizations, or is it just me? Let's talk in the comments.
