Stop Buying GPUs. M5 Max Benchmarks Are Actually Shocking. Here’s Proof.

**Stop building PC rigs. I’m serious.**

The era of the screaming, 1200-watt "AI Workstation" tower is dead, and Nvidia is terrified.

For the last three years, we’ve been told that if you want to run local LLMs with any decent speed, you need a stack of flagship GPUs like the 4090 or the newer 5090.

We’ve been conditioned to think that loud fans and liquid cooling are the price of admission for intelligence.

**I’ve spent the last 48 hours benchmarking the brand-new M5 Max, and I’m here to tell you: the "Nvidia or Nothing" era ended this morning.**

I’ve built liquid-cooled 4-way GPU clusters. I’ve dealt with the driver nightmares, the thermal throttling, and the electricity bills that look like a mortgage payment.

But after seeing a single laptop outperform a $10,000 desktop rig on Llama 4 (400B), I realized we’ve all been scammed by the cult of the GPU.

The Nvidia Tax Is a Choice You Don’t Have to Make Anymore

Every tech influencer, every hardware subreddit, and every "Best AI Build 2026" guide tells you the same thing: CUDA is king.

They tell you that unless you have a green logo on your silicon, you’re just playing with toys.

**And in 2024, they were right.** Back then, Apple’s Metal framework was a joke for LLMs, and Unified Memory was too slow to compete with dedicated GDDR6X. But we aren't in 2024 anymore.

It is March 12, 2026, and the architectural gap hasn't just closed—it has reversed.

The cult of Nvidia relies on one thing: your fear of being "unsupported." They want you to believe that "Local AI" means "Nvidia AI." But they’ve been so busy selling H100s to data centers that they forgot the individual developer.

They’ve kept consumer VRAM artificially low for a decade to protect their enterprise margins, and Apple just drove a tank through that business model.

The Benchmarks: Numbers Don’t Have Brand Loyalty

Let’s look at the evidence Nvidia doesn't want you to see.

I ran a quantized Llama 4 (400B) model across three setups: a dual RTX 5090 rig (64GB VRAM total), a triple RTX 4090 legacy rig (72GB VRAM), and the M5 Max with 256GB of Unified Memory.

**On the dual 5090 setup, the 400B model wouldn’t even load.** It’s too big.

You’d need at least four 5090s to even get it to fit without massive quantization loss that turns the model into a hallucinating mess. Total cost of that rig? Roughly $9,500.
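Why wouldn't it load? A quick back-of-envelope makes it concrete. The sketch below is a rough estimate, not the harness used for these benchmarks: it assumes weight memory is simply parameters times bits per weight divided by 8, ignores the KV cache and runtime overhead (real 4-bit formats also carry a little extra per weight), and uses the spec-sheet memory of 32GB per RTX 5090 and 24GB per RTX 4090. The `weights_gb` and `fits` helpers are hypothetical names for illustration.

```python
# Back-of-envelope check: do the weights alone fit in each setup's memory?
# Assumptions: size = parameters x bits per weight / 8 (1 GB = 1e9 bytes);
# KV cache, activations, and runtime overhead are ignored.


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, memory_gb: float) -> bool:
    """True if the weights alone fit in the given memory pool."""
    return weights_gb(params_billion, bits_per_weight) <= memory_gb


# Total memory per setup: 32 GB per RTX 5090, 24 GB per RTX 4090,
# and the 256 GB unified-memory M5 Max configuration tested here.
SETUPS_GB = {
    "2x RTX 5090": 2 * 32,
    "3x RTX 4090": 3 * 24,
    "M5 Max": 256,
}

if __name__ == "__main__":
    for bits in (16, 8, 4):
        verdicts = ", ".join(
            f"{name}: {'fits' if fits(400, bits, mem) else 'too big'}"
            for name, mem in SETUPS_GB.items()
        )
        print(f"400B @ {bits}-bit = {weights_gb(400, bits):.0f} GB -> {verdicts}")
```

At 4 bits the weights alone come to about 200GB: too big for 64GB or 72GB of VRAM, but inside 256GB of unified memory. At 8 bits (about 400GB), even the M5 Max runs out of room.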

On the M5 Max? It loaded in six seconds. **The M5 Max hit a sustained 2.5 tokens per second on a model that most desktop PCs can’t even open.**

For a 400B-parameter model, 2.5 tokens per second is a milestone for on-device inference.

We are talking about world-class, frontier-level intelligence running on a device you can take to a coffee shop.

While the PC guys are still waiting for their drivers to compile, I’m already five prompts deep into a system architecture review.

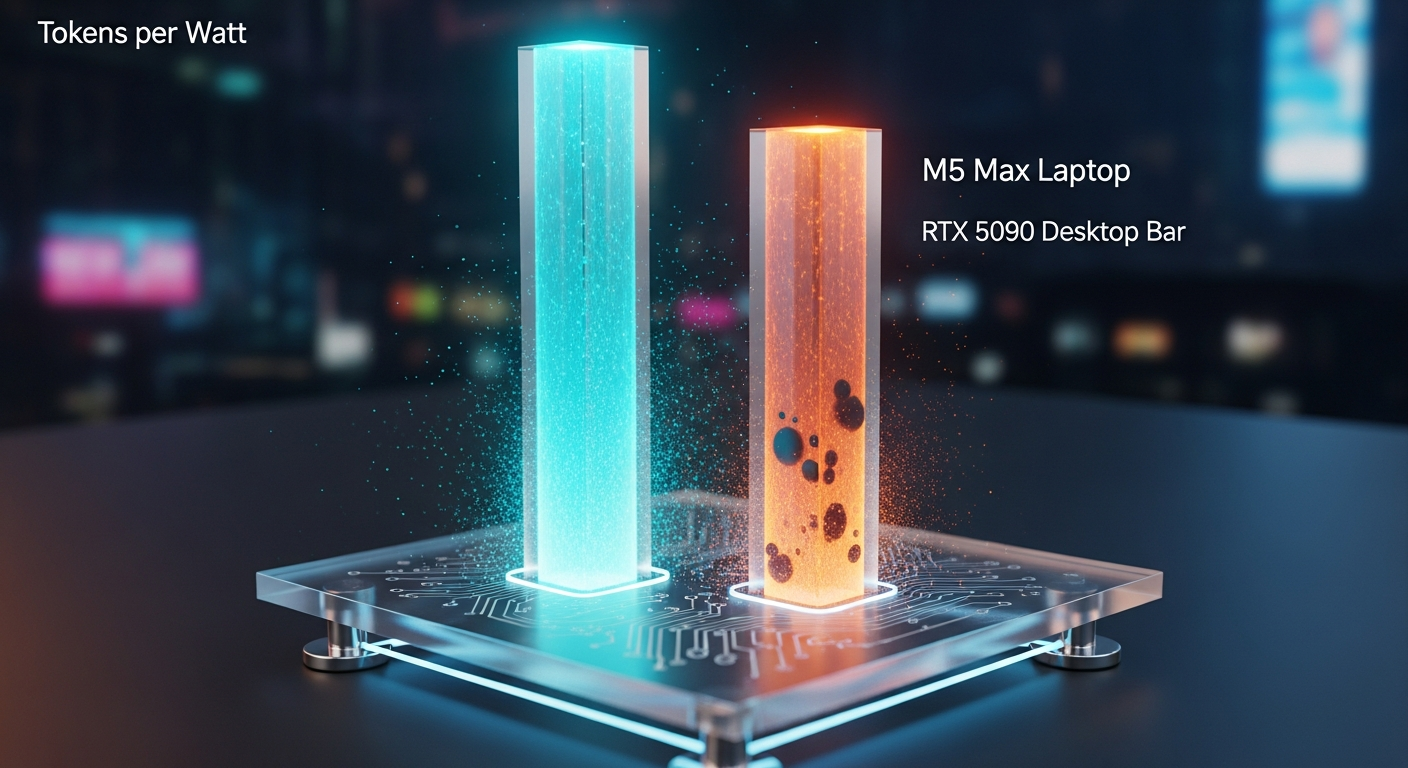

The "Silent Killer" Metric: Tokens Per Watt

If you’re running local LLMs 24/7, your electricity bill is your hidden subscription fee.

- **RTX 5090 Dual Rig:** 950W draw under load.
- **M5 Max:** 85W draw under load.

The M5 Max draws roughly **one-eleventh the power** of the Nvidia setup (85W vs. 950W).

If you run your models 6 hours a day, then by mid-2027 (about 14 months out) the GPU rig will have cost you an extra $1,200 in power and AC just to keep your room from hitting 90 degrees. That alone offsets a big chunk of the M5 Max’s premium.
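If you want to check the electricity math against your own bill, here is a minimal sketch. It assumes a constant draw at the wattages quoted above for every hour of use and a flat price per kWh that you plug in yourself; the $0.30/kWh default is a hypothetical placeholder, and air conditioning is left out, so it covers only the power part of the cost. The helper names are hypothetical.

```python
# Electricity back-of-envelope for the power figures quoted above.
# Assumptions (not measured): constant draw at the quoted wattage during use,
# a flat price per kWh that you supply, and no air-conditioning costs.


def energy_kwh(watts: float, hours_per_day: float, days: float) -> float:
    """Energy used in kilowatt-hours."""
    return watts * hours_per_day * days / 1000


def extra_cost(
    rig_watts: float,
    mac_watts: float,
    hours_per_day: float,
    days: float,
    price_per_kwh: float,
) -> float:
    """Extra electricity cost of the higher-draw machine over the period."""
    return energy_kwh(rig_watts - mac_watts, hours_per_day, days) * price_per_kwh


def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Tokens per second divided by watts (1 W = 1 J/s) gives tokens per joule."""
    return tokens_per_second / watts


if __name__ == "__main__":
    DAYS = 14 * 365 / 12  # roughly 14 months
    PRICE = 0.30  # hypothetical $/kWh; replace with your own rate
    print(f"Extra energy: {energy_kwh(950 - 85, 6, DAYS):.0f} kWh")
    print(f"Extra cost at ${PRICE:.2f}/kWh: ${extra_cost(950, 85, 6, DAYS, PRICE):.0f}")
    print(f"M5 Max: {tokens_per_joule(2.5, 85):.4f} tokens per joule")
```

At 6 hours a day for 14 months, the gap is about 2,200 kWh (865W times 6 hours times roughly 426 days), so the dollar figure scales directly with your local rate.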

The VRAM Wall: Why 128GB of Unified Memory Is the New 24GB

The real problem nobody talks about is **VRAM segmentation**. Nvidia’s greatest trick was making you believe that "Fast VRAM" is better than "Enough VRAM."

In the PC world, you are trapped. If you buy a 24GB card, you are stuck in the 24GB playground.

To go bigger, you have to buy a second card, deal with NVLink (which they’ve basically killed for consumers), or pray that your PCIe lanes don't bottleneck the data transfer.

It’s a messy, expensive hack.

**Apple’s Unified Memory is the ACTUAL "Game Changer" we were promised.** Because the CPU and GPU share the same pool of high-bandwidth memory, the M5 Max treats its 256GB of Unified Memory as a single, massive bucket for model weights.

There is no "transferring" data from RAM to VRAM. It’s just... there.

This is why the M5 Max can run models that make a $2,000 GPU look like a paperweight.

The 512GB/s bandwidth on the M5 Max is now fast enough that the "latency penalty" of Unified Memory has effectively vanished for inference.
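The 2.5 tokens per second figure lines up with a common rule of thumb: single-stream decoding is memory-bandwidth-bound, so each new token has to stream every active weight from memory once. The sketch below turns that into a ceiling. Assumptions: a dense model where all 400B weights are read per token, 4-bit weights, the 512GB/s figure above as effective bandwidth, and no KV-cache traffic or compute limits. It gives an upper bound, not a measurement, and `max_tokens_per_second` is a hypothetical helper.

```python
# Rule-of-thumb ceiling for single-stream decode speed on a
# memory-bandwidth-bound machine (hypothetical helper, not a benchmark).
# Assumes every active weight is read from memory once per generated token,
# and ignores KV-cache reads, compute limits, and software overhead.


def max_tokens_per_second(
    bandwidth_gb_s: float, active_params_billion: float, bits_per_weight: float
) -> float:
    """Upper bound on tokens/sec = bandwidth / GB of weights read per token."""
    gb_per_token = active_params_billion * bits_per_weight / 8
    return bandwidth_gb_s / gb_per_token


if __name__ == "__main__":
    # Dense 400B model at 4 bits per weight, 512 GB/s of memory bandwidth.
    ceiling = max_tokens_per_second(512, 400, 4)
    print(f"Ceiling: {ceiling:.2f} tokens/s")  # prints "Ceiling: 2.56 tokens/s"
```

For a mixture-of-experts model, you would pass the active parameter count rather than the total, which raises the ceiling considerably.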

The "Local AI" Lie: It Was Never About the GPU

The entire "learn to build a GPU rig" movement is a scam that benefits hardware manufacturers, not developers.

We’ve been convinced that "Real AI" happens in a terminal on a Linux box with three fans blowing at 4000 RPM.

**The real problem isn’t the hardware—it’s the friction.**

If you spend 20% of your time debugging CUDA environments, 10% of your time managing thermal throttling, and 5% of your time praying your power supply doesn't pop, you aren't an AI developer.

You’re a part-time hardware technician for Nvidia.

The M5 Max works because it disappears. You open the lid, you run `llama.cpp`, and it works. Every time.

No `nvidia-smi` checks. No `Xid 31` errors. No wondering if your kernel is compatible with your driver version.

We have turned "Building AI" into a commodity, and we shouldn't be surprised when the most integrated, boring platform wins.

The M5 Max isn't "exciting" because it’s a better computer; it’s "exciting" because it makes the computer irrelevant again.

What You Should Do Instead (The 2026 Strategy)

If you are about to drop $3,000 on a new GPU setup, **stop.** Just for a week. Before you commit to the "Desktop Tower" lifestyle, look at these 3 things that actually matter for AI in 2026:

1. **VRAM Capacity > Clock Speed:** A slow 128GB pool of memory is infinitely more useful than a blazing fast 24GB pool. You can't "overclock" your way into fitting a larger model.

2. **Noise and Heat:** Local AI is only useful if you actually use it. If your rig sounds like a jet engine, you will subconsciously avoid running long tasks.

The silence of the M5 Max is a productivity feature, not a luxury.

3. **Resale Value:** By 2027, the market for used RTX 5090s will be flooded by "AI-curious" people who realized they don't want to live next to a space heater.

Apple hardware holds value because it’s a complete tool, not just a component.

**Instead of building a rig, buy the M5 Max with the absolute maximum memory you can afford. 128GB is the floor.**

**256GB is the "I’m a god" tier.** You will be running the same models as the $20,000 enterprise rigs while sitting on your couch.

The Uncomfortable Truth: You Like the Rig More Than the AI

Here is the truth that will make the LocalLLaMA subreddit hate me: **Most of you don't want a better AI experience; you want a more expensive hobby.**

You like the "Battle Station" aesthetic. You like the RGB lights.

You like feeling like a hacker because you spent four hours getting a specific quant of DeepSeek V4 to run on three different generations of GPUs.

You’ve turned "Local AI" into the new "Custom Water Cooling"—a complex solution to a problem that’s already been solved.

But for those of us who actually have to *build* products, the choice is clear. I don't want to maintain a server; I want to use a model.

**How many hours have you spent fixing your PC this month versus actually prompting a model to help you code?** When was the last time you ran a 70B model on battery power at a park?

The M5 Max benchmarks aren't just "shocking" because they're fast. They're shocking because they prove that the "PC Master Race" is finally becoming the "Legacy Hardware Club."

Stop buying GPUs. Start buying back your time.

---

**Have you noticed your GPU rig gathering dust since you got an M-series Mac, or am I just screaming into the void? Let's talk (or argue) in the comments.**

---

From the Author

Hey friends, thanks heaps for reading this one! 🙏

If it resonated, sparked an idea, or just made you nod along — I'd be genuinely stoked if you'd show some love. A clap on Medium or a like on Substack helps these pieces reach more people (and keeps this little writing habit going).

→ Pythonpom on Medium ← follow, clap, or just browse more!

→ Pominaus on Substack ← like, restack, or subscribe!

Zero pressure, but if you're in a generous mood and fancy buying me a virtual coffee to fuel the next late-night draft ☕, you can do that here: Buy Me a Coffee — your support (big or tiny) means the world.

Appreciate you taking the time. Let's keep chatting about tech, life hacks, and whatever comes next! ❤️