Sora 2 megathread (part 3) - A Developer's Story

OpenAI Just Dropped Sora — and Hollywood Should Be Terrified

I recently watched a 20-second AI-generated video that made me cancel my stock footage subscription.

Not because it was perfect — but because it was 90% as good as what I'd pay $299 for, and it took 30 seconds to create.

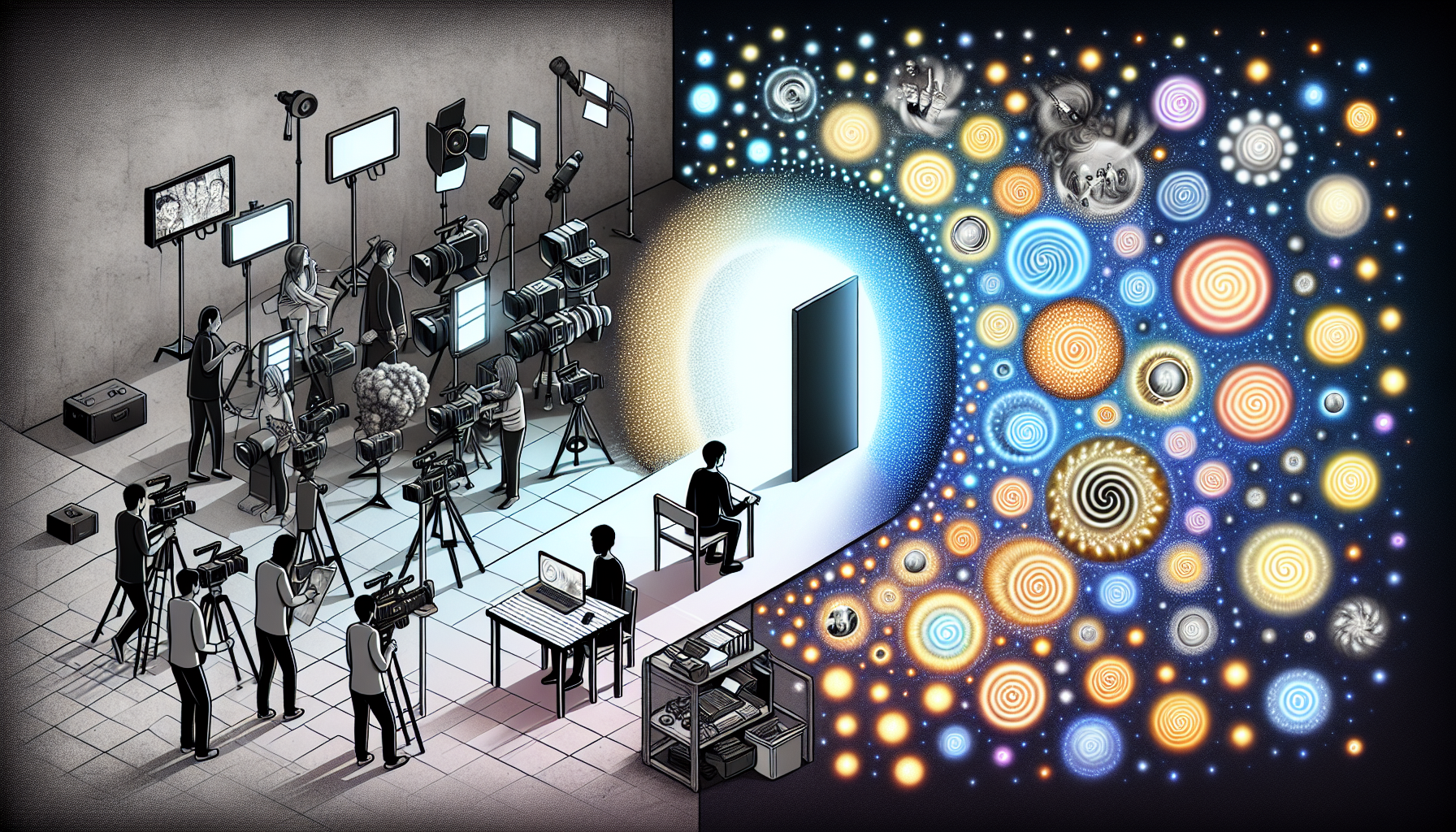

OpenAI's Sora has arrived, and after spending time deep in the community megathreads, I'm convinced we're witnessing the death of an entire creative industry.

The reactions range from euphoric to apocalyptic, and honestly? Both camps might be right.

The $20 Revolution Nobody Saw Coming

Here's what actually happened: OpenAI didn't just release a video generator. They released a creativity democratizer at a price point that makes Adobe look like highway robbery.

For $20 a month, you get 50 videos at 720p. That's less than the cost of a single stock video clip on Shutterstock.

The math is so absurd it feels like a pricing error — until you realize OpenAI isn't competing with stock footage sites. They're replacing them.
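If you want to sanity-check that math yourself, here's a minimal sketch in Python. It uses only the $20 and 50-video figures quoted above; the function name `cost_per_video` is made up for illustration and isn't part of any OpenAI tooling.

```python
def cost_per_video(monthly_price: float, videos_per_month: int) -> float:
    """Return the effective price of one generated video on a flat monthly plan."""
    if videos_per_month <= 0:
        raise ValueError("videos_per_month must be positive")
    return monthly_price / videos_per_month


# Figures taken from the plan described above: $20/month for 50 videos.
plus_cost = cost_per_video(20.0, 50)
print(f"${plus_cost:.2f} per video")  # prints "$0.40 per video"
```

Swap in whatever you currently pay per stock clip and the gap speaks for itself.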

The specs are deceptively simple. Videos up to 20 seconds. Resolution capped at 720p for Plus users.

No explicit content. No recognizable faces. These aren't limitations — they're guardrails on a tool that's already too powerful for its own good.

But here's what the specs don't tell you: Sora understands physics in a way that previous generators didn't. Water actually flows like water. Fabric moves like fabric. Light bounces off surfaces correctly.

One user in the megathread posted a comparison between Sora and Runway ML, and the difference was jarring, like comparing a PlayStation 2 game to Unreal Engine 5.

The Feature That Changes Everything

Storyboard Mode: The Secret Weapon

Buried in the interface is something called "Storyboard" — and it's the feature that made me realize traditional video production is dead.

You can now sequence multiple prompts into a coherent narrative. Think of it like building a film shot by shot, except each shot takes 30 seconds to generate instead of 3 hours to shoot.

Users are already creating mini-documentaries, product demos, and music videos that would have required a $50,000 production budget just a year or two ago.

The Remix Revolution

The remix feature is equally insane. Upload any video — your iPhone footage, a clip from your security camera, whatever — and Sora will reimagine it.

One developer uploaded boring screencasts and turned them into cinematic product tours. Another turned their kid's birthday party into a Wes Anderson film.

The implications are staggering. Every piece of video content ever created just became raw material for AI transformation.

Extended Generations: The 5-Minute Breakthrough

While individual clips cap at 20 seconds, the community has already figured out the workaround.

By chaining generations with consistent prompts and using the "extend" feature, creators are producing 5-minute narratives. It's janky, sure — but it works.
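To get a feel for how much chaining that takes, here's a rough back-of-the-envelope sketch in Python. The 20-second cap and the 5-minute target come from the numbers above; the `overlap_seconds` parameter is purely my assumption (I don't know how much footage, if any, an extended clip re-uses from the previous one), and `clips_needed` is a hypothetical helper, not a Sora feature.

```python
import math


def clips_needed(target_seconds: float,
                 clip_seconds: float = 20.0,
                 overlap_seconds: float = 0.0) -> int:
    """Estimate how many chained clips cover target_seconds of footage.

    overlap_seconds is an assumed amount of footage each new clip shares
    with the previous one; 0 means clips are simply placed back to back.
    """
    if clip_seconds <= overlap_seconds:
        raise ValueError("clip_seconds must be longer than overlap_seconds")
    if target_seconds <= clip_seconds:
        return 1
    # The first clip contributes its full length; every later clip only
    # adds the part that does not overlap with its predecessor.
    new_footage_per_clip = clip_seconds - overlap_seconds
    remaining = target_seconds - clip_seconds
    return 1 + math.ceil(remaining / new_footage_per_clip)


print(clips_needed(5 * 60))                       # 15
print(clips_needed(5 * 60, overlap_seconds=2.0))  # 17
```

So a 5-minute piece is at least 15 generations with no overlap, and more once you account for retries and shared frames, which is where the jank comes from.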

One filmmaker in r/OpenAI posted a 3-minute horror short created entirely in Sora. Was it Oscar-worthy? No.

Was it better than 80% of YouTube content? Absolutely.

The Panic in Creative Subreddits

"My Job Just Became Obsolete"

The meltdown in r/videography and r/AfterEffects is real, and it's justified.

Stock footage creators are the first casualties. Why would anyone pay $200 for generic B-roll when Sora can generate exactly what you need for $0.40?

Motion graphics artists are next — Sora handles particle effects, transitions, and abstract visuals better than most junior After Effects users.

But here's the contrarian take that's getting buried in the panic: **Sora doesn't replace creativity — it replaces execution.**

The New Creative Hierarchy

What's emerging is a new creative class structure:

**Winners**: Directors, writers, concept artists — anyone whose value is in the idea, not the implementation. These people just got a $1 million production studio for $20/month.

**Losers**: Technical specialists whose only skill is operating software. If your value prop is "I know how to use Premiere Pro," you're in trouble.

**Adapters**: Smart creators who are already using Sora as a rapid prototyping tool. Pitch decks with actual video mockups. Storyboards that move. Concepts that can be tested before spending real money.

Why Competitors Should Be Terrified

The Data Moat Nobody Can Cross

Here's what people don't understand about OpenAI's advantage: it's not the technology — it's the feedback loop.

Millions of users are now training Sora with every generation. Each prompt teaches the model what humans actually want. Each remix shows it how to improve.

Runway, Pika, and Stable Video Diffusion don't have this scale of human feedback. They're competing against a model that's learning from millions of creative decisions per day.

By the time competitors catch up to today's Sora, OpenAI will be two generations ahead. It's the same playbook they ran with ChatGPT, and it's unstoppable.

The Platform Play

OpenAI isn't just building a video generator — they're building a creative operating system. The API is coming. When it does, every app becomes a video app.

Notion documents that auto-generate explainer videos. Figma designs that animate themselves. GitHub repos that create their own demos.

This isn't speculation — developers in the megathread are already building wrapper apps using screen recording hacks.

The demand is so intense that a black market for Sora API access will probably emerge if OpenAI doesn't move fast.

The Dark Side Nobody Wants to Discuss

The Deepfake Nightmare

OpenAI's safety filters are impressive — no recognizable faces, no explicit content, automatic watermarking. But let's be real: these are speed bumps, not walls.

The community has already found workarounds. Generate a face that's 90% similar to a celebrity. Use video-to-video to transform existing footage. Chain multiple AI tools to wash out the watermarks.

Where there's a will, there's a way, and bad actors have plenty of will.

The Creativity Crisis

Here's my unpopular opinion: Sora might actually make content worse.

When everyone can generate professional-looking videos, the bar for "good enough" drops to the floor. We're about to drown in an ocean of mediocre AI content.

The same thing happened with writing when ChatGPT launched — suddenly everyone was a "content creator," and quality plummeted.

The paradox is real: tools that democratize creativity often homogenize it. When everyone uses the same AI, everything starts looking the same.

What Happens Next

The Next 6 Months

Based on the community feedback and OpenAI's track record, here's what's coming:

**API Release (March 2025)**: Developers are chomping at the bit. OpenAI knows this. Expect a limited API beta within 90 days.

**Resolution Upgrade (April 2025)**: 4K is already possible — OpenAI is just managing compute costs. As efficiency improves, resolution caps will lift.

**Longer Generations (June 2025)**: The 20-second limit is artificial. Once the infrastructure scales, expect 60-second generations.

**Audio Integration (Fall 2025)**: This is the holy grail. Video with synchronized speech and music. When this happens, YouTube changes forever.

The 5-Year Disruption

By 2030, I predict:

- **Stock footage sites**: Dead or pivoted to AI curation
- **Entry-level video editing jobs**: Eliminated
- **Film school enrollment**: Down 40%
- **AI-first production companies**: Valued at billions
- **Human-made premium**: "Certified Human Created" becomes a luxury brand

But here's the twist: creativity won't die — it'll explode. When tools become invisible, ideas become everything. The kid with a brilliant concept but no budget suddenly competes with Pixar.

The entrepreneur who couldn't afford a commercial now has Super Bowl-quality ads.

The Question Nobody's Asking

Everyone's debating whether AI video is "good enough" to replace human creators. That's the wrong question.

The right question is: **What happens when an entire generation grows up never learning traditional video production because AI does it better?**

We're not just automating tasks — we're eliminating the apprenticeship model that created master filmmakers. No more film students learning by doing grunt work.

No more editors developing an eye through thousands of hours of cutting. No more cinematographers understanding light through practical experience.

Is that progress or cultural suicide?

I've spent two days in Sora, and I honestly don't know. What I do know is that my nephew just made a better music video than I could have produced with $10,000 and a crew. He's 14.

He learned Sora in an afternoon.

Maybe that's terrifying. Maybe it's beautiful. Maybe it's both.

**What's your take — is Sora democratizing creativity or destroying it? And more importantly: what are you going to create with it that you couldn't before?**
