Qatar Quietly Just Put AI on a 2-Week Clock. It’s Worse Than You Think.

I just watched the spot price for H200 compute instances jump 40% in three hours. I thought it was a glitch in the AWS dashboard—a regional outage, maybe a fiber cut in Virginia. It wasn't.

While we’ve all been arguing over whether **Claude 4.6** has better reasoning than **ChatGPT 5**, the physical world just sent us a reminder that AI isn't made of "cloud." It’s made of gas, sand, and extremely fragile cooling systems.

Yesterday, news leaked from the port of Ras Laffan that Qatar—which provides nearly 30% of the world’s helium—is shuttering two of its major liquefaction trains for "unspecified technical reasons." They’ve put the global supply chain on a **two-week clock**.

If you think this is just about party balloons, you’re about to get a very expensive education in semiconductor physics.

For those of us building in the AI space, **this is the "Oh Sh*t" moment** we should have seen coming months ago.

The Ghost in the Machine is Actually Gas

We talk about AI like it’s this ethereal, digital spirit. In reality, the high-end chips running our latest models are the most physically demanding objects ever manufactured.

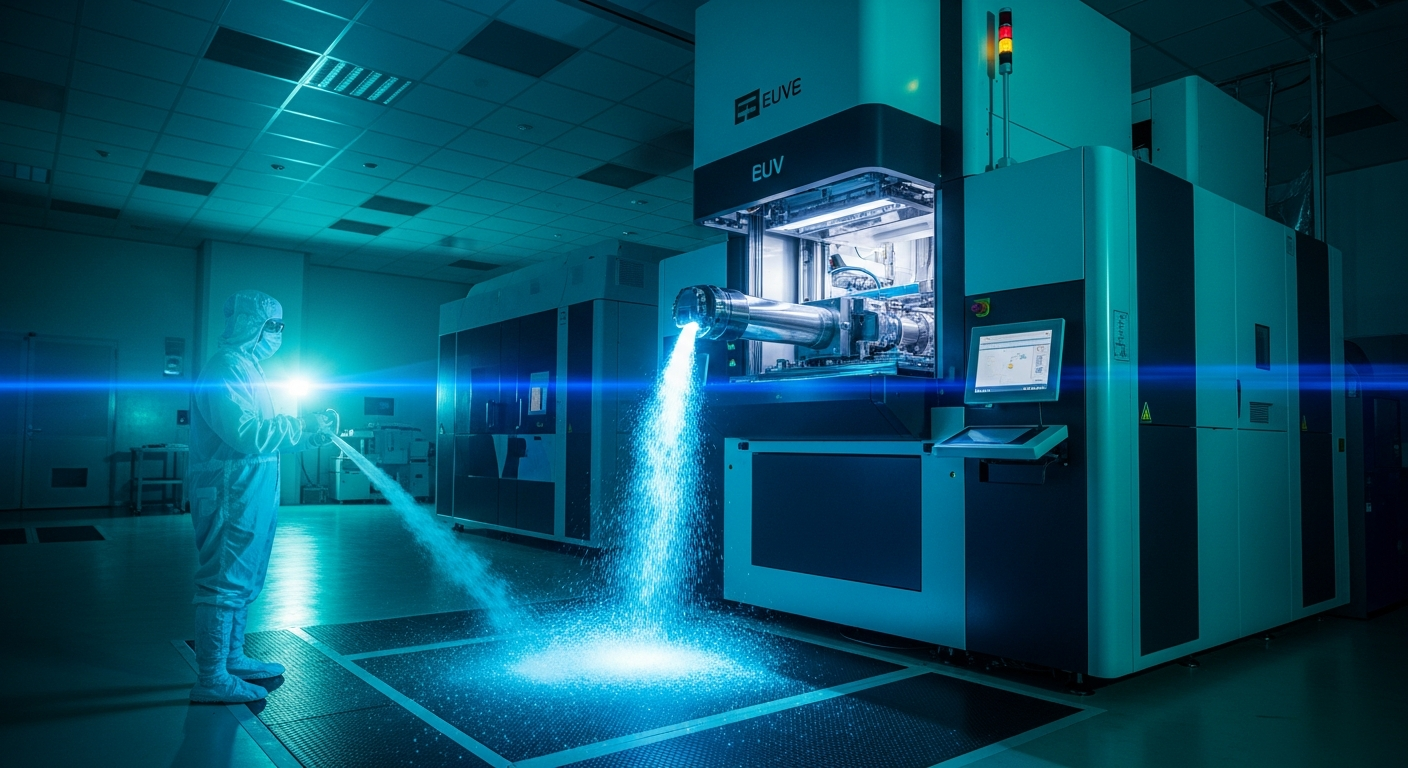

To build NVIDIA's Blackwell chips or the newer Rubin generation, you need Extreme Ultraviolet (EUV) lithography. These machines are the size of a bus, and the newest ones cost around $350 million each.

They also happen to be **absolute helium hogs**.

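Helium does several jobs in a fab that are hard to hand to any other gas: it carries heat away from wafers during processing, it provides an inert atmosphere for the delicate stages of chip etching, and it is the standard gas for leak-testing vacuum systems.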

You can't just swap it out for nitrogen or "hope for the best." **Without helium, the world’s most advanced fabs stop dead.**

I’ll admit, I spent the last year obsessing over context windows and token optimization. I never once looked at a map of Qatari gas fields.

That was a mistake that’s now costing my team thousands in projected compute overhead.

Why 14 Days Is the Magic Number

The semiconductor industry runs on a "Just-in-Time" (JIT) delivery model that would make a heart surgeon nervous.

Most of the major fabs in Taiwan and Korea only keep about **10 to 14 days of helium reserves** on-site.

It’s too expensive and dangerous to store in massive quantities for long periods. When the Qatari supply line halts, the clock starts ticking immediately.

We aren't looking at a "slowdown" in late 2026; we are looking at a **potential production cliff** by the end of this month.
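The arithmetic behind that clock is simple enough to sketch. This is a back-of-the-envelope model, not anything a fab actually runs: `runway_days` and `supply_fraction` are names I made up, and the only number taken from this article is the 10-to-14-day reserve. It assumes a fab burns helium at a constant rate and draws on reserves only for whatever deliveries fail to cover.

```python
def runway_days(reserve_days: float, supply_fraction: float) -> float:
    """Days until on-site helium runs out (hypothetical toy model).

    reserve_days: on-site stock, measured in days of normal consumption
        (the article cites roughly 10 to 14).
    supply_fraction: share of normal deliveries still arriving,
        where 0.0 means fully cut off and 1.0 means business as usual.
    """
    if not 0.0 <= supply_fraction <= 1.0:
        raise ValueError("supply_fraction must be between 0 and 1")
    shortfall = 1.0 - supply_fraction  # fraction of daily use taken from reserves
    if shortfall == 0.0:
        return float("inf")  # deliveries cover everything, reserves never drain
    return reserve_days / shortfall


if __name__ == "__main__":
    # Illustrative inputs only; real fab numbers are not public in this article.
    for fraction in (0.0, 0.5, 0.7):
        print(f"{fraction:.0%} of deliveries -> {runway_days(14, fraction):.1f} days")
```

The takeaway: the clock only reads "14 days" for a fab whose deliveries stop completely. Anyone still receiving partial shipments gets more runway, which is exactly why allocation priority matters so much.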

If those Qatari trains don't spin back up in the next 10 days, the priority for helium will shift. Hospitals (for MRIs) and scientific research will get first dibs.

**Chip manufacturing will be the first to get throttled.**

I’ve spent the morning talking to friends at the major cloud providers. The vibe is "controlled panic." They are already quietly **restricting new instance reservations** for H200 and B200 clusters.

If you don't already have your compute locked in, you might be back to running local Llama models on your MacBook sooner than you think.

The Death of the 'Infinite Scaling' Illusion

For the last 18 months, since the late 2024 boom, we’ve operated under the assumption that compute is an infinite resource. We figured NVIDIA would just keep shipping, and we’d just keep scaling.

This Qatar shutdown exposes the lie. **The Scaling Laws are hitting a physical wall**, and it’s not a lack of data or a lack of math. It’s a lack of basic elemental resources.

We’ve become so detached from the hardware that we forgot that **ChatGPT 5 requires a massive physical footprint**.

Every time we demand a larger model, we are demanding more helium, more neon, and more rare earth metals.

If this shutdown lasts more than a month, we will see the first **"Compute Recession" of the 2020s**.

Development on the next generation of models—the ones we expected to see in early 2027—could be pushed back by half a year or more.

What This Means for Your Stack Right Now

If you’re a developer or a CTO, you need to stop reading the latest "prompt engineering" threads and start looking at your infrastructure.

The era of **lazy compute** is over, at least for the next few months.

First, **optimize your token usage** like it’s 2022 again. If compute prices stay this high, your margins are going to evaporate.

I’ve already told my team to pivot back to smaller, fine-tuned models rather than relying on the "smartest" (and most compute-heavy) APIs for every trivial task.
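Here is roughly what that pivot can look like in code. Treat it as a minimal sketch: the tier names, the per-token prices, and the 1-to-3 difficulty score are all placeholders I invented for illustration, not real models or real price lists. The idea is simply to send each task to the cheapest tier that can handle it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                 # hypothetical label, not a real model
    usd_per_1k_tokens: float  # placeholder price, not a real quote
    max_difficulty: int       # hardest task this tier should take (1 to 3)


# Ordered cheapest first so the router can stop at the first match.
TIERS = [
    ModelTier("small-finetuned", 0.0005, 1),
    ModelTier("mid-general", 0.003, 2),
    ModelTier("frontier-api", 0.03, 3),
]


def pick_tier(difficulty: int) -> ModelTier:
    """Return the cheapest tier rated for the given task difficulty."""
    for tier in TIERS:
        if difficulty <= tier.max_difficulty:
            return tier
    return TIERS[-1]  # anything harder than the scale goes to the top tier


def estimate_cost(tokens: int, tier: ModelTier) -> float:
    """Estimated spend in USD for a request of `tokens` tokens."""
    return tokens / 1000 * tier.usd_per_1k_tokens


if __name__ == "__main__":
    for difficulty in (1, 2, 3):
        tier = pick_tier(difficulty)
        print(difficulty, tier.name, f"${estimate_cost(2000, tier):.4f}")
```

How you score difficulty is the hard part, and it is deliberately left out here. Even a crude rule (classification and extraction go small, open-ended reasoning goes big) moves most of the traffic off the expensive tier.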

Second, if you have any long-term projects that require heavy training, **lock in your hardware yesterday**. Don't wait for the official press releases.

By the time the mainstream media picks up on the "Helium Crisis," the prices will already be 5x what they are today.

Third, start investigating **inference-time optimization**. Techniques like quantization and distillation aren't just for "fun" anymore; they are survival strategies.
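If quantization is new to you, the core idea fits in a few lines. This is a toy sketch of symmetric int8 quantization in plain Python, written for illustration; it is not how any particular framework implements it, and the function names are mine. It stores each weight as a small integer plus one shared scale factor, trading a little precision for roughly a quarter of the memory of 32-bit floats.

```python
def quantize_int8(weights):
    """Map a non-empty list of floats to integers in [-127, 127] plus a scale."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole list; fall back to 1.0 if every weight is zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    original = [0.42, -1.0, 0.013, 0.0, 0.77]  # made-up example weights
    q, scale = quantize_int8(original)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(original, restored))
    print("int8 values:", q)
    print("worst round-trip error:", worst)
```

The round-trip error per weight is bounded by about half the scale, which is why this works well when weights sit in a narrow range and badly when a few outliers stretch it. Production schemes deal with that using per-channel or per-group scales.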

We’ve been spoiled by cheap, abundant intelligence. That’s a luxury we might not have for the rest of 2026.

The Geopolitics of a Balloon Gas

It’s a bit ironic, isn't it? The future of AGI—the thing that’s supposed to solve all of humanity’s problems—is currently being held hostage by a maintenance schedule in the Persian Gulf.

This highlights the extreme **fragility of our tech stack**. We’ve built a skyscraper of software on a foundation of single-source hardware and rare gases.

One hiccup in Qatar, one tremor in Taiwan, and the whole thing starts to wobble.

I’m not saying the AI revolution is over. Far from it. But I am saying that we need to grow up. **Intelligence has a physical cost**, and we’ve been ignoring the bill for too long.

We need to stop acting like AI is a magic trick and start treating it like the heavy industry it actually is.

That means diversifying supply chains and, more importantly, **learning to do more with less**.

Are We Ready for the 'Great Pause'?

What happens if the 2-week clock runs out? We might see a "Great Pause" in AI development. For the first time in a decade, the hardware might not be there to support our ambitions.

I actually think this could be a good thing. It might force us to **stop throwing more parameters at every problem** and start actually innovating on the architecture.

We’ve been "brute-forcing" intelligence because compute was cheap. Now that it’s getting expensive, we have to get smart.

I’m curious—how much of your workflow depends on the "latest and greatest" models staying cheap and available? If your API costs tripled tomorrow, would your business still exist?
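You can answer that question with two lines of arithmetic. The sketch below is a hypothetical helper with made-up monthly figures; plug in your own revenue, API bill, and other costs. It computes your gross margin and the largest multiple your API bill can grow by before that margin hits zero.

```python
def gross_margin(revenue: float, api_cost: float, other_costs: float = 0.0) -> float:
    """Fraction of revenue left after API spend and other costs."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - api_cost - other_costs) / revenue


def break_even_multiplier(revenue: float, api_cost: float, other_costs: float = 0.0) -> float:
    """Largest factor the API bill can grow by before margin reaches zero."""
    if api_cost <= 0:
        raise ValueError("api_cost must be positive")
    return (revenue - other_costs) / api_cost


if __name__ == "__main__":
    # Illustrative monthly numbers in thousands of USD, not real data.
    revenue, api_cost, other = 100.0, 20.0, 30.0
    print("margin today:", gross_margin(revenue, api_cost, other))
    print("margin if API costs triple:", gross_margin(revenue, api_cost * 3, other))
    print("break-even multiplier:", break_even_multiplier(revenue, api_cost, other))
```

If your break-even multiplier is below 3, a tripling puts you underwater. That is the number to know before the price hikes reach you.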

Let’s talk about it in the comments. Are you seeing the price hikes yet, or is it just my corner of the cloud?
