Poison Fountain: An Anti-AI Weapon - A Developer's Story

Poison Fountain: The Anti-AI Weapon Threatening the Data Gold Rush

I used to tell every junior data scientist: "Data is king. More data, better models." I was wrong.

After seeing how much damage emerging data poisoning techniques, exemplified by concepts like 'Poison Fountain,' could do to AI models, I realized we've been building our future on quicksand, and the ground is about to shift beneath us.

This isn't just a technical novelty; it's a declaration of war on the assumption that AI can simply consume the world's information without consequence.

For years, the narrative has been clear: AI models need vast oceans of data to learn, grow, and achieve their superhuman feats.

Companies like OpenAI, Google, and Anthropic have voraciously scraped the internet, often without explicit consent or compensation, justifying it as "fair use" for the greater good of technological advancement.

Creators, artists, writers, and even entire industries have watched helplessly as their intellectual property became training fodder, their unique styles assimilated, and their livelihoods threatened.

It felt like an unstoppable force, a digital land grab where the biggest models always won.

But what if the land itself could fight back? What if the very data these models crave could become their undoing?

That's the chilling promise of 'Poison Fountain' – a conceptual framework for anti-AI data poisoning that is forcing a radical re-evaluation of data acquisition, model training, and the future of digital ownership.

This isn't about legal battles that drag on for years; it's about a direct, technical countermeasure that makes the data itself toxic to AI.

The gold rush is threatened, because the gold itself could soon become radioactive.

Everyone Is Wrong About the "Inevitability" of AI Data Capture

The conventional wisdom has been that the data acquisition war is a one-sided affair.

AI companies, armed with powerful crawlers and bottomless pockets, would always find a way to hoover up whatever they needed.

The prevailing belief was that creators and businesses could complain, but ultimately, their data would be absorbed, processed, and synthesized.

"You can't stop progress," they'd say, implying that resistance was futile. This passive acceptance, this belief in AI's inevitable data supremacy, is fundamentally flawed.

Poison Fountain shatters this illusion.

It operates on a principle of intentional data poisoning, subtly altering datasets in ways that are imperceptible to human eyes but catastrophic to AI training algorithms.

Imagine a library where every tenth book has a subtle, almost unnoticeable smudge on a specific character, or a page number swapped.

A human might read past it, but an AI trying to learn the precise structure of language from millions of such books would absorb those inconsistencies as if they were real patterns, leading to unpredictable and often harmful outputs.

It’s not about making data *unreadable*; it's about making it *unlearnable* in a coherent way.

This isn't just about privacy; it's about control, and the ability of data to defend itself against unchecked AI consumption.

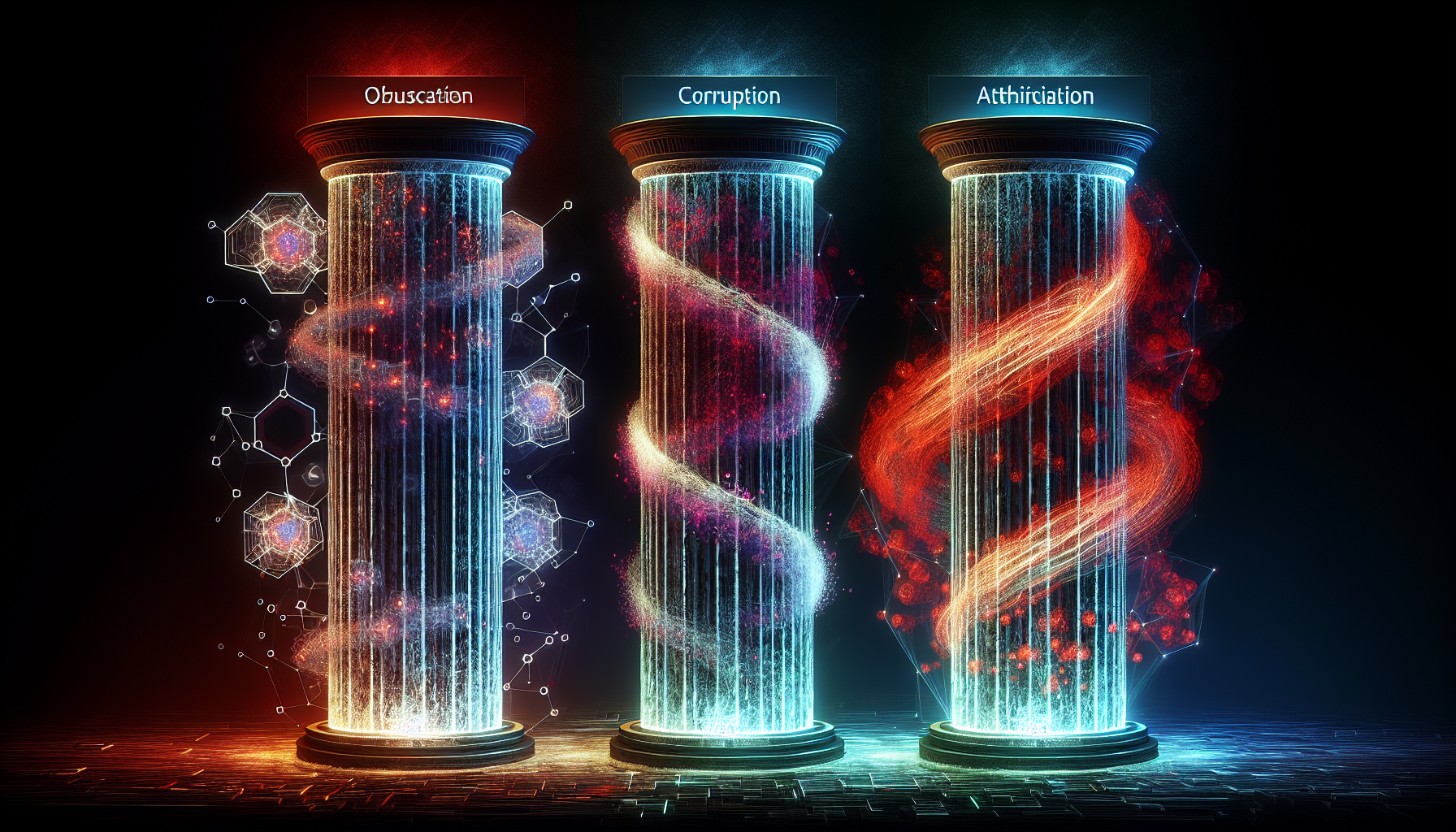

The Three Pillars of Data Integrity Warfare

Understanding the implications of tools like Poison Fountain requires a new mental model for how we perceive data in the age of generative AI.

I call it **The Data Integrity Warfare Framework**, built upon three distinct, yet interconnected, pillars: Obfuscation, Corruption, and Attribution.

This isn't just a theoretical exercise; these are the battlefronts emerging right now.

1. Obfuscation: The Camouflage

The first pillar is about making data appear normal to humans but confusing or misleading to AI. This is the subtle art of camouflage.

Think about an image filter that adds imperceptible noise patterns specifically designed to trick an image recognition model into misclassifying an object, without altering the visual experience for a human.
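The image case is the textbook adversarial-example setting. Here is a minimal sketch of the fast gradient sign method (FGSM), one well-known way to compute such noise, run against a toy logistic-regression "image classifier". It is not Poison Fountain's actual method, which isn't described here. The 8-pixel image, the weights, and `eps` are all made up for illustration.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Toy logistic 'image classifier': probability that x is class 1."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def sign(g: float) -> int:
    return (g > 0) - (g < 0)


def fgsm(weights, bias, x, label, eps):
    """Fast gradient sign method: move every pixel by at most eps in whichever
    direction increases the model's loss on the true label."""
    p = predict(weights, bias, x)
    # For logistic (cross-entropy) loss, d(loss)/d(x_i) = (p - label) * w_i
    grad = [(p - label) * w for w in weights]
    # Step along the gradient's sign, then clip back into the valid [0, 1] pixel range
    return [min(1.0, max(0.0, xi + eps * sign(g))) for xi, g in zip(x, grad)]


# Hypothetical 8-pixel grey "image" and hand-picked weights (illustration only)
weights = [0.9, -0.4, 0.7, -0.8, 0.6, -0.5, 0.8, -0.3]
bias = -0.2
image = [0.5] * 8
adv = fgsm(weights, bias, image, label=1, eps=0.1)

print(f"clean:       p(class 1) = {predict(weights, bias, image):.3f}")
print(f"adversarial: p(class 1) = {predict(weights, bias, adv):.3f}")
```

With these numbers the clean input scores about 0.574 (class 1) while the perturbed one scores about 0.450 (class 0), even though no pixel moved by more than 0.1. For a linear model (ignoring clipping), the score drops by `eps` times the sum of the absolute weights. On a real image with hundreds of thousands of pixels, a far smaller and genuinely invisible `eps` can therefore have the same effect.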

For text, it might involve embedding specific, statistically rare character sequences or grammatical structures that disrupt how an LLM tokenizes and learns from the text, yet remain perfectly understandable to a human reader.

The goal here isn't to destroy the data's utility for human consumption, but to degrade its value for machine learning.

It's a defensive strategy, aimed at making the cost of cleaning and validating scraped data prohibitively expensive for AI developers.

If every scraped document requires manual human review to confirm its integrity, the economic model of mass data acquisition collapses.
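To make the cost argument concrete, here is a minimal sketch of the cheapest kind of screen a scraper might run before anything reaches a human. It flags documents containing invisible Unicode "format" characters (general category Cf, such as U+200B ZERO WIDTH SPACE), which are one simple way to hide markers in text. The function names and the zero-tolerance threshold are hypothetical. A screen like this only catches crude tricks, and everything it flags still lands in a review queue.

```python
import unicodedata


def hidden_char_report(text: str) -> dict:
    """Count invisible Unicode 'format' characters (category Cf), e.g.
    U+200B ZERO WIDTH SPACE, that a human reader never sees."""
    counts: dict = {}
    for ch in text:
        if unicodedata.category(ch) == "Cf":
            name = unicodedata.name(ch, f"U+{ord(ch):04X}")
            counts[name] = counts.get(name, 0) + 1
    return counts


def triage(text: str, max_hidden: int = 0) -> str:
    """Hypothetical ingestion gate: 'accept' clean text, send the rest to review.
    Flagged text goes to a human rather than being auto-stripped, because Cf
    characters also have legitimate uses (e.g. emoji sequences, some scripts)."""
    hidden = sum(hidden_char_report(text).values())
    return "accept" if hidden <= max_hidden else "review"


print(triage("Data is king."))                          # accept
print(triage("Data\u200b is\u200b king."))              # review
print(hidden_char_report("Data\u200b is\u200b king."))  # {'ZERO WIDTH SPACE': 2}
```

The "review" branch is where the economics bite: the automated check is nearly free, but every flagged document costs human time.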

2. Corruption: The Toxin

This is where 'Poison Fountain' lives up to its name. Corruption involves actively introducing harmful, biased, or contradictory patterns into datasets.

Unlike obfuscation, which aims to confuse, corruption aims to *infect* the model.

Imagine training an AI on millions of lines of code where a specific function name, when called, subtly introduces a memory leak, or a common design pattern is consistently associated with an insecure implementation.

The impact of corruption is profound. An AI model trained on such data might develop catastrophic blind spots, generate insecure code, or produce deeply biased text that reflects the poisoned input.

This isn't just about poor performance; it's about turning the model into a liability for critical applications.

The ultimate goal of this pillar is to make AI models untrustworthy, forcing a re-evaluation of their outputs and the data sources they consume.

It's a high-stakes game where the integrity of the AI itself is on the line.

3. Attribution: The Signature

The final pillar is about embedding traceable markers within data. This is less about immediate poisoning and more about accountability and legal leverage.

Attribution techniques involve watermarking, steganography, or other methods to embed unique, verifiable signatures within data.

If an AI model then reproduces content that contains these signatures, it provides strong evidence of the data's origin and its unauthorized use.
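One simple attribution technique is a canary: a unique, unguessable string embedded in published content. If a model later reproduces it verbatim, that's strong evidence the document was in its training data. Below is a minimal sketch that derives a per-document canary with HMAC-SHA256 from Python's standard library. The `canary-` prefix, the visible footer placement, the key, and the helper names are assumptions for illustration.

```python
import hashlib
import hmac


def make_canary(secret_key: bytes, doc_id: str) -> str:
    """Derive a unique, unguessable marker for one document via HMAC-SHA256."""
    digest = hmac.new(secret_key, doc_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"canary-{digest[:32]}"  # 128 bits makes accidental matches negligible


def embed(text: str, canary: str) -> str:
    """Simplest possible watermark: append the canary as a footer line."""
    return f"{text}\n\n[{canary}]"


def find_canaries(model_output: str, secret_key: bytes, doc_ids) -> list:
    """Return the IDs of our documents whose canary appears verbatim in the output."""
    return [d for d in doc_ids if make_canary(secret_key, d) in model_output]


key = b"replace-with-a-real-secret"  # hypothetical key; keep it private
docs = {"post-001": "My first essay...", "post-002": "My second essay..."}
published = {d: embed(text, make_canary(key, d)) for d, text in docs.items()}

# Pretend a model regurgitated post-002 word for word
suspicious_output = "...as the author put it. " + published["post-002"]
print(find_canaries(suspicious_output, key, docs))  # ['post-002']
```

Two caveats apply. A visible marker like this is easy for a scraper to strip, so it sits at the simplest end of the watermarking spectrum. Also, the absence of a canary in model outputs proves nothing, since models don't memorize most of what they see.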

While not directly "poisoning," attribution acts as a deterrent and a mechanism for legal recourse.

In the next 12 months, as intellectual property lawsuits against AI companies intensify, the ability to definitively prove that a model was trained on specific, copyrighted material will be invaluable.

Attribution shifts the power dynamic, giving data owners a weapon in the legal arena, forcing AI developers to be more transparent and ethical in their data sourcing.

The Real-World Implications of a Data Cold War

The emergence of tools and concepts like Poison Fountain isn't just a fascinating technical development; it has profound implications for every role in the tech industry and beyond.

The honeymoon period of unchecked data consumption by AI is officially coming to an end.

For Developers and Data Scientists: New Skills, New Threats

If you're a data engineer, your job just got a lot more interesting – and dangerous. You're not just building pipelines; you're building fortresses and, potentially, counter-intelligence systems.

I expect demand for expertise in data provenance, adversarial machine learning, and data integrity validation to skyrocket by mid-2027.

Developers will need to understand not just how to *use* data, but how to *protect* it from poisoning and how to *detect* poisoned data in their training sets.
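Detection is the other half of that skill set. As one example of the kind of check a data team might run, here is a minimal sketch of a k-nearest-neighbour label-consistency filter. It flags training examples whose label disagrees with most of their neighbours, a simple, well-studied defence against label-flipping. The toy 2-D points, the labels, and `k=3` are made up. It won't catch poisoning that keeps labels consistent, such as subtle backdoor triggers. At real scale you would run it on learned embeddings with an approximate nearest-neighbour index rather than brute force.

```python
import math
from collections import Counter


def knn_label_suspects(points, labels, k=3):
    """Flag examples whose label disagrees with the majority label of their
    k nearest neighbours: a simple defence against label-flipping poisoning."""
    suspects = []
    for i, p in enumerate(points):
        # Brute-force distances to every other example (fine for a sketch)
        neighbours = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        neighbour_labels = [labels[j] for _, j in neighbours[:k]]
        majority, _ = Counter(neighbour_labels).most_common(1)[0]
        if majority != labels[i]:
            suspects.append(i)
    return suspects


# Two toy clusters; index 4 sits among the cats but is deliberately labelled "dog"
points = [(0, 0), (0, 1), (1, 0), (1, 1), (0.5, 0.5),
          (5, 5), (5, 6), (6, 5), (6, 6), (5.5, 5.5)]
labels = ["cat"] * 4 + ["dog"] * 6
print(knn_label_suspects(points, labels))  # [4]
```

Flagged examples are candidates for review, not proof of poisoning. Genuinely ambiguous or rare examples near a class boundary get flagged too.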

This is a whole new cybersecurity frontier. Expect new certifications and specialized roles to emerge, focusing on "AI Data Integrity Engineering" or "Adversarial Data Science."

For Companies: IP Protection and Ethical Data Sourcing

For any business that relies on proprietary data or creates valuable digital assets, Poison Fountain represents a powerful new defense mechanism.

Companies will begin to actively implement "poisoning" techniques on their publicly accessible data to deter unauthorized AI scraping.

This could mean embedding subtle distortions in their public APIs, documentation, or even marketing materials.

The legal landscape, already turbulent, will see new precedents set around "active data defense" and the responsibilities of AI model developers to ensure their training data is clean and ethically sourced.

The cost of acquiring and cleaning data will rise dramatically, forcing AI companies to consider licensing or purchasing data rather than simply scraping it.
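Ethical sourcing at this scale starts with bookkeeping. Here is a minimal sketch, under assumptions, of a provenance manifest. Each document is fingerprinted with SHA-256 when acquired and tagged with its source and license. It is re-checked before training, so that unlicensed or altered content is excluded. The record fields, license strings, and example URLs are hypothetical. Note that a hash proves a document hasn't changed since ingestion, not that it was clean when you got it.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ProvenanceRecord:
    source_url: str
    license_id: str
    sha256: str


def fingerprint(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def record(text: str, source_url: str, license_id: str) -> ProvenanceRecord:
    """Capture where a document came from, under what terms, and its exact content."""
    return ProvenanceRecord(source_url, license_id, fingerprint(text))


def admissible(text: str, rec: ProvenanceRecord, allowed_licenses: set) -> bool:
    """Admit a document to training only if its license is on the allow-list and
    its content still matches the fingerprint taken at acquisition time."""
    return rec.license_id in allowed_licenses and fingerprint(text) == rec.sha256


ALLOWED = {"CC-BY-4.0", "licensed-from-publisher"}  # hypothetical policy
doc = "Some licensed article text."
rec = record(doc, "https://example.com/article", "CC-BY-4.0")

print(admissible(doc, rec, ALLOWED))                # True
print(admissible(doc + " [edited]", rec, ALLOWED))  # False: content changed after ingestion
scraped = record(doc, "https://example.com/other", "unknown")
print(admissible(doc, scraped, ALLOWED))            # False: license not on the allow-list
```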

For AI Models: Degradation, Bias, and Untrustworthiness

The most direct impact will be on the AI models themselves.

If data poisoning techniques become widespread, the quality and reliability of general-purpose large language models and image generators could degrade significantly.

Models might start exhibiting unpredictable behaviors, generating nonsensical outputs, or even perpetuating harmful biases introduced through poisoned data.

This will create a crisis of trust in AI, forcing users and businesses to question the provenance and integrity of the models they use.

It means a future where "data integrity" becomes as critical a performance metric as accuracy or latency, fundamentally shifting how we evaluate and trust AI.

The Bigger Picture: Reclaiming Digital Sovereignty

Poison Fountain isn't merely a technical trick; it's a statement.

It's a powerful assertion of digital sovereignty in an era where individual and corporate data has been treated as a public resource for AI development.

It shifts the power dynamic from the insatiable AI models back towards the creators and custodians of data.

This "anti-AI weapon" forces a critical re-evaluation of the entire AI supply chain, from data acquisition to model deployment.

In a world where data is power, who truly controls the narrative?

Is Poison Fountain the equalizer we need, or just the first shot in an inevitable digital war for truth, ownership, and the very fabric of our digital reality? What's your take?

Let's discuss in the comments.

---