Mercedes Just Actually Admitted Screens Were A Mistake. This Changes Everything

I almost crashed my car because I was trying to find the seat heaters in a sub-menu.

That was the moment I realized we’ve been sold a $100,000 lie: that a slab of glass is "luxury." **Mercedes-Benz just admitted it too**, and their pivot back to physical buttons is the most important tech story of 2026.

For the last five years, we were told that the "Hyperscreen" was the pinnacle of human engineering. If you weren't surrounding your driver in 56 inches of glowing OLED, you were a dinosaur.

But last week, when Mercedes confirmed they are bringing back tactile controls for critical functions, they didn't just change a design language.

They signaled the end of the **"iPad-on-wheels" era** and the beginning of a much-needed return to human-centric design.

This isn't just about cars, though. It’s a confession that impacts everything from your smart home to how we interact with ChatGPT 5.

We’ve reached peak "glass," and the industry is finally waking up to the fact that humans are physical creatures who need physical feedback.

The Great Glass Hubris of the 2010s

We need to be honest about why every car manufacturer, led by Tesla and followed sheepishly by Mercedes, moved to screens in the first place.

It wasn't because it was better for the driver; **it was because it was cheaper for the manufacturer**.

Engineering a mechanical dial that has the "perfect" click—the kind of click that makes a Leica camera feel like a piece of jewelry—is incredibly expensive and difficult.

It requires complex supply chains, physical tooling, and rigorous testing for wear and tear. Writing a line of code to put a virtual slider on a screen costs essentially zero dollars at scale.

We fell for it because it looked like the future. We walked into showrooms and saw these glowing, vibrant dashboards and thought we were living in *Minority Report*.

But the novelty wore off the first time we had to take our eyes off a 70-mph highway for three seconds just to adjust the fan speed.
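To put a number on that glance, here is a quick back-of-the-envelope sketch. It assumes a constant 70 mph and a full three seconds of eyes-off time, both taken from the scenario above; the function name is just illustrative.

```python
# One mile is 1609.344 meters, and one hour is 3600 seconds.
MPH_TO_METERS_PER_SECOND = 1609.344 / 3600


def distance_traveled_m(speed_mph: float, seconds: float) -> float:
    """Distance covered at a constant speed, in meters."""
    return speed_mph * MPH_TO_METERS_PER_SECOND * seconds


# Three seconds of looking at a screen at highway speed.
eyes_off_distance = distance_traveled_m(70, 3)
print(f"{eyes_off_distance:.0f} m")  # prints "94 m"
```

That is roughly the length of a football field covered without looking at the road.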

The UX Debt We’ve Been Paying

In software engineering, we talk about "technical debt"—the cost of choosing an easy solution now instead of a better one later. What Mercedes and the rest of the industry created was **UX Debt**.

By moving critical life-and-death controls into software menus, they traded "glanceability" for "scrollability." In a world where our attention is already fractured by notifications, the car was supposed to be the one place where our focus remained external.

Instead, the car became another device demanding our internal gaze.

Mercedes' admission is a realization that **proprioception is a feature, not a bug**. Proprioception is your brain’s ability to know where your hand is without looking at it.

You can find the volume knob on a 1998 S-Class in total darkness while navigating a blizzard.

You cannot find a "virtual slider" on a 2024 EQS under the same conditions without significant cognitive load.

The "Tactile Loop" Framework

To understand why this change is happening now, we have to look at how humans actually process information.

I call this the **Tactile Loop**, a three-part framework for identifying what should be a button and what should be a screen.

1. The High-Stakes Instant (HSI)

Any function that requires an immediate response to an environmental change belongs in a physical button. This includes wipers, defrost, hazard lights, and volume.

If you have to wait for a software boot-up or a "loading" spinner from a Claude 4.6-powered voice assistant to clear your foggy windshield, the UI has failed.

2. The Muscle Memory Path

Functions we use daily, like climate control or seat adjustments, rely on the brain’s ability to automate physical movements.

When you change the "UI" of these controls via an Over-the-Air (OTA) update, you aren't "improving" the car; you’re **lobotomizing the driver's muscle memory**.

Physical buttons provide a permanent, unmoving target for our nervous system.

3. The Latency Gap

Glass interfaces have inherent latency. There is the delay of the touch digitizer, then the processing time of the OS, and at the end of it all, no haptic confirmation that the input even registered.

A mechanical switch has **effectively zero latency**.

The moment the circuit closes, the action begins. In a high-speed environment, even a 100ms delay is the difference between a minor adjustment and a dangerous distraction.
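If you want to apply the Tactile Loop to your own product, the three tests above can be sketched as a simple checklist. This is a minimal illustration, not a formal spec: the `CarFunction` type, its field names, and the example flag values are all assumptions made for the sake of the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CarFunction:
    """A control to classify. All fields are illustrative assumptions."""
    name: str
    needs_instant_response: bool  # 1. The High-Stakes Instant
    used_daily: bool              # 2. The Muscle Memory Path
    latency_sensitive: bool       # 3. The Latency Gap


def recommended_control(fn: CarFunction) -> str:
    """Return "button" if the function passes any Tactile Loop test."""
    if fn.needs_instant_response or fn.used_daily or fn.latency_sensitive:
        return "button"
    return "screen"


# Hypothetical examples; the flag values are judgment calls, not data.
wipers = CarFunction("wipers", True, True, True)
seat_heater = CarFunction("seat heater", False, True, False)
ambient_theme = CarFunction("ambient lighting theme", False, False, False)

for fn in (wipers, seat_heater, ambient_theme):
    print(f"{fn.name}: {recommended_control(fn)}")
```

Passing any one test is enough to earn a button here. That is a deliberately strict reading of the framework; a real design team would likely weigh the three tests rather than treat them as a hard either-or.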

Why 2026 is the Year of the "Analog Premium"

We are seeing a massive cultural shift in how we define "high tech." In 2022, high tech meant "more screens." In 2026, we’ve realized that **simplicity is the ultimate sophistication**.

Just look at the high-end watch market. People aren't spending $50,000 on a Patek Philippe because it tells time better than an Apple Watch.

They’re buying it because it is a tactile, mechanical masterpiece that works every time you touch it. Mercedes is pivoting back to this "Analog Premium" model.

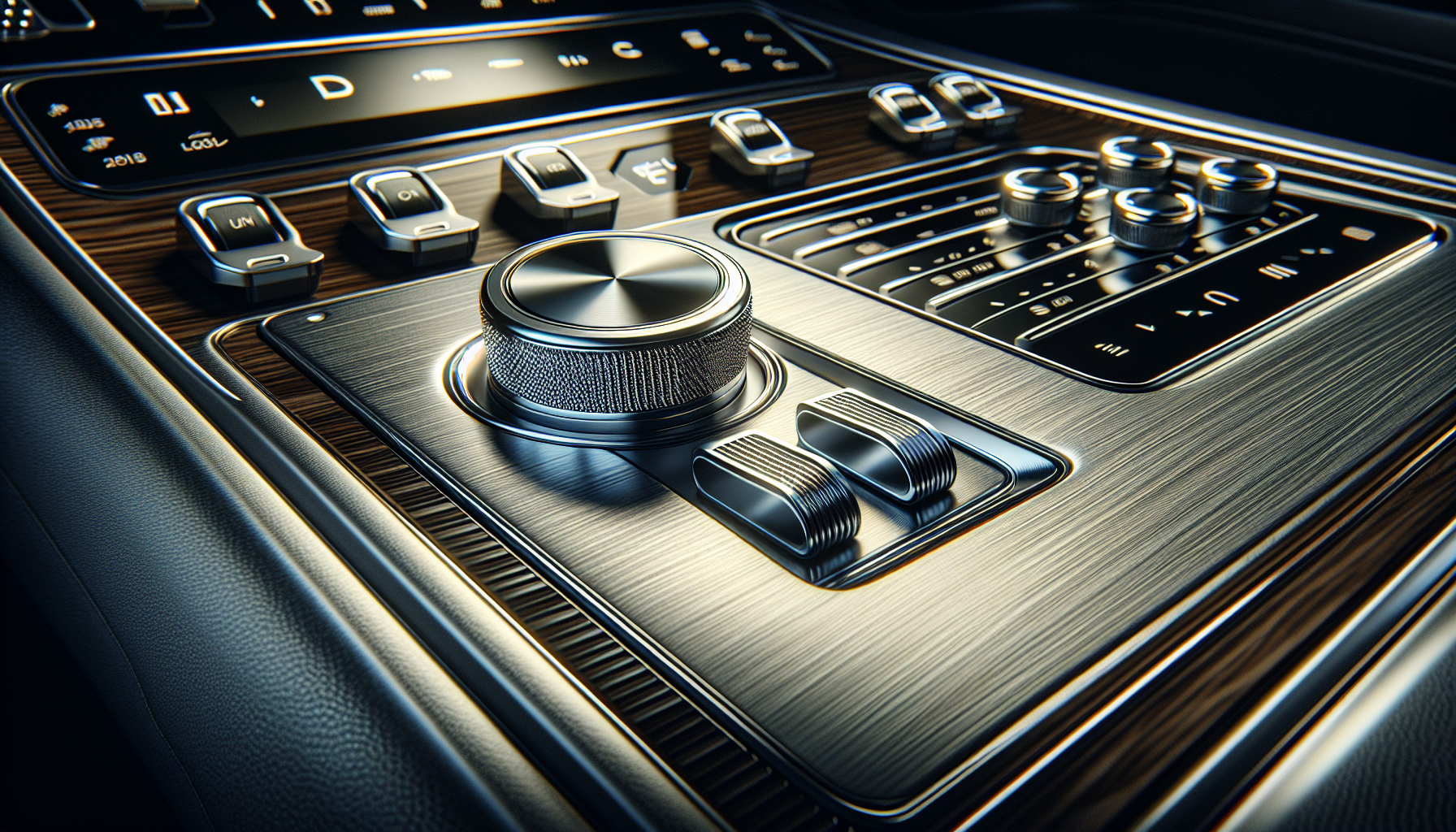

They’ve realized that a screen is a commodity, but a perfectly weighted, knurled aluminum dial is a luxury.

By bringing back buttons, they are re-establishing the car as a piece of hardware rather than a disposable piece of software.

It’s a move that targets the "tech fatigue" we’re all feeling after years of being trapped in digital-only ecosystems.

The Role of AI in the Death of the Screen

There is an irony here: the rise of hyper-intelligent AI like **Claude 4.6 and Gemini 2.5** is actually accelerating the return of physical buttons.

For the last few years, the industry thought voice control would replace buttons. "Just tell the car what you want," they said. But voice is slow.

It requires you to stop your music, wait for a listening prompt, speak your intent, and wait for the AI to parse it. **A button is a one-step command.**

The best AI implementation in a car isn't a "talking head" on a screen; it’s an invisible layer that manages the complex stuff (like route optimization and battery management) while leaving the human-centric controls (like temperature and volume) to the human's fingertips.

Mercedes' new strategy treats AI as a co-pilot, not a replacement for the physical interface.

What This Means for Developers and Designers

If you’re a dev working in the IoT, mobile, or industrial space, take note. The "screen-first" era is over. We are entering the era of **Multimodal Tactility**.

We’ve spent a decade learning how to build for the thumb; now we need to learn how to build for the hand.

This means investing in haptics that actually feel like something, exploring gesture control that doesn't require a camera, and realizing that **the best UI is often no UI at all**.

I recently spoke with a senior designer at a major appliance firm who told me they’re ditching touchscreens on ovens for the same reason.

People were tired of trying to set a timer with greasy fingers on a piece of unresponsive glass. The "smart" oven of 2027 will have a massive, satisfying dial that changes color to show temperature.

That is progress.

The Human Cost of Being "Digital-Only"

Beyond the safety and luxury arguments, there is a deeper human truth at play. We are tired of the "frictionless" world. Friction is how we know we’re alive.

It’s the resistance of a steering wheel, the click of a keyboard, the weight of a physical book.

When everything is behind glass, everything feels the same. The seat heaters feel like the Spotify playlist, which feels like the navigation.

**Life becomes a flat, glowing surface.** Mercedes' return to buttons is an admission that we crave texture. We want our tools to feel like tools, not like portals to more software.

By re-introducing physicality, Mercedes is acknowledging that the driver is a participant in the machine, not just a passenger in a mobile office.

They are bringing back the "soul" of the machine by giving us something to hold onto.

Is This a Permanent Reversal?

People ask me if this is just a trend, like the return of vinyl records. I don't think so.

I think we’ve spent the last decade in an "adolescent" phase of tech where we tried to put screens on everything just because we could: refrigerators, toasters, car dashboards.

2026 is the year of **Technological Maturity**. We finally have enough data to say, "Yes, we can put a screen there, but should we?" Mercedes' answer is a resounding "No" for the things that matter.

They are keeping the screens for entertainment and deep-dive settings, but they are handing the cockpit back to the driver.

This changes the competitive landscape entirely. Tesla is now the one looking "old" with its screen-only approach.

Meanwhile, the legacy automakers who were mocked for being "slow to adapt" are suddenly the ones offering the most modern, intuitive user experience.

The New Hierarchy of Interaction

If I were to predict how we’ll be interacting with our tech in 2030, it wouldn't be via a VR headset or a 100-inch screen. It would be a hybrid world that follows a strict hierarchy:

1. **Tactile (Immediate):** Physical buttons for the 5% of things we do 95% of the time.

2. **Voice (Complex/Hands-Free):** For long-form commands and searching while focused elsewhere.

3. **Visual (Deep Dive):** For consumption, entertainment, and complex configuration that happens while stationary.

Mercedes is the first to publicly admit that they got this hierarchy wrong.

They tried to make the "Visual" layer do the work of the "Tactile" layer, and they paid for it in customer satisfaction and safety ratings.
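For the developers reading, the hierarchy above can be sketched as a small routing rule. Treat it as a thought experiment under stated assumptions: the two inputs (`used_constantly` and `vehicle_stationary`) are my own simplification of the three tiers, not anything Mercedes has published.

```python
from enum import Enum


class Modality(Enum):
    TACTILE = 1  # immediate, physical
    VOICE = 2    # complex or hands-free
    VISUAL = 3   # deep dive, only while stationary


def pick_modality(used_constantly: bool, vehicle_stationary: bool) -> Modality:
    """Route an interaction to the highest tier of the hierarchy that fits."""
    if used_constantly:
        # The small set of things we do most of the time gets a button.
        return Modality.TACTILE
    if not vehicle_stationary:
        # Rare or complex requests while driving go through voice.
        return Modality.VOICE
    # Everything else can wait for the screen while parked.
    return Modality.VISUAL


print(pick_modality(used_constantly=True, vehicle_stationary=False).name)
print(pick_modality(used_constantly=False, vehicle_stationary=False).name)
print(pick_modality(used_constantly=False, vehicle_stationary=True).name)
```

The order of the checks is the point: the screen is the fallback, not the default.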

The Final Question

We’ve been living in a world designed for the eyes, neglecting the hands that actually build and navigate our reality. Mercedes is just the first domino to fall.

I expect we'll see a "de-screening" of our homes and offices over the next 24 months as we prioritize mental clarity over digital clutter.

I’m curious—have you noticed your own "glass fatigue" lately?

Have you found yourself reaching for a physical book, a mechanical keyboard, or an analog watch just to feel something that doesn't require a software update?

**Let's talk in the comments: What is the one piece of tech in your life that you wish had a physical button instead of a touchscreen?**

---

From the Author

Hey friends, thanks heaps for reading this one! 🙏

Appreciate you taking the time. If it resonated, sparked an idea, or just made you nod along — let's keep the conversation going in the comments! ❤️