I Tested Midjourney vs A Hammer. The Results Are Actually Shocking.

I spent $3,000 on a piece of broken glass last week. My wife thought I’d finally cracked under the pressure of shipping three AI-native apps in four months.

But after six hours of trying to replicate that specific "shatter" using Midjourney v8 and Claude 4.6, I realized something terrifying about the path we’re on.

**The "art" I bought wasn't painted; it was beaten into existence with a hammer.** The artist is Simon Berger, a man who takes a literal hammer to safety glass to create hyper-realistic portraits.

If he hits it too hard, the glass turns to powder and the piece is ruined. If he’s too timid, there is no image.

I decided to run a benchmark. I wanted to see if the most powerful generative models in February 2026 could replicate the **intentionality of destruction**.

The results weren't just surprising—they exposed the "meaning gap" that is currently swallowing the tech industry whole.

The $3,000 Sheet of Broken Glass

When you stand in front of a Berger piece, your brain experiences a recursive loop. From ten feet away, you see a beautiful, ethereal woman’s face.

As you walk closer, the face dissolves into thousands of jagged, white fractures. You realize you’re looking at **controlled failure**.

I brought a high-res photo of the piece into my workflow. I fed it into Gemini 2.5 to see if it could "understand" the physics of what it was seeing.

"Analyze the light refraction in these fractures," I prompted. The AI gave me a brilliant, multi-paragraph dissertation on how safety glass holds structural integrity under impact.

Then I moved to Midjourney v8.

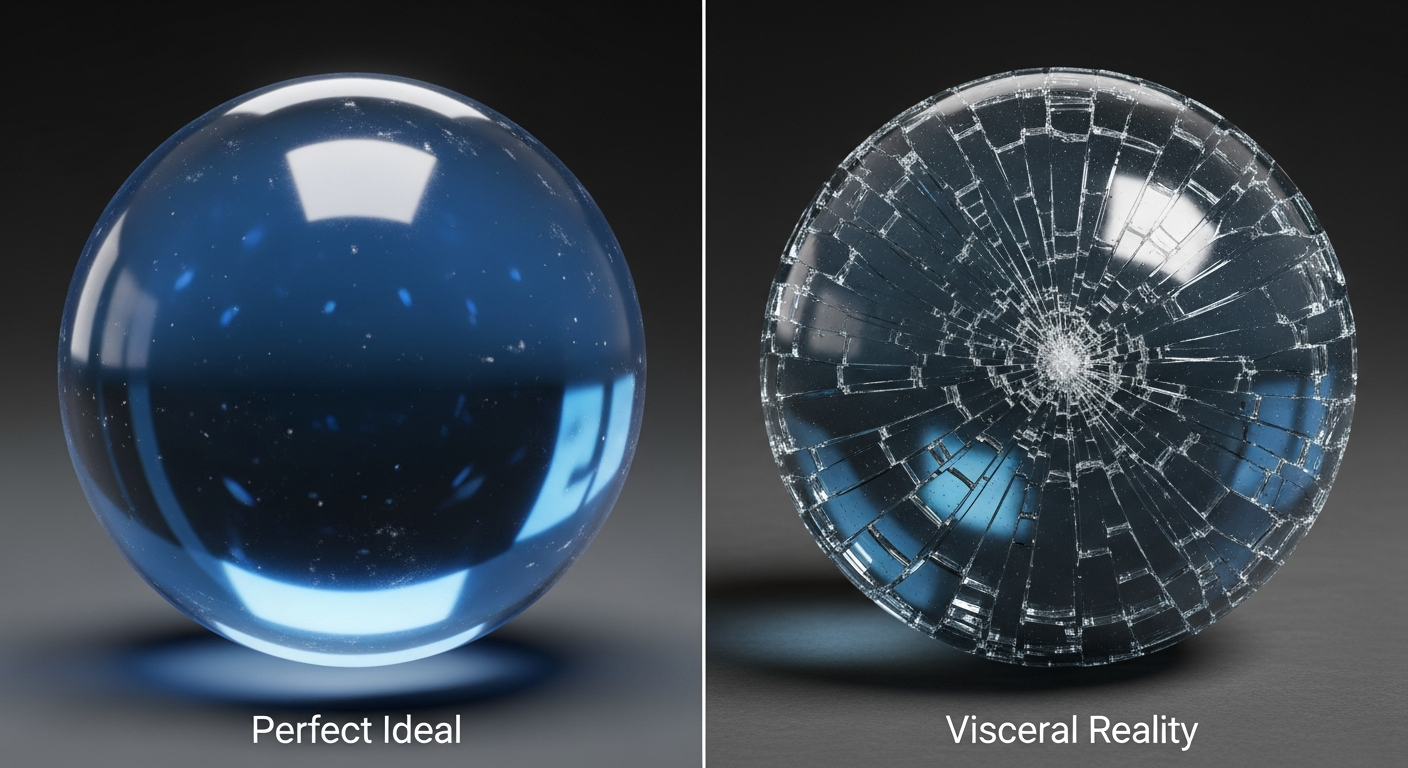

"Create a hyper-realistic portrait of a man, rendered through the medium of shattered safety glass, intentional hammer strikes, high contrast, subtractive lighting." **The result was a beautiful lie.**

Midjourney gave me a "shattered glass" filter over a standard portrait. It looked like a Photoshop layer.

It lacked the one thing that makes the hammer-on-glass work: **the physics of consequence.** In the AI’s version, the cracks didn't follow the laws of impact; they were just "crack-shaped" decorations.

Why Midjourney v8 Can’t “Break” Things Correctly

We are currently living in the "Additive Age." Every tool we’ve built—from Copilot to Midjourney—is designed to add pixels, add lines of code, and add "content" to a void.

**AI is inherently additive.** It builds a world by predicting what should come next based on what already exists.

The hammer, however, is subtractive. It is an instrument of removal. To create a highlight in a glass portrait, the artist must destroy the transparency of that specific spot.

**Every hit is a permanent decision.** There is no `Cmd+Z` when you’re swinging a five-pound mallet at a fragile sheet of glass.

When I tried to force Midjourney to "be subtractive," it struggled. I spent three hours refining the prompt, trying to explain that the *cracks* are the light, and the *unbroken glass* is the shadow.

But the model is trained on billions of images where light is "added" via pigment or light sources. It couldn't grasp a world where "making it better" meant "breaking it more."
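To make that inversion concrete, here is a minimal Python sketch of the idea that the cracks are the light and the intact glass is the shadow. Everything in it is an illustrative assumption, not Berger's process and not anything Midjourney does internally: the `GlassSheet` class, the `strikes_for` helper, and the 0.0 to 1.0 brightness scale are hypothetical names made up for this example.

```python
class GlassSheet:
    """Toy model: a grid of cells that start intact (dark) and can only be broken."""

    def __init__(self, rows, cols):
        # False = intact glass (reads as shadow), True = fractured (reads as light)
        self.broken = [[False] * cols for _ in range(rows)]

    def strike(self, row, col):
        # One-way operation: there is deliberately no method to un-break a cell.
        self.broken[row][col] = True

    def render(self):
        # "#" marks fractured (bright) cells, "." marks intact (dark) cells.
        return ["".join("#" if cell else "." for cell in line) for line in self.broken]


def strikes_for(target, threshold=0.5):
    """Return the cells to hit: every cell whose target brightness exceeds the threshold."""
    return [
        (r, c)
        for r, row in enumerate(target)
        for c, value in enumerate(row)
        if value > threshold
    ]


# Hypothetical 3x4 brightness map (0.0 = shadow, 1.0 = highlight).
target = [
    [0.1, 0.9, 0.8, 0.1],
    [0.2, 0.7, 0.3, 0.0],
    [0.0, 0.1, 0.6, 0.2],
]

sheet = GlassSheet(3, 4)
for r, c in strikes_for(target):
    sheet.strike(r, c)

print("\n".join(sheet.render()))
```

The point of the sketch is the missing method: the sheet can get brighter, but it can never get darker again, so every highlight is a commitment.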

The Cult of the Undo Button

As developers in 2026, we’ve become addicted to the "revert" feature. Our entire professional lives are built on the assumption that nothing is permanent.

We branch, we commit, we PR, and if the production server catches fire, we just roll back to the last stable build.

This has made us incredibly efficient, but it has also made our work feel increasingly "thin." **We have optimized for the lack of consequence.**

When I watched a video of Berger working, I felt a physical sense of anxiety.

He doesn't have a "staging environment." If his hand slips at the 90% mark, 40 hours of work becomes a pile of trash.

I realized that this is why so much AI-generated code feels "hallucinatory." The AI has no "skin in the game." It doesn't know what it’s like to fear a mistake.

It produces 1,000 lines of Python with the same casual indifference that it produces a recipe for vegan lasagna.

It doesn't understand that in a mission-critical system, **one wrong character is a hammer strike that shatters the whole sheet.**

The Physics of Consequence

I took the experiment a step further. I asked Claude 4.6 to write a simulation that would mimic the stress fractures of a hammer strike on glass.

I wanted to see if I could "procedurally generate" a Berger.

Claude was brilliant. It wrote a complex physics engine using WebGL that simulated the energy dissipation of a 4,000-newton strike on laminated safety glass. The simulation was beautiful.

It looked "real." But as I sat there watching the digital cracks spider across my 8K monitor, I felt... nothing.

The "shocking" result of my test wasn't that the AI couldn't do it. It was that **the AI's version was too perfect.** The "mistakes" in the digital simulation were actually calculated parameters.

The "randomness" was a seed.
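Here is a rough Python sketch of what "the randomness was a seed" means in practice. It is not the WebGL engine described above, and it models no real glass physics; `crack_path`, its parameters, and the random-walk approach are all assumptions chosen only to show that a seeded simulation replays the same "accident" on demand.

```python
import math
import random


def crack_path(seed, steps=20, max_turn_deg=15.0):
    """Grow one crack from the origin as a random walk with small angular jitter."""
    rng = random.Random(seed)  # all of the "chance" in this crack comes from this seed
    x, y = 0.0, 0.0
    heading = rng.uniform(0.0, 2.0 * math.pi)
    points = [(x, y)]
    for _ in range(steps):
        # Each step veers by a small random angle, like a crack meeting resistance.
        heading += math.radians(rng.uniform(-max_turn_deg, max_turn_deg))
        x += math.cos(heading)
        y += math.sin(heading)
        points.append((x, y))
    return points


first = crack_path(seed=42)
replay = crack_path(seed=42)
other = crack_path(seed=43)

print(first == replay)  # same seed: the crack is reproduced point for point
print(first == other)   # different seed: a different crack
```

Every veer in that path can be replayed, inspected, and regenerated, which is exactly what a real sheet of glass never allows.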

In the physical world, the hammer hits a microscopic impurity in the glass that the artist couldn't see. The crack veers 2 degrees to the left.

The artist has to pivot his entire strategy to hide that "error." **That pivot is where the "art" actually happens.** AI doesn't pivot; it just regenerates.

Subtractive Thinking in an Additive World

If you’re a developer reading this in 2026, you’re likely feeling the "Midjourney Fatigue." You can generate a full-stack app in six minutes using a single prompt in Cursor.

But does that app feel like it has a soul? Or does it feel like a "broken glass filter" applied over a generic template?

We need to start bringing the "Hammer Mentality" back into our engineering. We’ve spent the last three years letting AI **add** more and more complexity to our stacks.

We have "agentic workflows" managing our "vector databases," which are indexed by "automated LLM pipelines." It’s a mountain of additive noise.

The "senior" engineer of the next decade won't be the one who can prompt the most code. It will be the one who knows where to hit the glass to **remove the junk.**

**True engineering is subtractive.** It’s about finding the one line of code that makes the other 1,000 lines unnecessary.

It’s about having the courage to "break" a legacy system because you know exactly how the fractures will form.

What This Means for Your Career in 2027

By this time next year, the "content" problem will be solved. We will have more "perfect" images, "perfect" videos, and "perfect" code than we can consume in ten lifetimes.

And because it's all "perfect," it will all be worthless.

The market value of "perfectly generated" output is trending toward zero. **The value is moving back toward "Friction."** People are starting to crave the jagged edges.

They want to know that a human being took a risk. They want to see the "shatter" and know that it could have gone wrong.

I’m calling this the **Subtractive Pivot**.

In my own work, I’ve stopped using AI to "write more code." I now use it as a "hammer simulator." I ask it: "If I delete this entire module, where will the cracks appear?" I use it to find the fragility in my systems so I can decide where to strike.
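The same question can be sketched without an LLM at all. The Python below is a minimal illustration, assuming you already have an import graph for your project; the `IMPORTS` dictionary and the `cracks_from_deleting` function are hypothetical names made up for this example, not part of any real tool.

```python
from collections import deque

# Hypothetical import graph: each module maps to the modules it imports.
IMPORTS = {
    "api": ["auth", "billing"],
    "auth": ["db"],
    "billing": ["db", "email"],
    "email": [],
    "db": [],
    "reports": ["billing"],
}


def cracks_from_deleting(module, imports):
    """Return every module that depends, directly or transitively, on `module`."""
    # Invert the graph: for each module, record who imports it.
    importers = {name: set() for name in imports}
    for name, deps in imports.items():
        for dep in deps:
            importers.setdefault(dep, set()).add(name)

    # Breadth-first walk outward from the deleted module through its importers.
    affected = set()
    queue = deque([module])
    while queue:
        current = queue.popleft()
        for parent in importers.get(current, ()):
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return sorted(affected)


print(cracks_from_deleting("email", IMPORTS))
print(cracks_from_deleting("db", IMPORTS))
```

In this toy graph, deleting `email` breaks three modules and deleting `db` breaks four. A static walk like this only finds the cracks that follow the import lines; the ones that come from runtime behavior are where you still have to swing and see.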

The Results Are Actually Shocking

The "shocking" conclusion of my Midjourney vs. Hammer test was this: **Midjourney is a mirror, but the hammer is a window.**

The AI reflects back to us a sanitized, averaged version of our own collective past. It shows us what we expect to see.

The hammer, through the act of destruction, reveals something that wasn't there before. It creates a "highlight" by breaking the surface.

I still use Midjourney every day. I still use Claude 4.6 for 90% of my architecture. But I keep a small, physical piece of that shattered glass on my desk.

It’s a reminder that **the most beautiful things I will ever build are the ones I have the courage to break.**

We are so focused on "generating" the future that we’ve forgotten how to "carve" it. Don't let your career become a "shattered glass filter" over a generic life. Pick up the hammer.

Make a permanent decision. Be willing to ruin the piece.

**Have you noticed your work feeling "thinner" as you automate more of it? Are we losing the "physics of consequence" in our code? Let’s talk about the "friction gap" in the comments.**
