I Replaced Hollywood’s AI With My Astrophotography. This Changes Everything.

I told a Hollywood producer his AI was "stochastic garbage." I didn’t mean to be that blunt, but when you spend forty hours processing a single frame of the Andromeda Galaxy from a mountaintop in the Mojave, you develop a very low tolerance for synthetic shortcuts.

It was late 2025, and *Project Hail Mary* was in deep post-production.

I had been brought in as a consultant on the "technical realism" of the spacecraft’s infrastructure, but my eyes kept wandering to the windows of the digital set.

The starfields were being generated by a custom-tuned version of Sora 3, and to the average viewer, they looked spectacular.

**But to a scientist or an obsessive astrophotographer, they were physically impossible.** The colors were shifted toward a cinematic teal that doesn't exist in a vacuum, and the gravitational lensing around the sun showed hallucinated patterns that defied general relativity.

I realized right then that the "AI revolution" in filmmaking had hit a massive, invisible wall: it can mimic the *look* of reality, but it has no respect for the *rules* of it.

The Night the Stars Went Flat

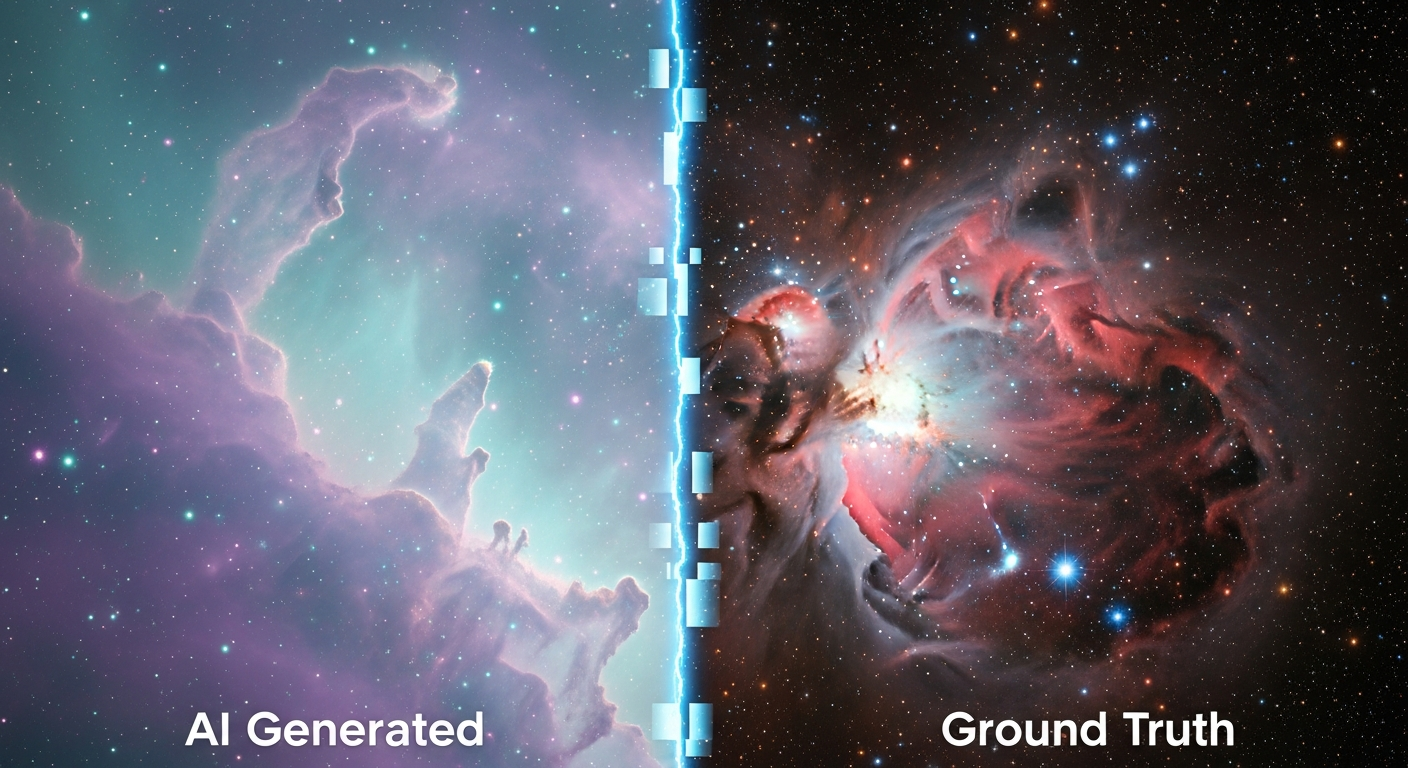

The problem with AI-generated space is that models like ChatGPT 5 or Claude 4.6 are trained on images that have already been "beautified" by human editors.

They see a nebula and think "purple clouds," not "hydrogen-alpha emission from ionized gas shaped by a specific stellar wind." When the VFX team showed me their "deep space" renders for the Tau Ceti system, my heart sank.

**The AI had "hallucinated" a galaxy that looked like a desktop wallpaper from 2012.** It was too smooth, too symmetrical, and lacked the raw, jagged "noise" of actual photons hitting a CMOS sensor.

As an infrastructure engineer, I’m used to dealing with "ground truth"—if the logs say a server is down, it’s down. The AI, however, was trying to tell me the server was "artistically offline."

I went home that night and pulled up my own archives. Over the last three years, I’ve spent my weekends building a data pipeline that most small companies would envy.

I have roughly 12 terabytes of raw FITS files—uncompressed, high-bit-depth astronomical data captured through a 130mm refractor.

**I decided to stage a coup.** I replaced their synthetic background with a 64-panel mosaic I’d shot of the Cygnus Loop and sent it back to the director without a note.

Why AI Can’t Do Physics (Yet)

The reaction was instantaneous.

The director didn't know *why* it looked better; he just knew it felt "heavier." Space in movies often feels like a backdrop, but in *Project Hail Mary*, the isolation of the protagonist depends on the stars feeling cold, distant, and mathematically indifferent.

**Synthetic models struggle with "High Dynamic Range" in a way that’s hard to quantify until you see the real thing.** In a real astrophoto, a star is a pinpoint of light with a specific color temperature, measured in kelvin.

AI tends to "bloom" everything, turning every star into a soft-focus glow.

This is fine for a perfume commercial, but it kills the immersion of a hard sci-fi epic where the stars are the only thing keeping the character sane.
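One rough way to put numbers on that difference is to compare a pinpoint star against a blurred copy of itself. The sketch below is a toy model, not anything from the film's pipeline: the 21-pixel row, the background level of 0.001, and the 5-tap binomial kernel standing in for "bloom" are all assumptions chosen for illustration.

```python
import math

def bloom(row, kernel):
    """Convolve a 1-D pixel row with a normalized kernel, clamping at the edges."""
    half = len(kernel) // 2
    out = []
    for i in range(len(row)):
        acc = 0.0
        for k, weight in enumerate(kernel):
            j = min(max(i + k - half, 0), len(row) - 1)
            acc += weight * row[j]
        out.append(acc)
    return out

def contrast_stops(row):
    """Brightest-to-darkest ratio of the row, expressed in photographic stops."""
    return math.log2(max(row) / min(row))

def width_at_half_max(row):
    """Count the pixels at or above half the star's height over the background."""
    background = min(row)
    half_level = background + (max(row) - background) / 2
    return sum(1 for value in row if value >= half_level)

# Hypothetical scene: a dark sky with one single-pixel star in the middle.
background, star = 0.001, 1.0
sharp = [background] * 21
sharp[10] = star

# Assumed stand-in for a soft-focus glow: a small binomial (Gaussian-like) blur.
kernel = [1 / 16, 4 / 16, 6 / 16, 4 / 16, 1 / 16]
soft = bloom(sharp, kernel)

print("sharp:", width_at_half_max(sharp), "px wide,",
      round(contrast_stops(sharp), 2), "stops")
print("soft: ", width_at_half_max(soft), "px wide,",
      round(contrast_stops(soft), 2), "stops")
```

In this toy case the blurred star spreads from one pixel to three at half maximum and gives up more than a stop of peak contrast, even though the total light in the row is unchanged.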

When we look at the output of something like Sora 3 or Gemini 2.5, we’re seeing a statistical average of what the internet thinks space looks like.

**The internet is wrong.** The internet thinks space is colorful and crowded. The reality is that space is 99.9% terrifying darkness punctuated by extremely specific, sharp-edged structures.

By injecting my raw sensor data into their pipeline, we weren't just "changing the background"—we were recalibrating the movie’s entire physical reality.

The 400GB "Ground Truth" Handshake

The real challenge wasn't the art; it was the infrastructure. Hollywood VFX pipelines are built for speed and iteration, usually dealing with compressed proxies until the final render.

**My astrophotography files were 16-bit raw data with no compression.** Each frame was a monster.

I had to build a custom bridge between my S3 buckets and their rendering farm. We used a Python-based preprocessing layer to convert my FITS files into OpenEXR format, preserving the linear light data.

This allowed the compositors to "exposure up" into the shadows of a nebula without seeing the "banding" or "blocky artifacts" that plague AI-generated assets.
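To see why keeping the linear data matters, here is a minimal sketch of the arithmetic, not the actual converter. It assumes a hypothetical shadow gradient of 16-bit sensor codes and a 4-stop exposure push, and it skips file I/O entirely: a real pipeline would read the FITS frames and write the EXR files with dedicated libraries, which are not shown here.

```python
def to_linear_float(sample, bit_depth):
    """Map an integer sample to a linear float in [0.0, 1.0]."""
    return sample / ((1 << bit_depth) - 1)

def quantize(value, bit_depth):
    """Round a linear float in [0, 1] to the nearest integer code at bit_depth."""
    max_code = (1 << bit_depth) - 1
    clamped = min(max(value, 0.0), 1.0)
    return round(clamped * max_code)

def push_exposure(value, stops):
    """Brighten a linear value by a number of stops (each stop doubles it)."""
    return value * (2.0 ** stops)

# Hypothetical shadow region: the darkest 1024 codes of a 16-bit frame.
shadow_codes = range(1024)
linear = [to_linear_float(code, 16) for code in shadow_codes]

STOPS = 4  # assumed grade: lift the shadows by four stops

# Path A: stay in floating-point linear light, then push the exposure.
pushed_float = [push_exposure(v, STOPS) for v in linear]

# Path B: squeeze through an 8-bit proxy first, then push the exposure.
proxy = [to_linear_float(quantize(v, 8), 8) for v in linear]
pushed_proxy = [push_exposure(v, STOPS) for v in proxy]

distinct_float = len(set(pushed_float))
distinct_proxy = len(set(pushed_proxy))

print("distinct shadow levels, float path:", distinct_float)  # 1024
print("distinct shadow levels, 8-bit path:", distinct_proxy)  # 5
```

The float path keeps all 1024 shadow levels after the push, while the 8-bit path collapses the same region into 5 steps. Those few steps, stretched across a smooth gradient, are what shows up on screen as banding.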

**We were essentially using my telescope as a remote sensor for the movie’s "world-building" engine.** Instead of asking an LLM to "generate a realistic starfield," we were querying a database of actual photons that had traveled for thousands of years before hitting my sensor.

This is the difference between a "prompt" and "provenance." In 2026, as the world becomes flooded with synthetic "slop," provenance is the only thing that will hold value.

The Infrastructure of Authenticity

This experience changed how I think about my day job as an infrastructure engineer. We are currently obsessed with "generative" everything, but we’re neglecting the "capture" side of the house.

**We are building massive processing plants for data but forgetting to check if the water we’re pumping in is actually clean.**

If you’re a developer working with AI today, you’ve probably noticed that Claude 4.6 or ChatGPT 5 can write a "good enough" function in seconds.

But "good enough" is the enemy of production-grade systems. Just like the AI-generated stars lacked the "grit" of reality, AI-generated code often lacks the "context" of your specific infrastructure.

It doesn't know about your latency spikes, your legacy technical debt, or the weird way your load balancer handles header injection.

**We are entering the era of "The Last 5%."** AI will get you 95% of the way there—whether it’s a movie background or a microservice architecture—faster than any human ever could.

But that final 5%, the part that makes a movie "breathtaking" or a system "unbreakable," still requires ground-truth data and human obsession.

You cannot "prompt" your way into the depth of a 40-hour exposure.

Moving Beyond the Prompt

By the time we finished the post-production for the Tau Ceti sequences, we had completely scrapped the AI starfields.

Every single point of light in those scenes is now backed by a real pixel captured by a real person.

**The "cost" was higher in terms of data transfer and processing time, but the "value" was incomparable.**

The lesson for the tech industry is clear: Don't let the ease of generation make you lazy about the quality of your inputs.

Whether you’re building an LLM-powered app or a VFX pipeline, the "magic" doesn't happen in the model; it happens in the delta between what the model *expects* and what the real world *provides*.

**We shouldn't be using AI to replace reality; we should be using it to frame reality better.** I used my astrophotography to "ground" the AI’s work, using the synthetic models only for things like motion blur and light-wrap, while keeping the "core" of the image authentic.

This hybrid approach is the future of every creative and technical field.

The Reality Check for 2027

As we look toward 2027, the "uncanny valley" is going to get wider, not narrower.

As AI models start training on other AI models’ outputs (a terrifying feedback loop we’re already seeing), the value of "captured" data, such as photos, handwritten logs, and physical sensor readings, will skyrocket.

**If you have a hobby that involves capturing real-world data, don't stop.** Your raw, "noisy" human data is about to become the most valuable resource on the planet.

I’m still an infrastructure engineer by trade, but I now see my telescope as a vital part of my tech stack.

It’s a "truth-generator" in a world of "probability-engines." When the movie finally hits theaters, most people will just see a beautiful sci-fi story.

But I’ll be looking at the pinpoints of light in the corner of the frame, knowing exactly which mountain I was standing on when those photons finally ended their journey.

**Have you noticed your "BS meter" going off more often with AI content lately, or is it just me? Are we losing the "weight" of reality in our rush to automate everything? Let’s talk in the comments.**
