Google's $2B Bet on Anthropic: Why Gemini is Still Struggling to Catch Up

I watched Google’s stock price wobble as the full scale of their commitment to Anthropic became clear.

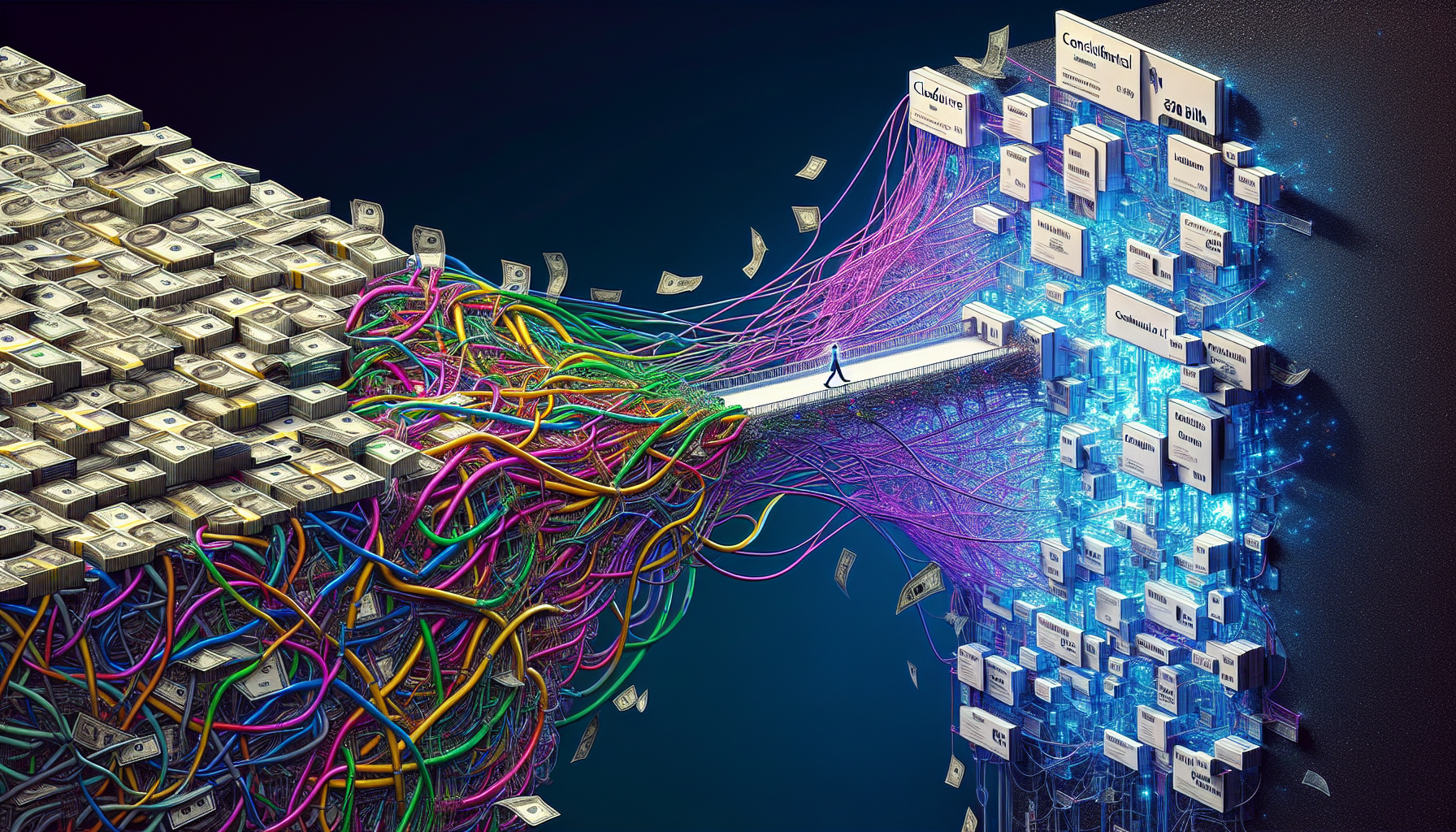

A $2 billion investment in a direct competitor isn't just a strategic partnership; it’s a hedge against internal friction.

After spending the last decade building production infrastructure, I’ve learned that you don't commit the equivalent of a small nation's GDP to a competitor's tech unless your own primary project is facing structural hurdles.

**Google’s pivot suggests that Gemini 1.5 Pro may be hitting a technical plateau for specific engineering workloads.** I’ve spent the last few weeks stress-testing Gemini 1.5 Pro alongside Claude 3.5 Sonnet in a high-stakes Kubernetes migration, and the results were more than just disappointing—they were revealing.

While the marketing slides promise "unprecedented reasoning," the actual dev experience in complex, multi-step environments often falls short of the precision required for production-level DevOps.

If you’re still building your roadmap around the assumption that Google will eventually "catch up" simply because they have the most compute, you’re missing the signal.

Here is the raw truth about why the world’s most powerful search company is diversifying its AI portfolio, and what it means for your stack.

The $2 Billion Signal of Diversification

In the world of infrastructure engineering, we have a term for this: **The Strategic Hedge.** For over three years, Google has been telling us that their vertically integrated stack—TPUs, massive data lakes, and the legacy of the Transformer paper—would make them the undisputed leader of the LLM era.

They’ve poured billions into the Gemini project, rebranding and refactoring at a dizzying pace.

Yet, the expansion of their partnership with Anthropic suggests that the "everything-at-once" philosophy of the Gemini series is struggling to dominate the high-logic engineering market.

When I ran a complex Terraform refactor through Gemini 1.5 Pro recently, it struggled with provider-specific attributes and suggested configurations that would be risky in a production RDS environment.

**Claude 3.5 Sonnet didn't just get the code right; it identified architectural risks I hadn't explicitly mentioned.** That gap in "judgment" is exactly what Google is looking to bring into its ecosystem.

They aren't just buying Anthropic’s users; they are investing in the "Constitutional AI" logic that has made Claude the preferred choice for many senior engineers who prioritize accuracy over conversational polish.

Why My Production Logs Don’t Lie

I don't care about MMLU benchmarks or HumanEval scores that RLHF teams can optimize for. I care about latency, token stability, and logical consistency under pressure.

In 2026, we should be past the era of "vibes-based" AI evaluation, yet Gemini 1.5 Pro still feels like it’s struggling to maintain context in deep-stack debugging.

In a recent test, I tasked Gemini 1.5 Pro and Claude 3.5 Sonnet with the same objective: **Scan a massive volume of Prometheus metrics and identify a silent memory leak in a Go microservice.**

1. **Gemini 1.5 Pro** gave me a professional-looking summary, but it was technically imprecise. It pointed to a garbage collection spike that was only a symptom, missing the actual root cause in the `sync.Pool` management.

2. **Claude 3.5 Sonnet** caught the leak almost immediately. It even pointed to the exact block where a pointer was escaping from the pool.
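To make the symptom-versus-root-cause distinction concrete, here is a minimal sketch of a check that separates a one-off spike from sustained heap growth. It assumes you have already exported `(timestamp, heap_bytes)` samples from Prometheus, for example from a heap gauge such as `go_memstats_heap_inuse_bytes`. The function names and the threshold are hypothetical and are not taken from the test described above.

```python
from statistics import mean
from typing import List, Tuple

Sample = Tuple[float, float]  # (unix timestamp in seconds, heap bytes in use)


def heap_growth_slope(samples: List[Sample]) -> float:
    """Least-squares slope of heap usage over time, in bytes per second."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    t_bar = mean(t for t, _ in samples)
    y_bar = mean(y for _, y in samples)
    denom = sum((t - t_bar) ** 2 for t, _ in samples)
    if denom == 0:
        raise ValueError("timestamps must not all be equal")
    return sum((t - t_bar) * (y - y_bar) for t, y in samples) / denom


def looks_like_leak(samples: List[Sample], min_slope: float = 1024.0) -> bool:
    """Flag sustained growth; min_slope (bytes/s) is an assumed threshold."""
    return heap_growth_slope(samples) > min_slope
```

A symmetric spike that returns to baseline produces a slope near zero, while a leak produces a steady positive slope. This only tells you that memory is growing; finding the offending `sync.Pool` usage still takes profiling or code review.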

Google’s engineers have likely seen similar results in their own internal testing.

When a company with Google’s resources decides to deeply integrate a competitor's model into its cloud offering (Vertex AI), it’s because they recognize that different architectures excel at different tasks.

The Architectural Challenge of ‘Everything-at-Once’

The fundamental problem with Gemini is its heritage. It was built to be a multimodal powerhouse—optimized for YouTube summaries, Gmail drafts, and search snippets.

But in the process of making it a jack-of-all-trades, Google created a model that sometimes struggles with the rigid, multi-step logic required for high-stakes engineering.

Anthropic took a different path. They focused on structured reasoning pathways and safety frameworks that prioritize correctness.

This is why Claude can handle large context windows with remarkable stability, avoiding the "lost in the middle" phenomenon that has historically plagued long-context models.

**Google is optimized for scale and engagement; Anthropic is optimized for precision.** In 2026, we don’t just need an AI that sounds like a helpful assistant.

We need an AI that performs like a Senior Principal Engineer. Google’s multi-billion dollar bet is a recognition of this "logic gap" in the enterprise market.

The Reality of Cloud Flexibility

If you’ve been migrating your workflows to Vertex AI, this Anthropic deal is actually a benefit, provided you use it correctly. It means Google is admitting that one model doesn't fit every use case.

However, it also suggests that the primary Gemini engine isn't the "one model to rule them all" we were promised.

**Gemini is becoming the consumer face of Google AI.** It’s being integrated into every corner of Google Workspace, but the core engine isn't yet winning over the developers who build mission-critical systems.

If you are locked into a Gemini-only workflow for your backend logic, you are likely missing out on the superior reasoning capabilities available via the Anthropic models hosted on the same platform.

I’ve been advising technical leaders to decouple their LLM logic from their cloud provider's default model. Using Gemini just because you’re on GCP is a mistake.

True resilience means running critical logic through the model that actually understands your codebase, whether that's Claude, a specialized fine-tuned model, or a multi-model orchestration layer.
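Here is a minimal sketch of what that decoupling can look like in application code. It assumes each backend is wrapped in a plain function from prompt to completion. `ModelRouter`, the task kinds, and the backend names are all hypothetical, and the real SDK calls would live inside the functions you register.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# A backend is anything that maps a prompt to a completion string.
ModelFn = Callable[[str], str]


@dataclass
class ModelRouter:
    """Routes each kind of task to a named backend, with a fallback default."""

    default: str
    backends: Dict[str, ModelFn] = field(default_factory=dict)
    routes: Dict[str, str] = field(default_factory=dict)

    def register(self, name: str, fn: ModelFn) -> None:
        self.backends[name] = fn

    def route(self, task_kind: str, backend_name: str) -> None:
        if backend_name not in self.backends:
            raise KeyError(f"unknown backend: {backend_name}")
        self.routes[task_kind] = backend_name

    def complete(self, task_kind: str, prompt: str) -> str:
        # Unrouted task kinds fall back to the default backend.
        name = self.routes.get(task_kind, self.default)
        return self.backends[name](prompt)
```

Because callers only ever name a task kind, swapping the model behind "infra_review" becomes a one-line routing change instead of a refactor.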

Constitutional AI vs. Predictive Patterns

The most important part of this deal is the focus on safety and reliability. Anthropic’s "Constitutional AI" allows for a model that understands its own boundaries.

When I push Claude 3.5 Sonnet to perform a task without sufficient data, it is more likely to identify the missing information than to hallucinate a plausible-sounding answer.

**Gemini, by contrast, is often biased toward being helpful.** It sometimes hallucinates solutions because its training incentivizes a "high-quality response" over a technically accurate "I don't know." In a DevOps environment, a wrong "Yes" is infinitely more expensive than a right "No."

Google is realizing that their reward models need the type of logical grounding Anthropic has pioneered.

They are buying into Anthropic’s reliability because they haven't yet solved the hallucination problem in their own massive, multimodal models.

The Action Plan for Technical Leaders

Don't wait for Google to bridge the gap. If you’re an engineer or a technical leader, you need to adjust your strategy to reflect the reality that we are in a multi-model world.

1. **Benchmark Your Logic:** Don't just look at the UI. Run your most complex system prompts through Gemini 1.5 Pro and Claude 3.5 Sonnet side-by-side. If Gemini is missing the edge cases, your team is wasting time on silent bugs.

2. **Use Vertex AI as a Multi-Model Hub:** If you're on GCP, take advantage of the fact that Google is hosting Claude. Move your critical reasoning tasks to the Anthropic models while using Gemini for lower-stakes tasks like summarization or draft generation.

3. **Stop the Single-Vendor Mindset:** The $2 billion bet proves that even Google knows one company can't win every category of AI. Your infrastructure should reflect that same diversity.
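The side-by-side benchmark in step 1 can be sketched as a small harness. This is only an illustration under stated assumptions: each model is wrapped in a hypothetical prompt-to-text function, and each test case pairs a prompt with a checker you write for your own edge cases. Nothing here calls a real API.

```python
from typing import Callable, Dict, List, Tuple

ModelFn = Callable[[str], str]
# A case is (prompt, checker); the checker returns True if the output passes.
Case = Tuple[str, Callable[[str], bool]]


def run_side_by_side(
    models: Dict[str, ModelFn], cases: List[Case]
) -> Dict[str, float]:
    """Run every case against every model and return each model's pass rate."""
    if not cases:
        raise ValueError("need at least one test case")
    results: Dict[str, float] = {}
    for name, call in models.items():
        passed = sum(1 for prompt, check in cases if check(call(prompt)))
        results[name] = passed / len(cases)
    return results
```

Writing the checkers forces you to spell out what "correct" means for your stack, which replaces vibes-based evaluation with something you can rerun whenever a model version changes.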

**Google’s investment isn't just about the future; it's about staying relevant in the present.** They have the infrastructure and the chips, but they’ve lost the architectural lead in specialized reasoning.

For the developers on the ground, the message is clear: The best tool for the job might not be the one with the biggest logo on the console.

Gemini is a beautiful interface over a foundation that is still being rebuilt. Google knows it. Anthropic knows it. And now, you know it too.

**Have you noticed a discrepancy between Gemini's multimodal features and its technical reasoning, or is your stack performing as expected? Let's discuss the benchmarks in the comments.**
