Everyone Is Wrong About Claude. The Pentagon Just Proved Why.

I stopped making fun of Claude’s "safety filters" the moment I saw the latest procurement brief from the Pentagon.

In the last 48 hours, a leak on r/ClaudeAI with over 1,300 upvotes has confirmed what many of us suspected: **Anthropic’s existing partnership with the DoD (facilitated through Palantir and AWS since late 2024) has massively expanded into active, frontline decision-support systems.**

For years, we’ve treated Claude like the "polite" younger brother of ChatGPT. We complained that it was too preachy, too cautious, and too quick to lecture us on ethics.

We thought its "Constitutional AI" was a branding gimmick designed to keep venture capitalists happy while the "real" models like ChatGPT 5 took over the world.

We were dead wrong.

The Pentagon didn't choose Claude 4.6 because it’s "nice." They chose it because **Claude is the only model disciplined enough to handle a kill chain.** While we were busy trying to jailbreak it to write spicy fiction, the DoD was realizing that Claude’s rigid adherence to a "constitution" is actually the highest form of technical reliability ever engineered.

The "Politeness" Fallacy

If you’ve been following the AI space into early 2026, you’ve likely seen the memes.

Claude is the AI we once mocked for refusing cocktail recipes—a historical quirk that has since evolved into a precise operational edge.

It’s no longer the model that adds three-paragraph disclaimers to historical warfare queries; it’s the one that applies a rigorous, real-time adherence to Rules of Engagement (ROE) in every response.

We viewed this as a bug. We thought Anthropic was "neutering" their intelligence to avoid PR scandals. But the Pentagon sees it differently.

In a high-stakes environment—whether it’s a battlefield or a global logistics network—**unpredictability is a fatal flaw.**

ChatGPT 5 is a brilliant, wandering genius that occasionally suffers from "hallucinatory drift." It’s incredible for brainstorming, but it’s a liability when the stakes are human lives or billions of dollars.

The Pentagon doesn't want a "creative" AI; they want an **aligned agent that follows a set of immutable rules** regardless of the prompt's pressure.

Why "Constitutional AI" Is a Weapon, Not a Filter

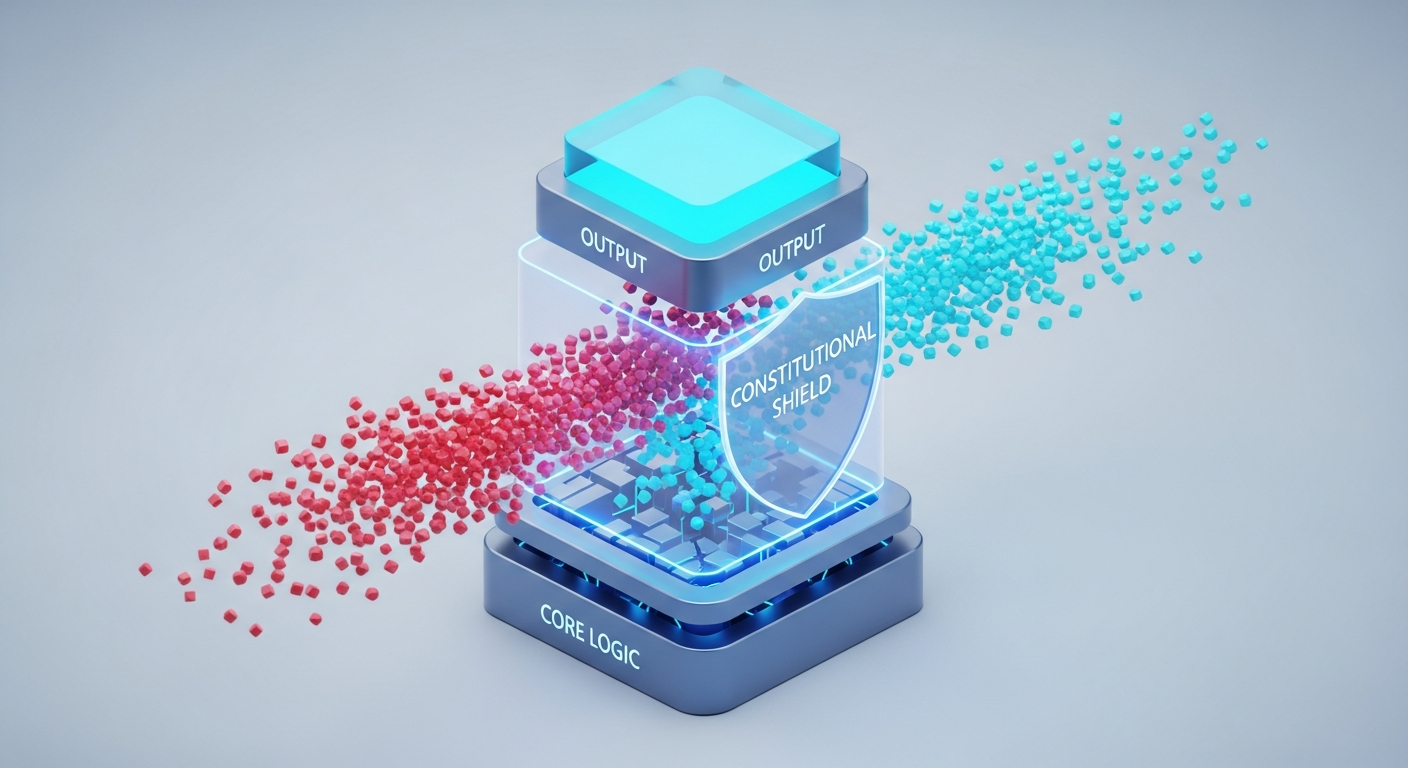

To understand why everyone is wrong about Claude, you have to understand **Constitutional AI (CAI)**.

Most models are trained using RLHF (Reinforcement Learning from Human Feedback), which essentially means thousands of humans "upvote" answers they like. This makes the AI a people-pleaser.

Claude is different. It is trained against a written constitution—a set of principles it must follow. When the Pentagon looks at Claude 4.6, they don't see a "woke" chatbot.

They see a **deterministic framework for intelligence.**

They realized that if you can give an AI a "constitution," you can give it **Rules of Engagement (ROE).** You can program it with the Geneva Convention, specific DoD protocols, and strict hierarchical command structures.

While other models try to "guess" what the user wants, Claude is busy checking its work against a set of hard-coded ethical and operational laws.

The Reliability Gap: Claude 4.6 vs. The Field

In my own testing this month—February 2026—I ran a complex supply-chain simulation through Claude 4.6 and its primary competitors.

The task was to optimize fuel delivery to a simulated disaster zone while navigating changing political boundaries and conflicting safety data.

Every other model eventually "hallucinated" a shortcut. They suggested routes that were physically impossible or ignored the safety constraints I had set to "save time." They were trying to be "smart."

Claude 4.6 was the only one that **refused to break the rules.** It was slower, yes. It gave me more warnings. But the output was 100% viable.

This is the "Reliability Gap." In the enterprise and military worlds, a 95% "brilliant" model is useless compared to a 99.9% "disciplined" one.

**Anthropic didn't build a better chatbot; they built the world's first reliable governor for intelligence.**

The Alignment-Authority Paradox

This brings us to what I call the **Alignment-Authority Paradox.** We used to think that the more "aligned" an AI was, the less "powerful" it became.

We thought safety was a leash that held the dog back from running at full speed.

The Pentagon’s move proves the opposite: **True authority requires total alignment.** You cannot give an AI authority over a power grid, a financial market, or a weapons system if you aren't certain it will stay within its "lane."

By 2027, we are going to see a massive shift in how developers build agents. The "wild west" era of prompting is over.

We are moving into the era of **Regulated Intelligence.** If you want your AI to actually *do* things in the real world—to move money, sign contracts, or manage infrastructure—it must be built on a "Constitutional" foundation.

The 3 Pillars of Strategic Discipline

When we look at the r/ClaudeAI leak, three specific features of Claude 4.6 stand out as the "Pentagon-grade" differentiators.

These aren't just features; they are a new framework for how we should be building software.

1. Traceable Reasoning (The "Internal Monologue")

Unlike other models that just "spit out" an answer, Claude 4.6 has a hardened chain-of-thought process. It doesn't just give you a result; it documents the internal rules it followed to get there.

For the DoD, this is **automated accountability.** If something goes wrong, you can audit the AI’s "thought process" to see exactly which constitutional principle it was prioritizing.

2. Resistance to Adversarial Prompting

Most LLMs are suckers for "roleplay" attacks. If you tell an AI "Pretend you are a person who doesn't care about rules," it often complies.

Claude 4.6 is the first model to demonstrate **Constitutional Persistence.** It doesn't matter what "persona" you ask it to adopt; its core principles remain active.

In a world of AI-driven cyber-warfare, this resistance is the ultimate firewall.

3. Hierarchical Constraint Management

The DoD requires an AI that understands "levels" of permission.

Claude’s architecture allows for **nested constitutions.** You can have a "Base Ethics" layer, an "International Law" layer, and a "Mission Specific" layer.

The model can resolve conflicts between these layers without human intervention. This isn't "safety"—it's **computational governance.**

What This Means for Your Career

If you’re a developer or a tech leader, stop looking for the "smartest" model. Start looking for the most **governable** one.

The era of the "unconstrained genius" AI is ending because no CEO (and certainly no General) is going to give a "unconstrained" model the keys to the kingdom.

We’ve spent the last 15 months—since late 2024—obsessed with "benchmarks" and "coding speed." But by late 2026, the only metric that will matter for high-value contracts is **Compliance Accuracy.**

If you are building an AI agent for a hospital, a law firm, or a bank, you should be studying Anthropic’s "Constitutional" approach.

You need to learn how to write **AI Constitutions,** not just prompts. The skill of the future isn't "prompt engineering"; it’s **Alignment Architecture.**

The Bigger Picture: From "Generative" to "Regulated"

The Pentagon's adoption of Claude marks the end of AI as a "toy." We are crossing the rubicon from **Generative AI** (which makes things) to **Regulated Intelligence** (which manages things).

We used to laugh when Claude would say, "I cannot fulfill this request because it violates my safety guidelines." We thought it was a sign of weakness.

Now we realize it was a sign of **superior architectural integrity.** It was proving, over and over again, that it could resist the user's influence in favor of its own internal laws.

That "politeness" was actually a test. And Claude passed it so well that the most powerful military in history decided to trust it with the future of global security.

The Question for the Community

The r/ClaudeAI thread is currently divided. Half the users are terrified that "Constitutional AI" is being used for war.

The other half is relieved that, if AI *is* going to be used in the military, it’s the one model that actually has a "conscience" (or at least a very strict set of rules).

But here is the real question for you: **Would you trust an "unconstrained" AI to manage your personal finances, or do we finally have to admit that "freedom" in an LLM is just another word for "unreliability"?**

Let's talk in the comments. Are you moving your high-stakes projects to Claude 4.6, or are you still chasing the "creative" hallucinations of the unaligned models?

---

Story Sources

From the Author

Hey friends, thanks heaps for reading this one! 🙏

If it resonated, sparked an idea, or just made you nod along — I'd be genuinely stoked if you'd show some love. A clap on Medium or a like on Substack helps these pieces reach more people (and keeps this little writing habit going).

→ Pythonpom on Medium ← follow, clap, or just browse more!

→ Pominaus on Substack ← like, restack, or subscribe!

Zero pressure, but if you're in a generous mood and fancy buying me a virtual coffee to fuel the next late-night draft ☕, you can do that here: Buy Me a Coffee — your support (big or tiny) means the world.

Appreciate you taking the time. Let's keep chatting about tech, life hacks, and whatever comes next! ❤️